Using the SMILES notation for each drug, all currently available ADMET parameters were calculated with the ADMETsar 3.0 platform. Frequency distributions were then generated for several of the computed parameters, and the resulting plots are presented below. Both the frequency distribution of a variable and its fit to a Gaussian curve can be computed with a short Python script.
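A minimal sketch of such a script follows; it is not the original script used to produce the plots. It bins a list of values into fixed-width intervals and estimates the Gaussian parameters from the sample mean and standard deviation (a maximum-likelihood estimate, assumed here in place of a least-squares curve fit). The `mw` values, the bin width, and all function names are illustrative assumptions.

```python
import math
import statistics
from collections import Counter


def frequency_distribution(values, bin_width):
    """Return {bin_start: relative frequency in %} for fixed-width bins."""
    counts = Counter(math.floor(v / bin_width) * bin_width for v in values)
    total = len(values)
    return {start: 100.0 * n / total for start, n in sorted(counts.items())}


def fit_gaussian(values):
    """Estimate Gaussian parameters (mean, population SD) from the data."""
    return statistics.fmean(values), statistics.pstdev(values)


def gaussian_pdf(x, mu, sigma):
    """Probability density of a normal distribution at x."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


# Hypothetical molecular weights (Da), for illustration only.
mw = [151.2, 180.2, 206.3, 285.3, 310.4, 322.0, 334.4, 361.4, 420.5, 505.6]
width = 100
hist = frequency_distribution(mw, width)
mu, sigma = fit_gaussian(mw)

for start, pct in hist.items():
    # Expected % in the bin under the fitted curve: density at bin centre * width.
    expected = 100.0 * width * gaussian_pdf(start + width / 2, mu, sigma)
    print(f"{start:5.0f}-{start + width:<5.0f} observed {pct:5.1f}%  fitted {expected:5.1f}%")
```

Estimating the parameters from moments keeps the sketch dependency-free; a least-squares fit to the binned frequencies would give slightly different values when the data are skewed.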

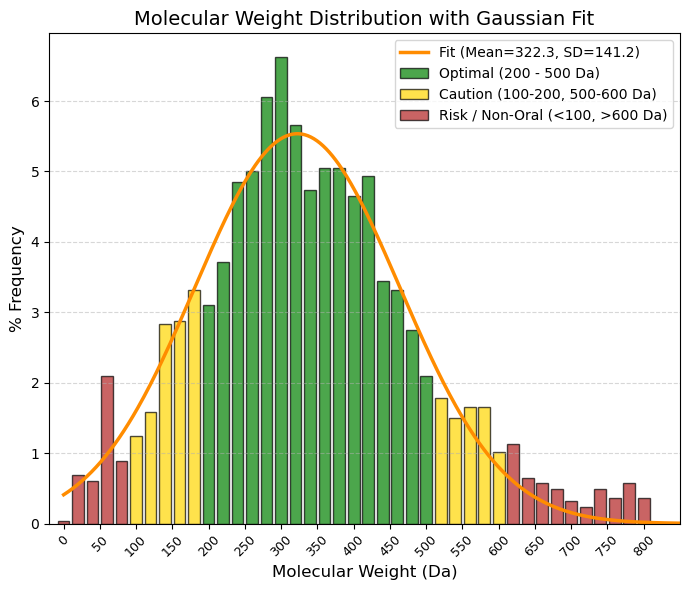

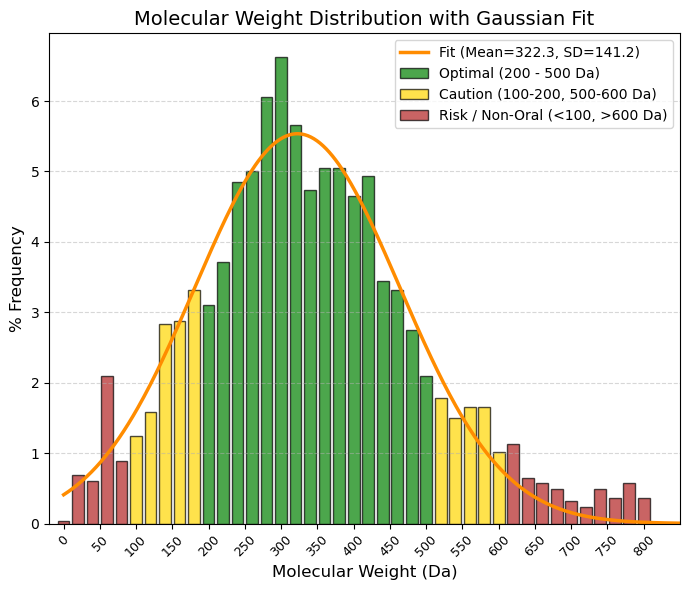

MW: Molecular weight. Unit: Da.

🟢 GREEN, 200 to 500 Da, Optimal (Lipinski Zone). This is where specificity and bioavailability intersect. Your average (322 Da) falls right in the middle of this zone.

🟡 YELLOW, 100 to 200 Da and 500 to 600 Da, Caution/Limits. (< 200): “Fragments.” They are often too small to have high potency (they detach from the receptor). (500 to 600): Gray zone (“Beyond Rule of 5”). Many modern drugs (such as antivirals or chemotherapy agents) are here. They can be given orally, but with difficulty.

🔴 RED, < 100 Da and > 600 Da, Atypical / Risk. (< 100): Too simple (salts, solvents, gases). (> 600): Serious oral absorption problems. They are usually injectables (biologics, large peptides) or very complex natural products.

80% of values lie between 170.1 Da (10th percentile) and 484.2 Da (90th percentile).
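The 10th and 90th percentiles quoted for each parameter bracket the central 80% of the data. A minimal sketch of that calculation with the standard library is shown below; the function name and the choice of the `"inclusive"` interpolation method are assumptions, and the original analysis may have used a different percentile convention.

```python
import statistics


def central_80_percent(values):
    """Return the (10th, 90th) percentiles, which bracket the central 80% of values."""
    deciles = statistics.quantiles(values, n=10, method="inclusive")
    return deciles[0], deciles[-1]


# Hypothetical data, for illustration only.
sample = list(range(0, 101, 10))
p10, p90 = central_80_percent(sample)
print(f"80% of values lie between {p10} and {p90}")
```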

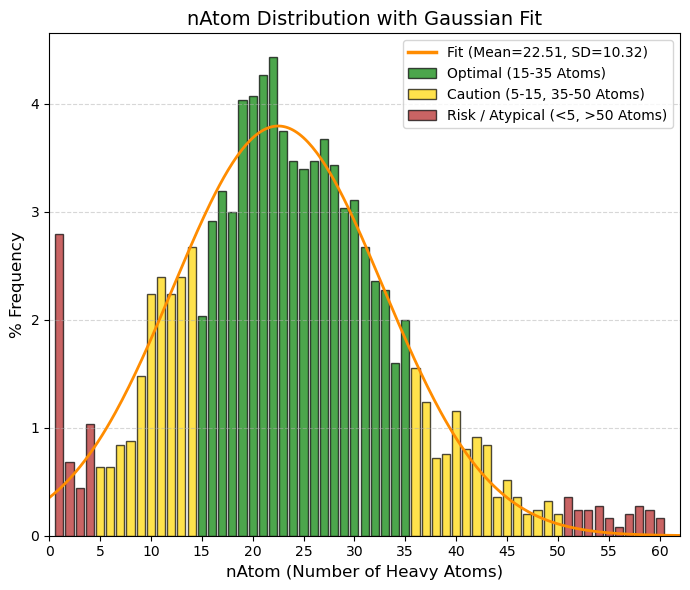

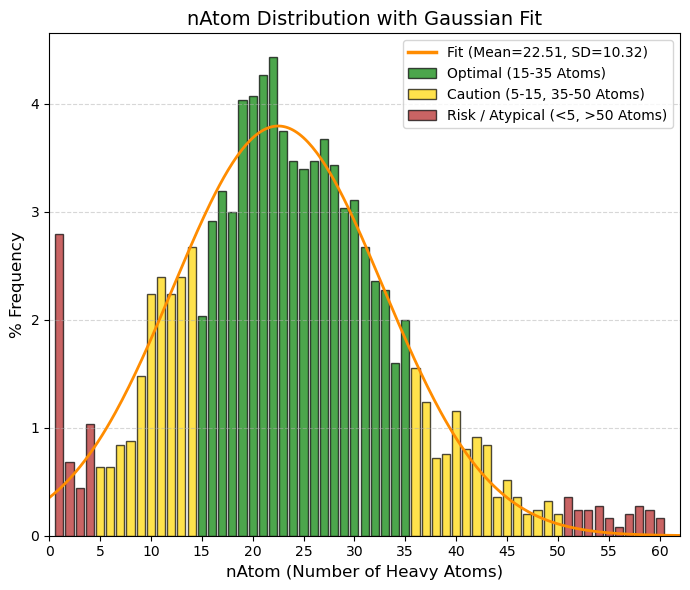

nAtom: Number of atoms (Heavy Atoms).

🟢 GREEN, 15 to 35, Optimal (“drug-like” zone). Corresponds to the center of the graph (the average is 22). These are molecules with sufficient complexity to bind to the receptor, but not so large as to be insoluble.

🟡 YELLOW, 5 to 15 and 35 to 50, Caution. (5 to 15): “Fragment-like.” Very small molecules (such as aspirin, nAtom = 13). They are useful but sometimes require high doses. (35 to 50): Large molecules. Acceptable, but steric and solubility problems begin to appear.

🔴 RED, < 5 and > 50, Atypical / Risk. (< 5): Simple ions, salts, gases (note the peak at value 1 in your data). (> 50): “Beyond Rule of 5.” Macrolides, large peptides, etc. Difficult oral administration.

80% of values lie between 11.4 (10th percentile) and 32.4 (90th percentile).

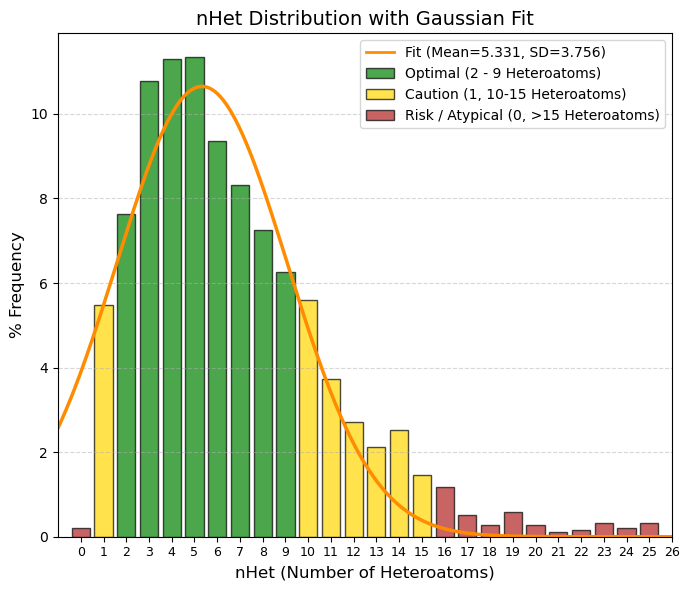

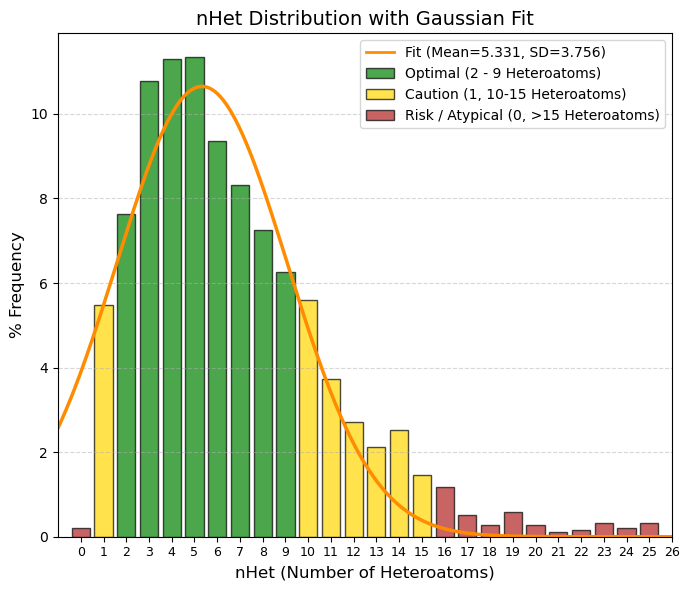

nHet: Number of heteroatoms.

🟢 GREEN, 2 to 9, Optimal Balance. This is where the vast majority of oral drugs are found. They have enough functional groups to be potent and soluble, but retain their lipophilic character. The average (5.3) is right here.

🟡 YELLOW, 1 and 10 to 15, Caution/Limits. 1: Very simple or very lipophilic molecules. Risk of rapid metabolism or low potency. 10-15: Complex molecules (antibiotics, protease inhibitors). Acceptable, but bioavailability begins to suffer.

🔴 RED, 0 and > 15, Risk / Atypical. 0: Pure hydrocarbons. Extremely rare in drugs (except inhaled general anesthetics). >15: Excessively polar or large. Difficult to absorb orally.

80% of values lie between 1.7 (10th percentile) and 10.7 (90th percentile).

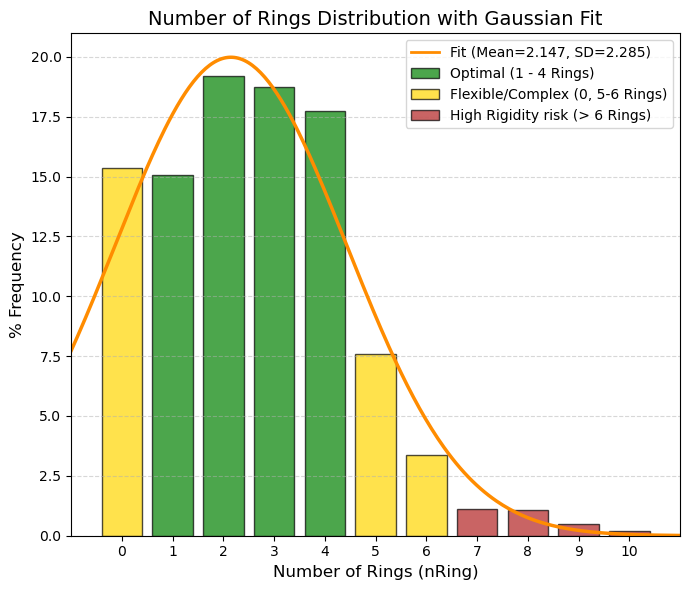

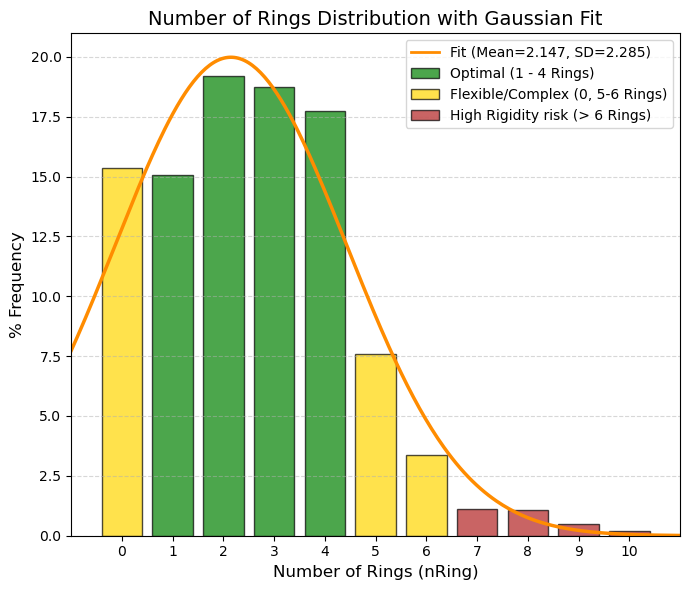

nRing: Number of rings.

🟢 GREEN, 1 to 4, Ideal Zone. Rings provide the rigidity needed to fit into the active site without inflating lipophilicity or molecular weight. Almost 70% of the data is concentrated here.

🟡 YELLOW, 0 and 5 to 6, Caution. 0 (Acyclic): These account for 15%. Very flexible molecules (such as metformin). They work, but high flexibility sometimes hinders affinity (entropic penalty). 5-6: Steroids or complex structures. Risk of low solubility.

🔴 RED, > 6, High Risk. Very rigid and heavy molecules. Difficult to synthesize and often with serious solubility problems (“Brick dust”).

80% of values lie between 0 (10th percentile) and 5 (90th percentile).

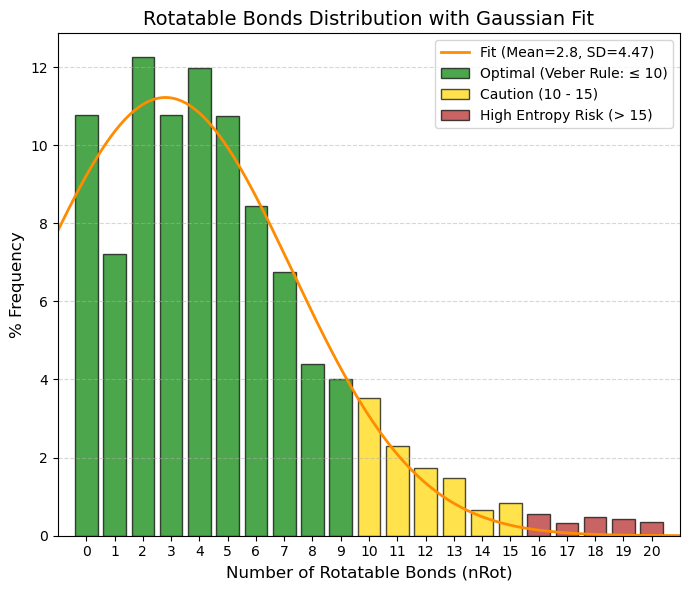

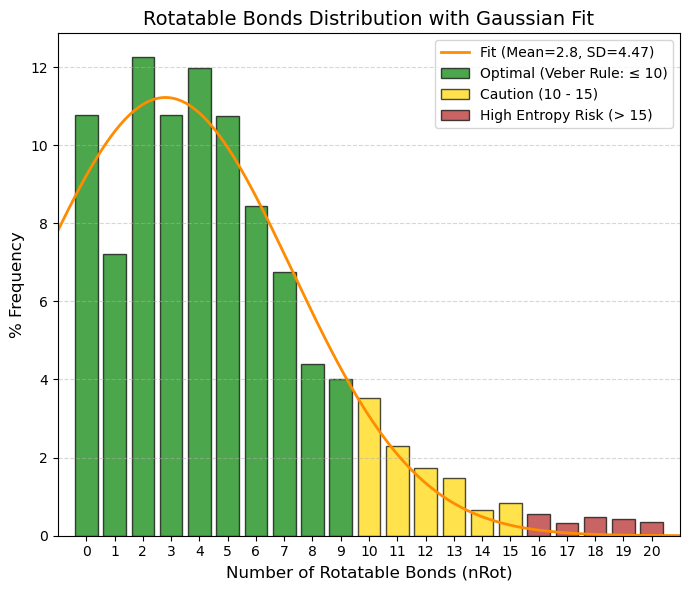

nRot: Number of rotatable bonds.

🟢 GREEN, 0 to 9, Optimal (Veber's Rule). This is where oral bioavailability is highest. The average (2.8) and the vast majority of data (almost 80%) fall within this range. The 0 to 5 range is particularly “sweet” for potent oral drugs.

🟡 YELLOW, 10 to 15, Caution/Limit. The molecule begins to be too flexible. Intestinal absorption declines significantly. It is still viable, but requires high intrinsic potency to compensate for the loss of entropy.

🔴 RED, > 15, High Risk. It is very rare to see oral drugs here. They are usually linear peptides or molecules that only work by injection, as the liver destroys them or they do not cross the membrane.

80% of values lie between 0 (10th percentile) and 8 (90th percentile).

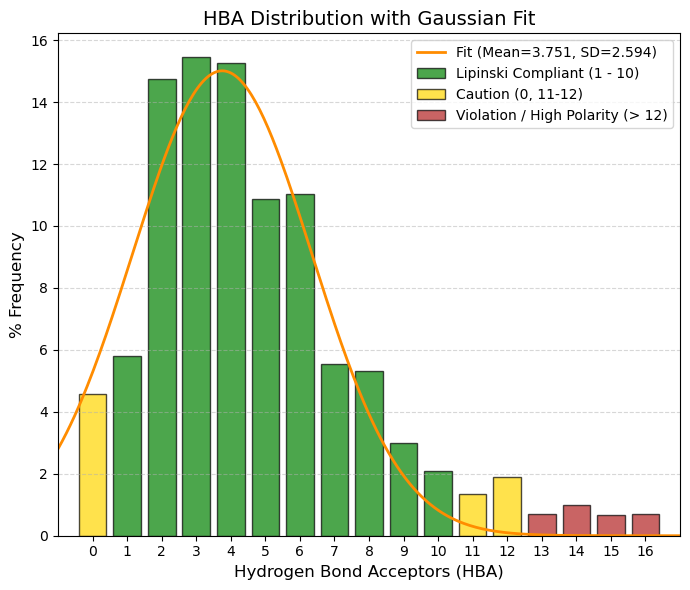

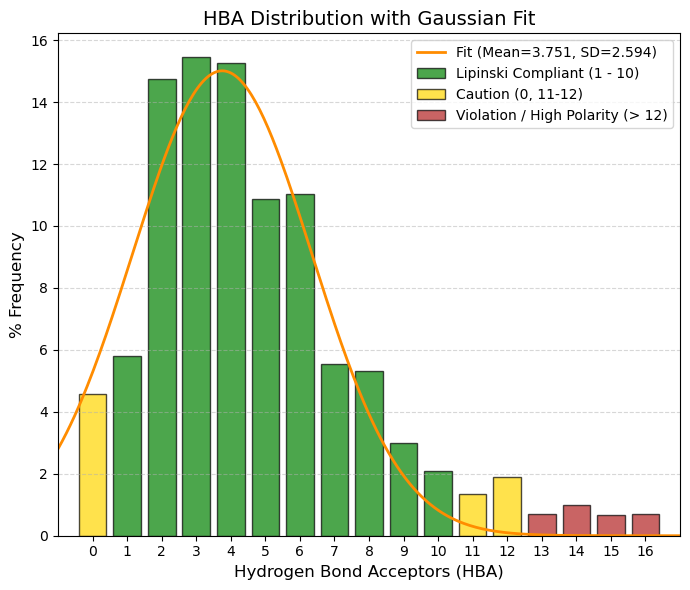

HBA: Number of hydrogen bond acceptors.

🟢 GREEN, 1 to 10, Complies with Lipinski (Safe Zone). Almost 90% of the data is here. This is the range where water solubility and fat permeability are balanced. The average (3.75) is the sweet spot.

🟡 YELLOW, 0 and 11 to 12, Caution. 0: No ability to accept hydrogen (very hydrophobic). May have solubility issues. 11-12: Slight violation of the Rule of 5. Acceptable if other parameters (such as weight) are low.

🔴 RED, > 12, High Risk (Clear Violation). Too many polar groups (too much oxygen/nitrogen). The molecule “clings” so tightly to water that it does not want to cross the lipid membrane of the intestine.

80% of values lie between 1 (10th percentile) and 8 (90th percentile).

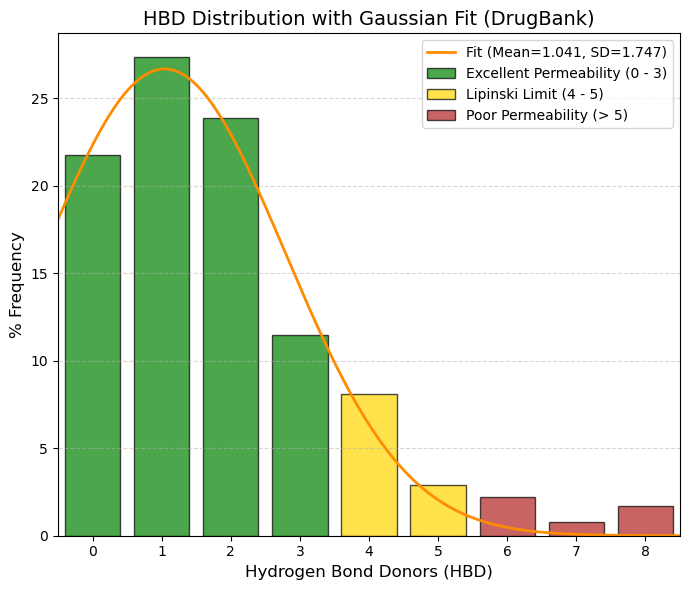

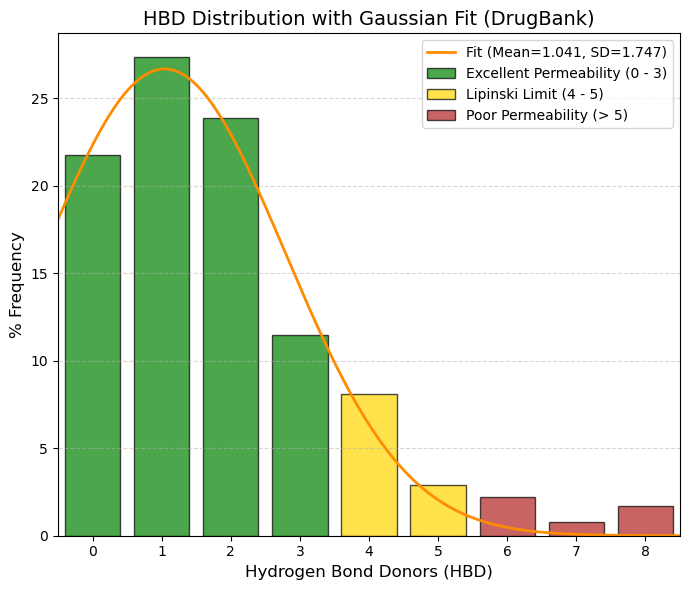

HBD: Number of hydrogen bond donors.

🟢 GREEN, 0 to 3, Zone of Excellence. Adding up the percentages, 84.3% of drugs are here. 0 (21.7%): Drugs without OH or NH. They cross membranes very quickly (if they are not too large). 1 (27.3%): The most common value.

🟡 YELLOW, 4 to 5, Lipinski's Limit. Still acceptable, but permeability begins to suffer. Only ~11% of your data falls here.

🔴 RED, > 5, Violation. Too many “sticky” polar groups (OH/NH). The molecule prefers to stay in water rather than cross the cell's fat. In your data, this is almost non-existent (< 2.5%).

80% of values lie between 0 (10th percentile) and 4 (90th percentile).

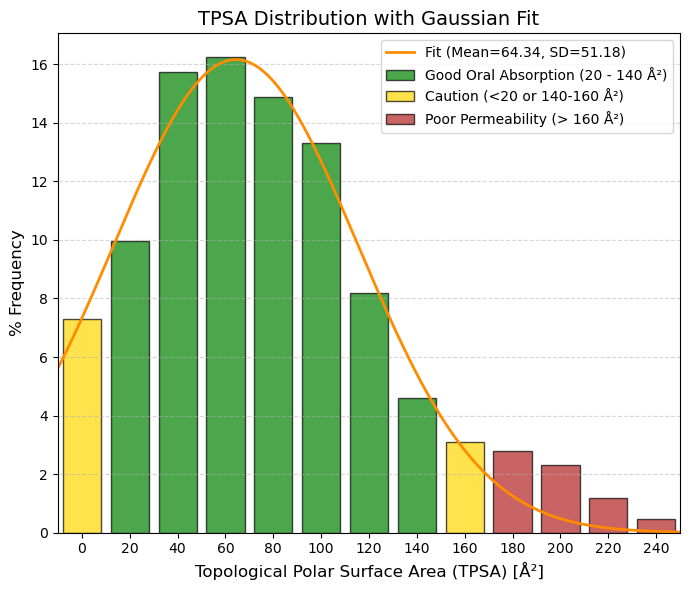

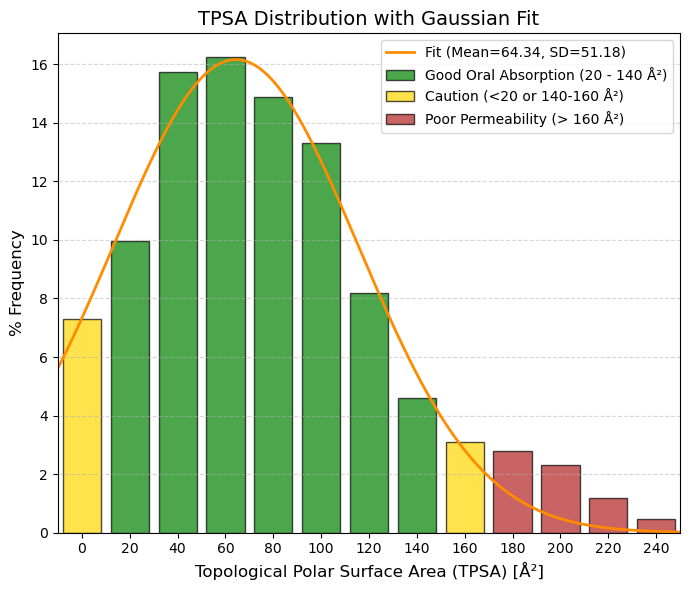

TPSA: Topological polar surface area.

🟢 GREEN, 20 to 140 Ų, Optimal Zone (Oral Absorption). This is where “the magic happens.” 20-90 Ų: High permeability (even brain). 90-140 Ų: Good intestinal absorption. Almost 85% of the data is here.

🟡 YELLOW, 0 to 20 and 140 Ų to 160 Ų, Caution. 0-20 Ų: Very “greasy” (non-polar) molecules. They are well absorbed but bind too much to proteins or are toxic. 140-160 Ų: Absorption begins to drop dramatically.

🔴 RED, > 160 Ų, Poor Absorption. The molecule is too polar to cross the lipid membrane of cells. Only viable for injectables or extracellular targets.

80% of values lie between 13 Ų (10th percentile) and 138 Ų (90th percentile).
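The nRot and TPSA zones above can be combined into a single check. The sketch below uses Veber's criteria as they are commonly cited (at most 10 rotatable bonds and TPSA of at most 140 Ų); the function name and the example values are assumptions, and the cut-off of 10 differs slightly from the 0 to 9 green zone used for nRot in this document.

```python
def passes_veber(n_rot: int, tpsa: float) -> bool:
    """Veber's criteria as commonly cited: nRot <= 10 and TPSA <= 140 A^2."""
    return n_rot <= 10 and tpsa <= 140.0


# Hypothetical descriptor values, for illustration only.
print(passes_veber(n_rot=3, tpsa=63.6))    # small, moderately polar molecule
print(passes_veber(n_rot=14, tpsa=180.0))  # flexible and very polar molecule
```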

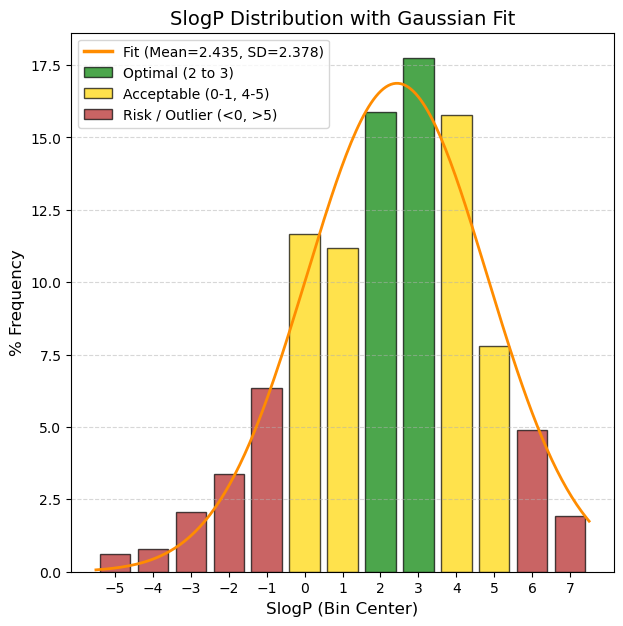

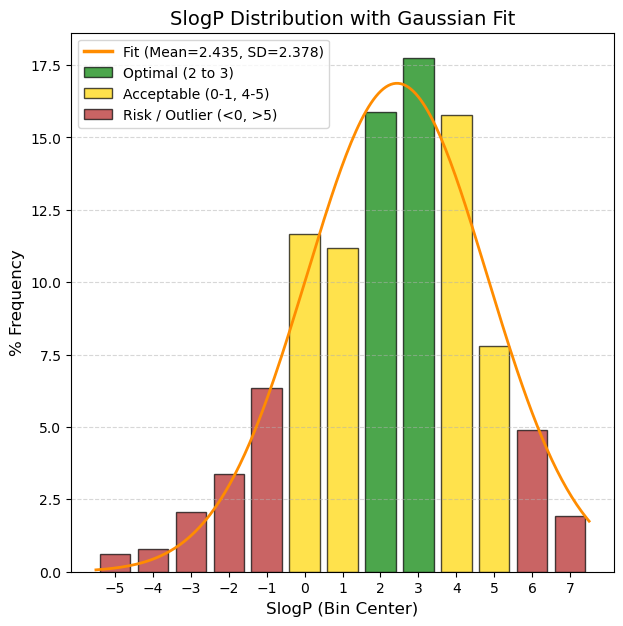

SlogP: The logarithm of the n-octanol/water distribution coefficient. Based on the RDKit calculation.

🟢 GREEN, 1.0 to 3.5, Optimal. This is the “sweet spot.” The molecule is fatty enough to cross membranes, but soluble enough to dissolve in blood. These are the best candidates.

🟡 YELLOW, 0 to 1.0 and 3.5 to 5.0, Acceptable/Caution. Low (0-1): Risk of being too polar; may have difficulty entering the cell unless using an active transporter. High (3.5-5): Meets Lipinski's rule (limit 5), but starts to have solubility and metabolic risk issues.

🔴 RED, < 0 and > 5.0, Critical / Discard. (< 0): Too hydrophilic (like sugar). Difficult passive intestinal absorption. Excreted very quickly by the kidney. (> 5): Too lipophilic (like wax). Accumulates in fat, potential toxicity, poor solubility.

80% of values lie between -0.6 (10th percentile) and 4.95 (90th percentile).
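The Lipinski limits referred to in the MW, HBA, HBD, and SlogP sections can be checked together. The sketch below counts violations of the Rule of 5 (MW ≤ 500 Da, HBD ≤ 5, HBA ≤ 10, logP ≤ 5); the `Descriptors` class, the function name, and the example values are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    mw: float    # molecular weight, Da
    hbd: int     # hydrogen bond donors
    hba: int     # hydrogen bond acceptors
    logp: float  # octanol/water logP (e.g. SlogP)


def lipinski_violations(d: Descriptors) -> list:
    """Return the list of Rule-of-5 limits that the molecule exceeds."""
    rules = {
        "MW > 500 Da": d.mw > 500,
        "HBD > 5": d.hbd > 5,
        "HBA > 10": d.hba > 10,
        "logP > 5": d.logp > 5,
    }
    return [name for name, violated in rules.items() if violated]


# Hypothetical descriptor values, for illustration only.
small = Descriptors(mw=322.0, hbd=1, hba=4, logp=2.5)
large = Descriptors(mw=650.0, hbd=6, hba=12, logp=5.5)
print(lipinski_violations(small))
print(lipinski_violations(large))
```

Returning the names of the violated rules, instead of a single pass/fail flag, makes it easy to see which property pushes a molecule into the yellow or red zones.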

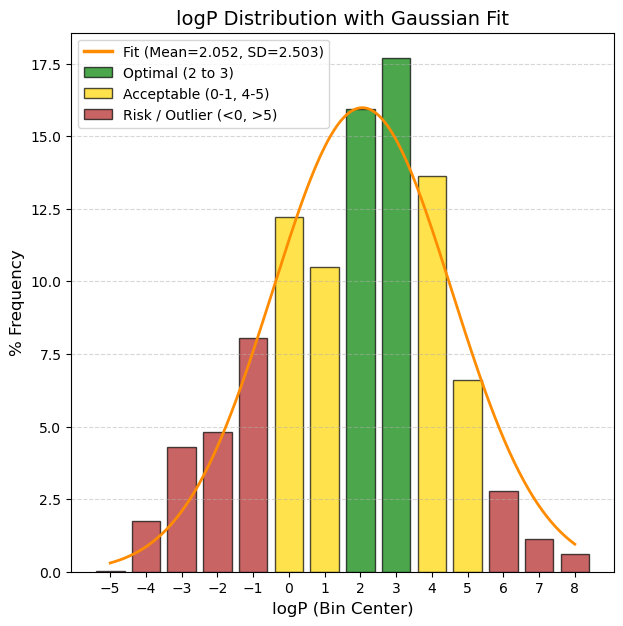

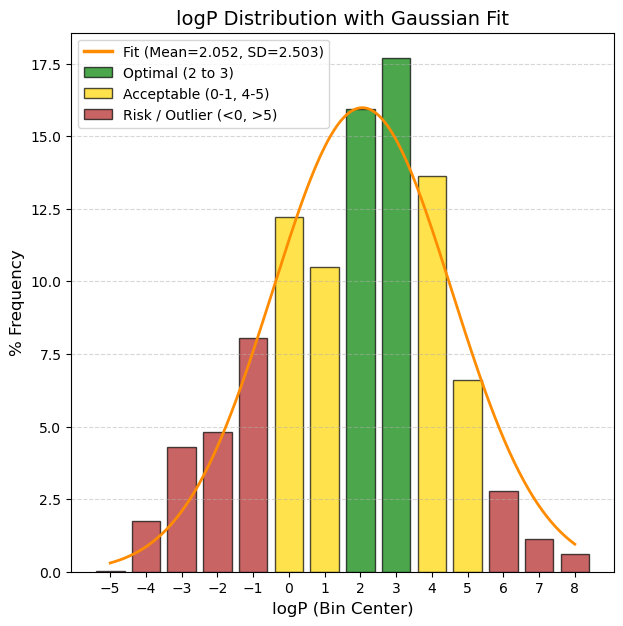

logP: The logarithm of the n-octanol/water distribution coefficient. Based on the CLMGraph model prediction.

🟢 GREEN, 2 to 3, Optimal/Good Solubility. The drug dissolves well in gastrointestinal fluids. This is the ideal range for simple oral formulations.

🟡 YELLOW, 0 <= x < 2 or 4 <= x <= 5: Low solubility. Very common in modern drugs, but requires formulation tricks (salts, special excipients).

🔴 RED, < 0 and > 5, Critical / Difficult development. (> 5): Practically insoluble. High risk of not being absorbed (“brick dust effect”). (< 0): Extremely hydrophilic. It is excreted very quickly or does not enter the cell.

80% of values lie between -1.26 (10th percentile) and 4.7 (90th percentile).

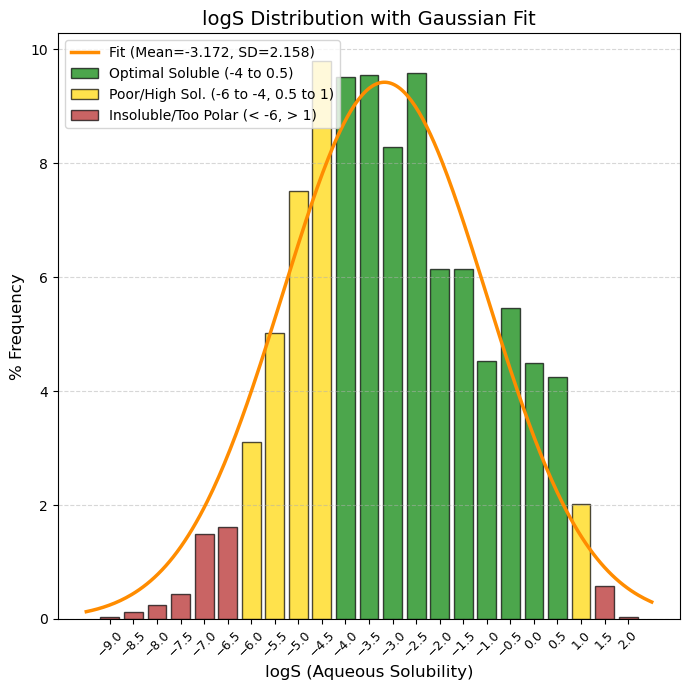

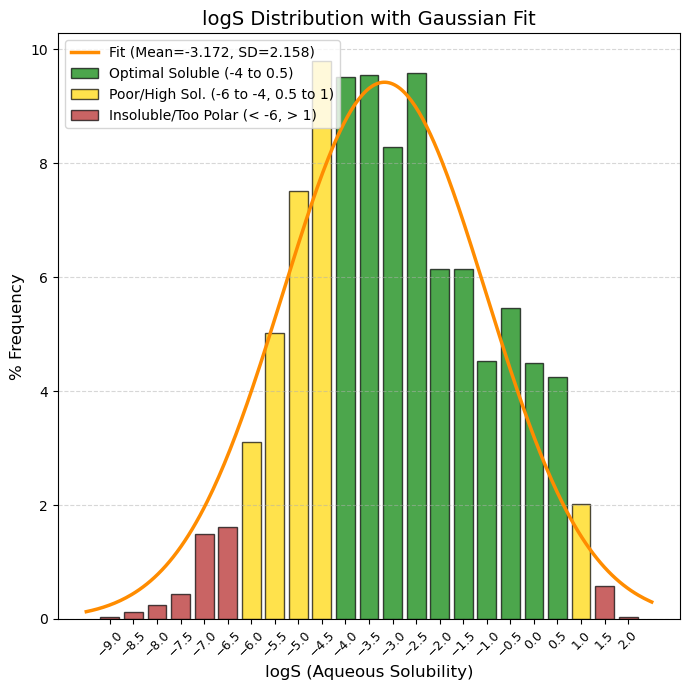

logS: Logarithm of water solubility value. Unit: log mol/L.

🟢 GREEN, -4 to 0.5, Optimal/Good Solubility. The drug dissolves well in gastrointestinal fluids. This is the ideal range for simple oral formulations.

🟡 YELLOW, -6 to -4 and 0.5 to 1, Caution. (-6 to -4): Low solubility. Very common in modern drugs, but requires formulation tricks (salts, special excipients). (> 0.5): Very soluble. Sometimes indicates that the molecule is too polar to cross membranes.

🔴 RED, < -6 and > 1, Critical / Difficult development. (< -6): Practically insoluble. High risk of not being absorbed (“brick dust effect”). (> 1): Extremely hydrophilic. It is excreted very quickly or does not enter the cell.

80% of values lie between -5.4 (10th percentile) and -0.5 (90th percentile).

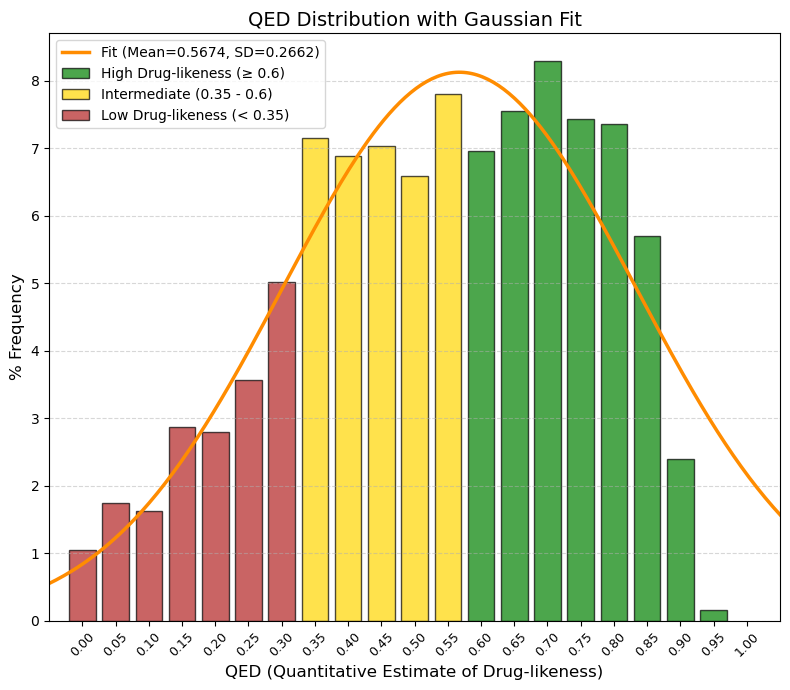

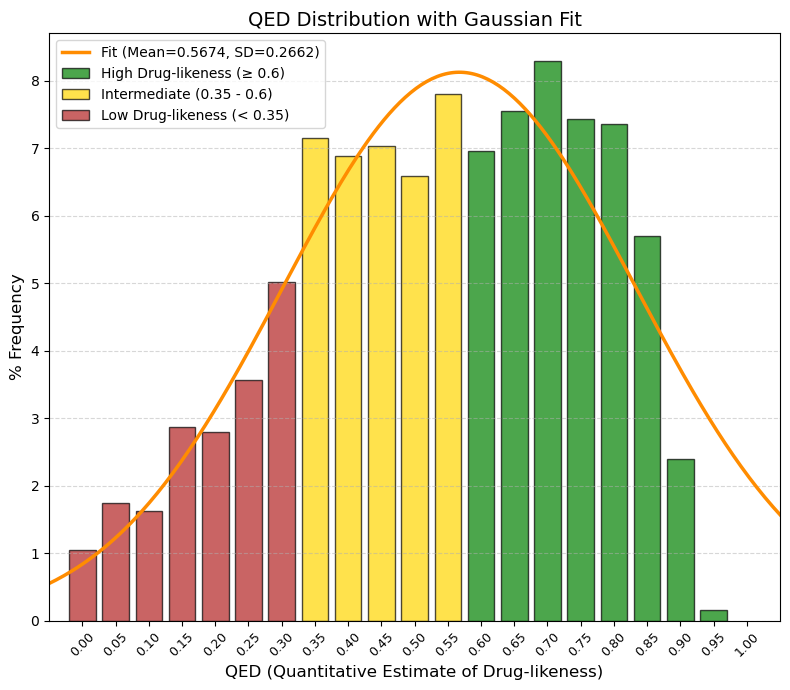

QED: The quantitative estimate of drug-likeness (QED) indicator.

🟢 GREEN, ≥ 0.60, High Drug-likeness (Ideal). Here are the “nice” molecules: easy to synthesize, good absorption, good distribution. In this data, the frequency rises sharply here (peak at 0.7).

🟡 YELLOW, 0.35 to < 0.60, Intermediate / Acceptable. This is where your average falls (0.56). These molecules are a little more complex, perhaps heavier or with more polar groups, but perfectly viable as drugs.

🔴 RED, < 0.35, Low Drug-likeness (Complex/Difficult). Very large, very flexible molecules or molecules with poor physicochemical properties. They usually require injection or very complex formulations.

80% of values lie between 0.26 (10th percentile) and 0.81 (90th percentile).

Bickerton, G., Paolini, G., Besnard, J. et al. Quantifying the chemical beauty of drugs. Nature Chem 4, 90-98 (2012). 10.1038/nchem.1243

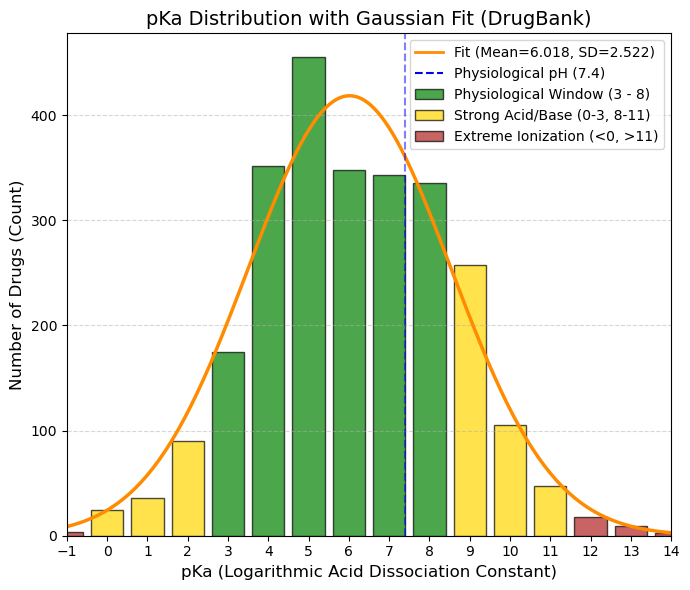

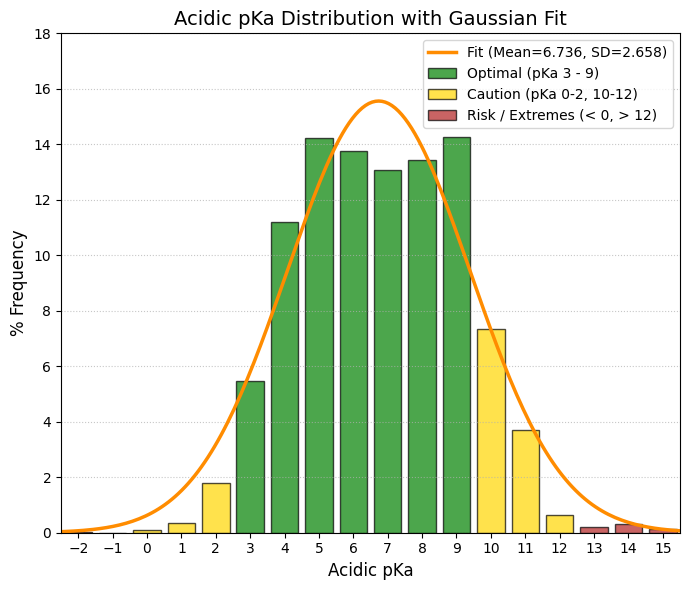

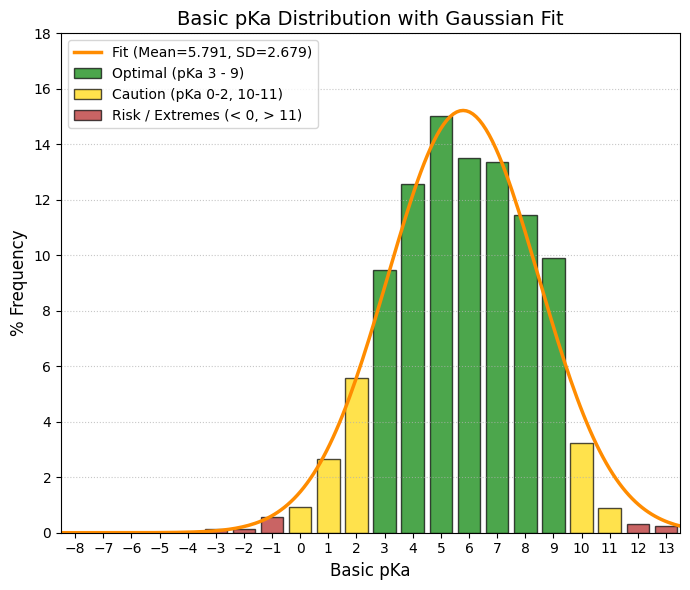

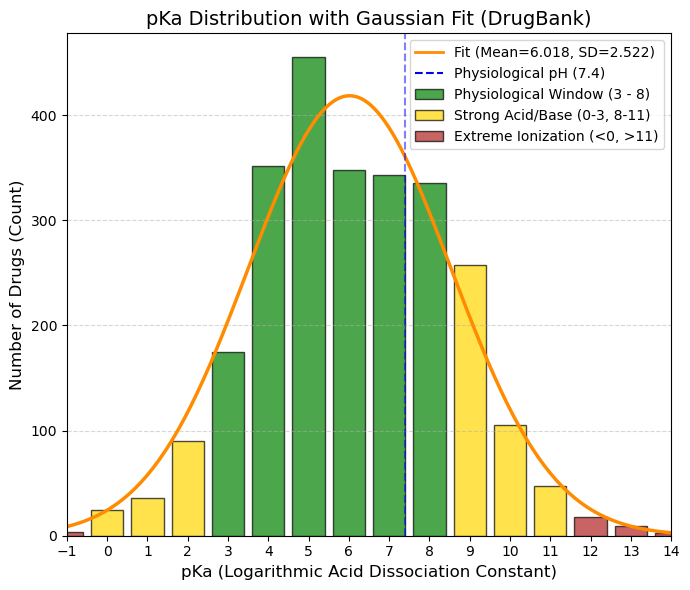

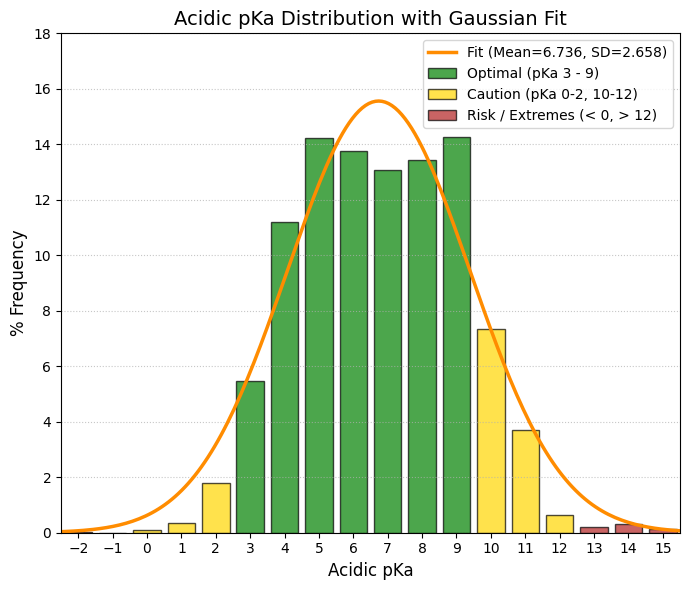

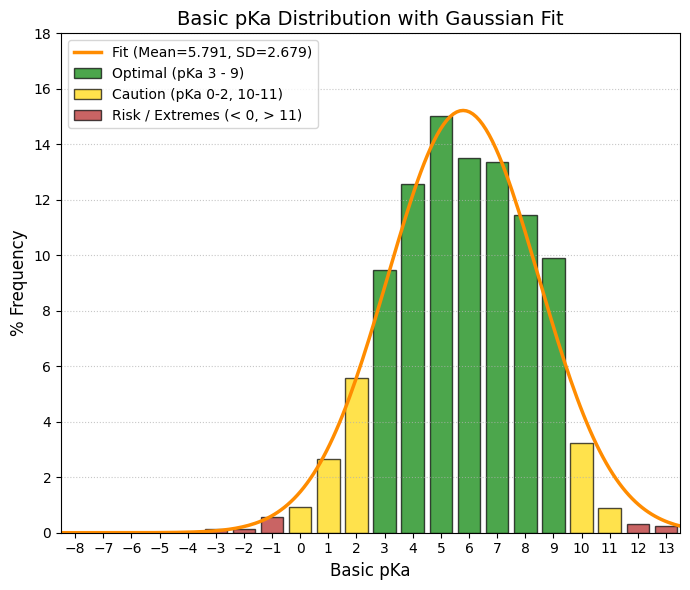

pKa: The logarithmic acid dissociation constant.

🟢 GREEN, 3 to 8, Gold Zone (Physiological Window). This is where the bulk of the data is concentrated (the values of 300-400 drugs). These drugs change their ionization state within the pH range of the human body (stomach pH 2 -> blood pH 7.4).

🟡 YELLOW, 0 to 3 and 8 to 11, Caution/Specific. 0-3: Strong acids. They are almost always ionized at physiological pH (aspirin, with pKa ~3.5, sits just above this range). Good for the stomach, but can cause irritation. 8-11: Strong bases (such as antidepressants/beta-blockers). They are well absorbed in the intestine, but can become trapped in lysosomes.

🔴 RED, < 0 and > 11, Extremes (Permanent Ionization). Here the drug is almost always 100% ionized. Very difficult to cross membranes by passive diffusion. They usually require active transport or injection.

90% of values lie between 2.12 (5th percentile) and 10.06 (95th percentile).
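The link between pKa and ionization state across the body's pH range follows from the Henderson-Hasselbalch equation. The sketch below computes the ionized fraction of a monoprotic acid or base at a given pH; the function name is hypothetical, and molecules with several ionizable groups would need a fuller treatment.

```python
def ionized_fraction(pka: float, ph: float, kind: str) -> float:
    """Ionized fraction of a monoprotic acid or base (Henderson-Hasselbalch)."""
    if kind == "acid":
        return 1.0 / (1.0 + 10.0 ** (pka - ph))
    if kind == "base":
        return 1.0 / (1.0 + 10.0 ** (ph - pka))
    raise ValueError("kind must be 'acid' or 'base'")


# An acid with pKa 3.5 (the value quoted above for aspirin):
print(f"stomach, pH 2.0: {ionized_fraction(3.5, 2.0, 'acid'):.3f}")
print(f"blood,   pH 7.4: {ionized_fraction(3.5, 7.4, 'acid'):.4f}")
```

At pH equal to pKa the fraction is exactly 0.5, which is why compounds with pKa between 3 and 8 switch ionization state somewhere between the stomach and the blood.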

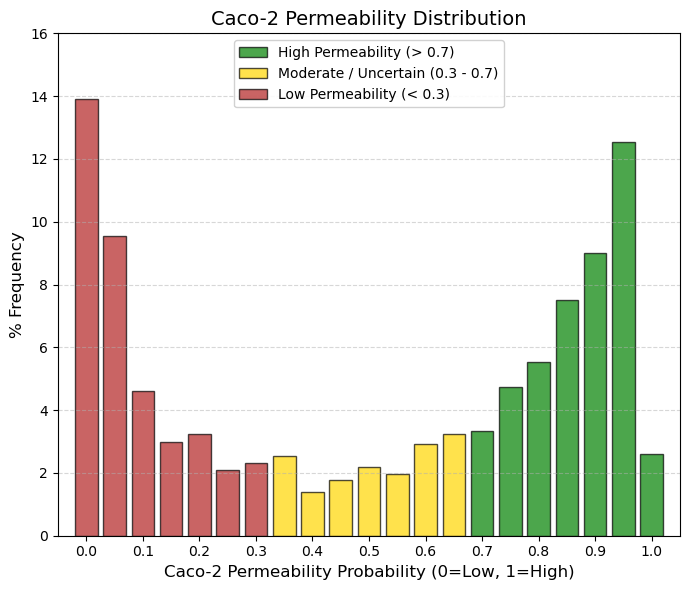

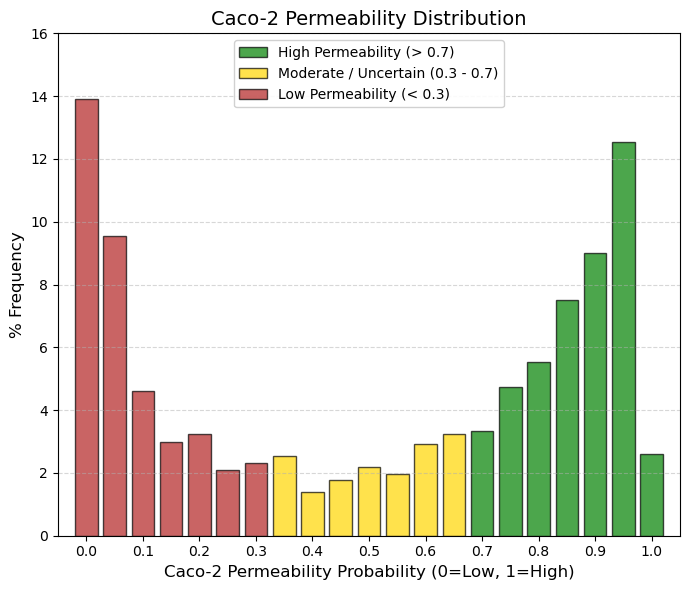

Caco-2: Caco2 cell permeability.

🟢 GREEN, > 0.7, High Permeability (Absorption Zone). These are drugs that easily cross the intestine by passive diffusion. This is ideal for an oral drug (the right peak is here).

🟡 YELLOW, 0.3 to 0.7, Gray Zone/Moderate. The drug has medium permeability or the model is uncertain. It may require higher doses or special formulations.

🔴 RED, < 0.3, Low Permeability. Here we have a huge peak (401 drugs at 0). Likely reasons: many are injectable drugs (which do not need to cross the intestine) and are very large or polar; others rely on active transport, which the Caco-2 model sometimes fails to capture.

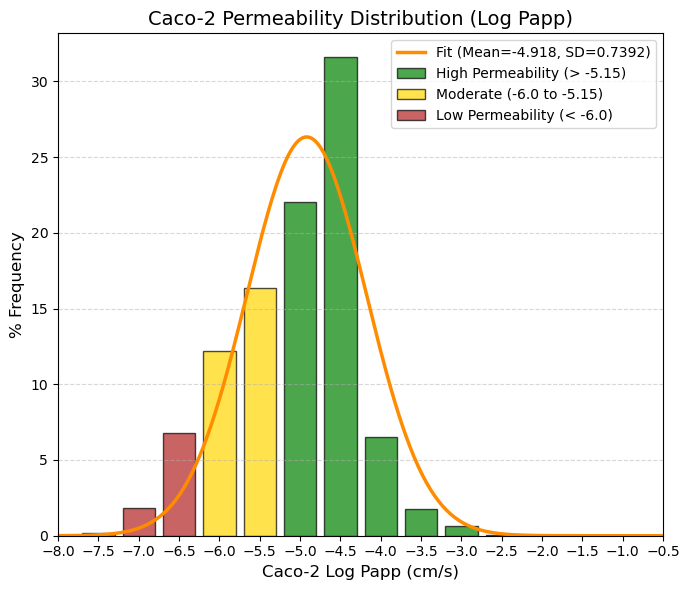

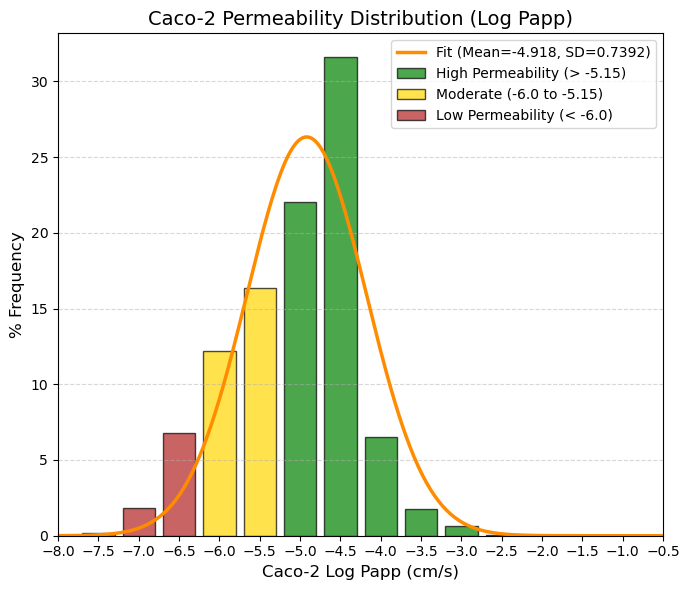

Caco_2_c: Caco2 cell permeability.

🟢 GREEN, > -5.15, High permeability. Corresponds to human absorption > 80%. Here you have a big spike: the -4.5 bin has a massive 31.6% and the -5 bin has 22%. Most drugs are found here.

🟡 YELLOW, -6.0 to -5.15, Moderate. Variable absorption (20-80%). Requires formulation or high doses. The ADMETsar model shows a transition here.

🔴 RED, < -6.0, Low permeability. Absorption < 20%. The data show a tail to the left (-6.5, -7) that probably corresponds to drugs that are administered parenterally or that act locally in the intestine.

80% of values lie between -6 (10th percentile) and -4.16 (90th percentile).

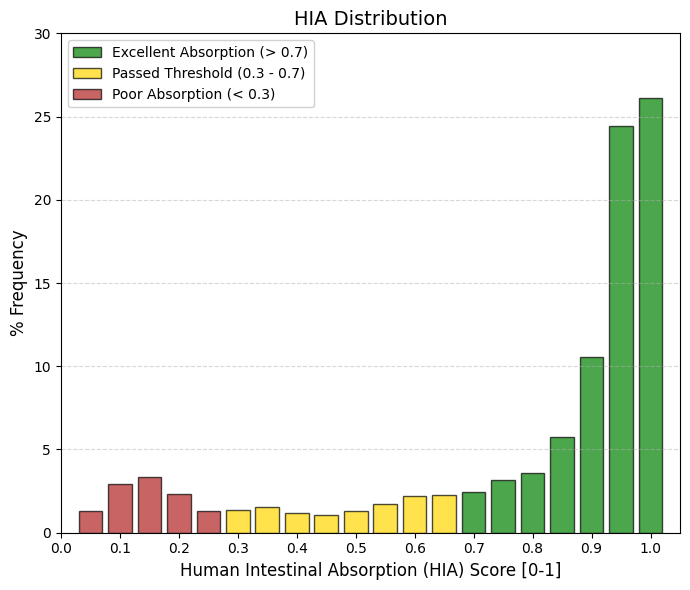

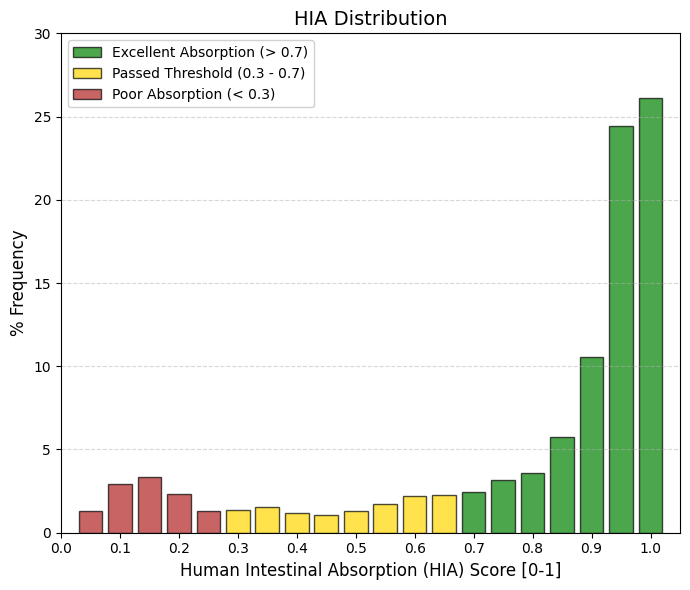

HIA: Human Intestinal Absorption.

🟢 GREEN, > 0.7, Optimal Absorption. This is where successful oral drugs “live.” Adding up the frequencies from 0.7 to 1, you have more than 70% of all drugs. The peak is at 1.0 (26%) and 0.95 (24.4%). This indicates that the practical standard for approved drugs is not the 30% cut-off, but absorption close to 100%.

🟡 YELLOW, 0.3 to 0.7, Acceptable Absorption (Pass Zone). They meet the study criterion (> 30%), but are not ideal. Only a small percentage of drugs fall here (~12%). They usually require higher doses to compensate for what is not absorbed.

🔴 RED, < 0.3, Poor Absorption. By definition, these are HIA- (negative). Adding up the low frequencies, you have a significant group (approx. 11-12%) in the 0.05-0.2 range. These are almost certainly intravenous, topical, or inhaled drugs.
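Several of the probability-type outputs in this document (HIA here, and others such as F50 and HLM) use the same 0.3 and 0.7 cut-offs. A minimal sketch of how the zone shares quoted above could be tallied is given below; the function names and the example probabilities are assumptions, and whether green means "good" depends on the parameter (for HLM, low values are the green zone).

```python
from collections import Counter


def zone(p: float, low: float = 0.3, high: float = 0.7) -> str:
    """Assign a predicted probability to a traffic-light zone."""
    if p > high:
        return "GREEN"
    if p < low:
        return "RED"
    return "YELLOW"


def zone_shares(probabilities, low: float = 0.3, high: float = 0.7) -> dict:
    """Return the percentage of values in each zone."""
    counts = Counter(zone(p, low, high) for p in probabilities)
    total = len(probabilities)
    return {z: 100.0 * counts[z] / total for z in ("GREEN", "YELLOW", "RED")}


# Hypothetical HIA probabilities, for illustration only.
hia = [0.95, 1.0, 0.8, 0.5, 0.1]
print(zone_shares(hia))
```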

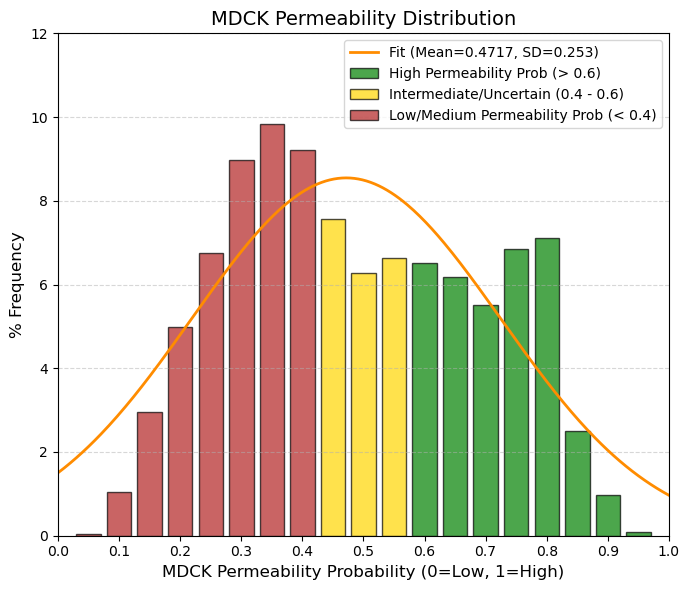

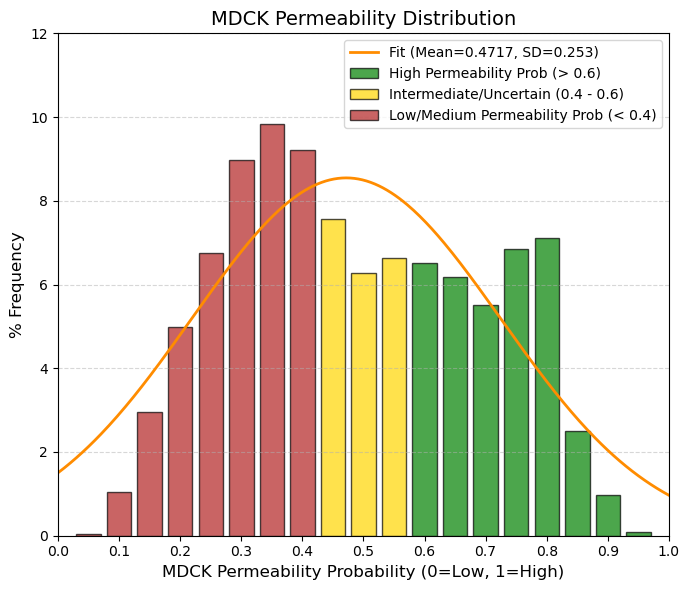

MDCK: Madin-Darby Canine Kidney cells (MDCK) Permeability.

🟢 GREEN, > 0.6, High Permeability (Clear Prediction). The model is confident that the compound exceeds the threshold of 8 × 10⁻⁶ cm/s. Adding up the frequencies, approximately 30-35% of drugs are here.

🟡 YELLOW, 0.4 to 0.6, Gray Zone/Uncertainty. This is where the average (0.47) falls. It is the most populated zone. The model cannot be sure whether it is high or low, or whether the compound has intermediate permeability. This is the “standard” behavior of an average drug.

🔴 RED, < 0.4, Medium-Low Permeability. The model confidently predicts that the drug is NOT highly permeable. This is common in drugs that act on peripheral receptors and we do not want them to cross all barriers (e.g., second-generation antihistamines).

80% of values lie between 0.24 (10th percentile) and 0.73 (90th percentile).

Probability of high permeability (High MDCK permeability): 0.6 to 1.0 → high permeability very likely.

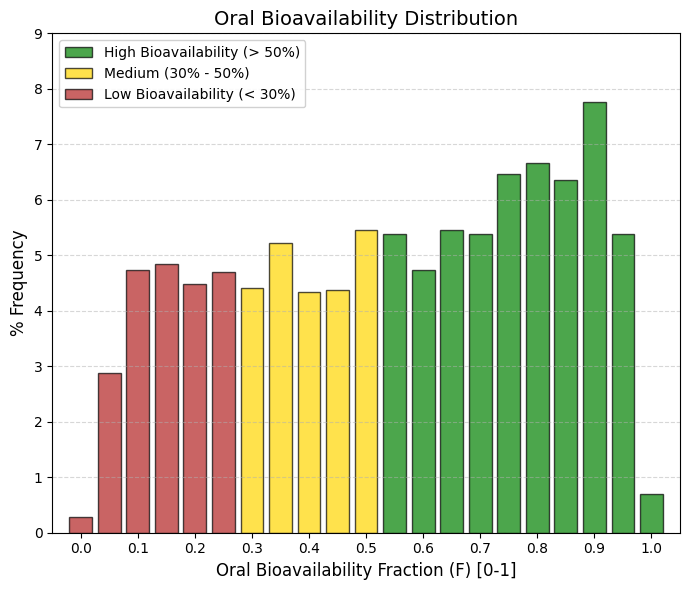

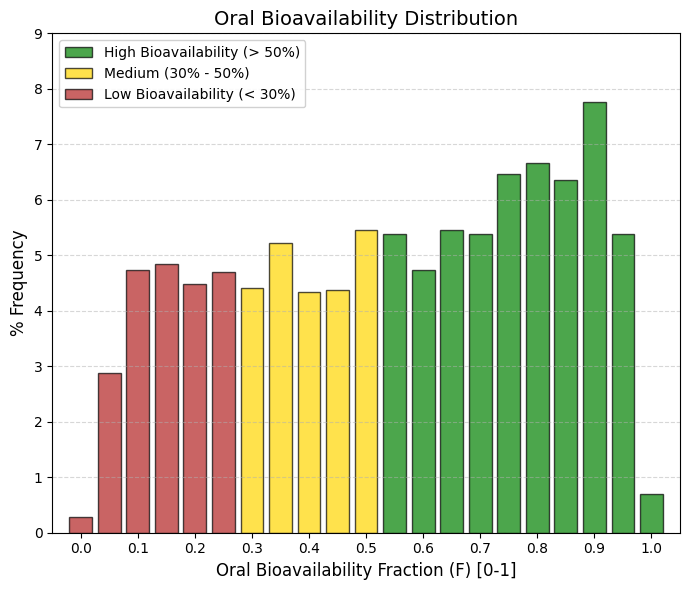

F50: Oral bioavailability (F50).

Bioavailability (F50) measures how much of the drug reaches the bloodstream intact after passing through the liver. The difference between the previous graph (HIA) and this one (F) is the first-pass effect (hepatic metabolism). Many drugs are well absorbed, but the liver destroys them before they can work.

🟢 GREEN, > 0.5, High Bioavailability (>50%). This group includes the majority (~54% of the data). These are robust drugs that survive the liver. The peak is at 0.9 (7.8%), indicating that the most successful drugs are those that manage to evade hepatic metabolism.

🟡 YELLOW, 0.3 to 0.5, Medium/Low. This corresponds to ~19% of the data. These are drugs that require higher doses because a significant portion is lost along the way.

🔴 RED, < 0.3, Very Low (<30%). Approximately 27% of drugs fall into this category. Note: Unlike other parameters, having a low F is not always a “failure.” Many drugs (such as statins or some antihypertensives) have low bioavailability but are very potent, so the small fraction that reaches the blood is sufficient.

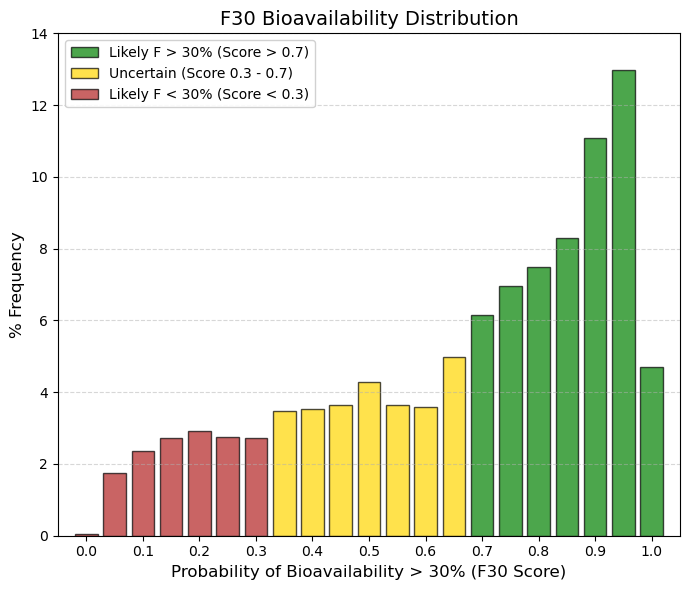

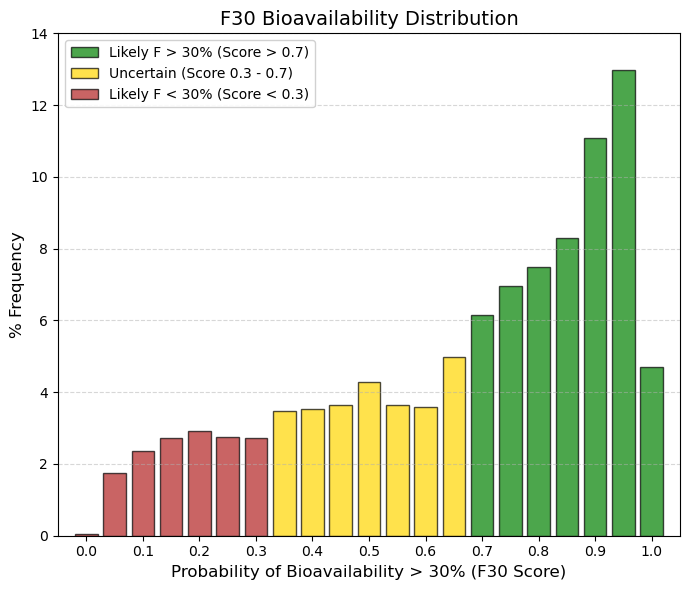

F30: Oral bioavailability (F30).

It is difficult to guarantee more than 50% bioavailability: drugs split roughly evenly around that threshold, so it is not a strict requirement for approval. By contrast, it is “easy” (and almost mandatory) to exceed 30%. Most approved drugs pass this test with flying colors.

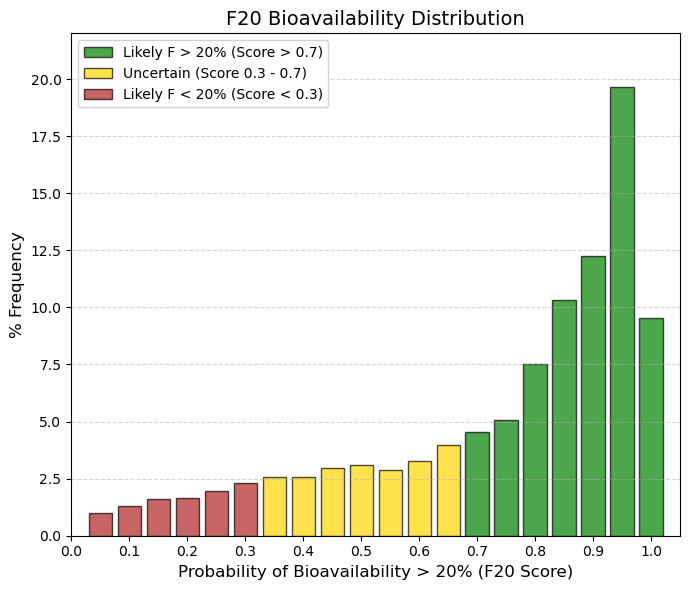

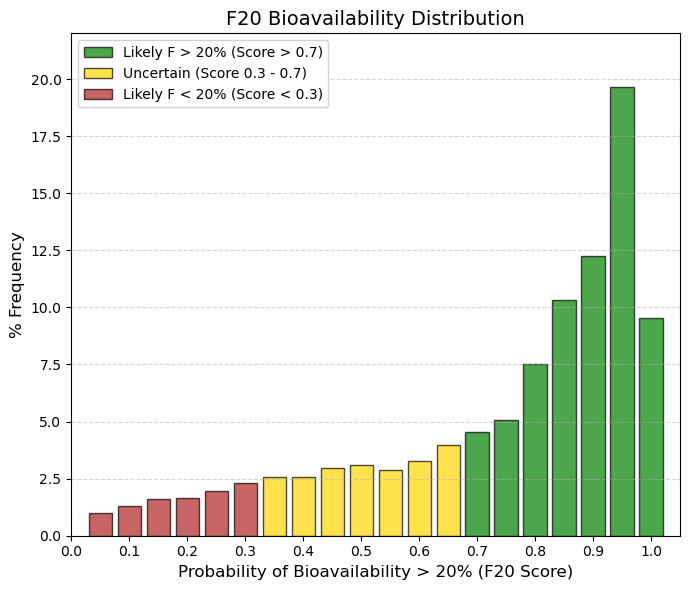

F20: Oral bioavailability (F20).

By lowering the requirement to F20 (Bioavailability > 20%), virtually all drugs “pass” the test. This means that for an FDA/EMA-approved drug, having less than 20% bioavailability is almost unacceptable (except for very potent drugs). The ADMETsar model reflects this and therefore assigns very high probabilities to most drugs.

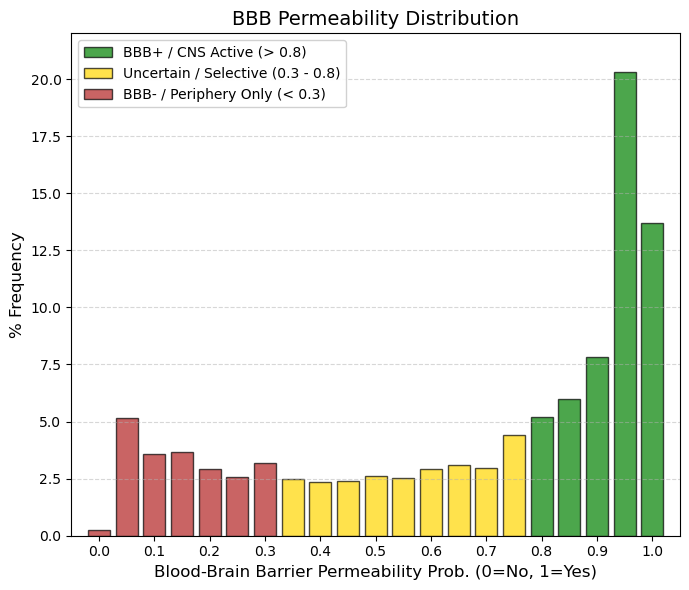

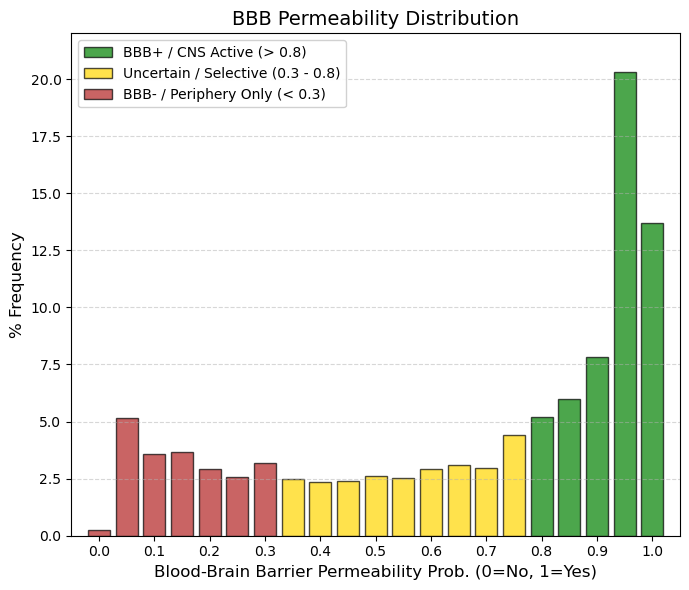

BBB: Blood-Brain Barrier Permeability.

The blood-brain barrier (BBB) protects the central nervous system (CNS) by separating brain tissue from the blood. It is formed mainly by the brain endothelium and blocks nearly 100% of large molecules and about 98% of small molecules from entering the CNS. Only certain water- and lipid-soluble molecules and substrates of selective transporters can cross it. The endothelium expresses a number of active transporters, such as glucose transporters and the efflux pump P-glycoprotein, which limits the entry of potentially neurotoxic lipophilic compounds.

🟢 GREEN, > 0.8, BBB+ (High Permeability). This accounts for ~47% of the data. These are molecules that easily enter the Central Nervous System (CNS). The peak at 0.95 suggests that many approved drugs are highly penetrant.

🟡 YELLOW, 0.3 to 0.8, Intermediate/Selective Zone. This is a very flat “no man's land” (low frequencies of 2-3%). These are probably drugs that partially enter or are substrates of efflux pumps (P-gp) that remove them.

🔴 RED, < 0.3, BBB- (Low Permeability). Here there is a small group (~15%) concentrated at 0 and 0.05. These are drugs that do not reach the brain. Ideal for systemic antibiotics or cardiovascular drugs, where neurotoxicity should be avoided.

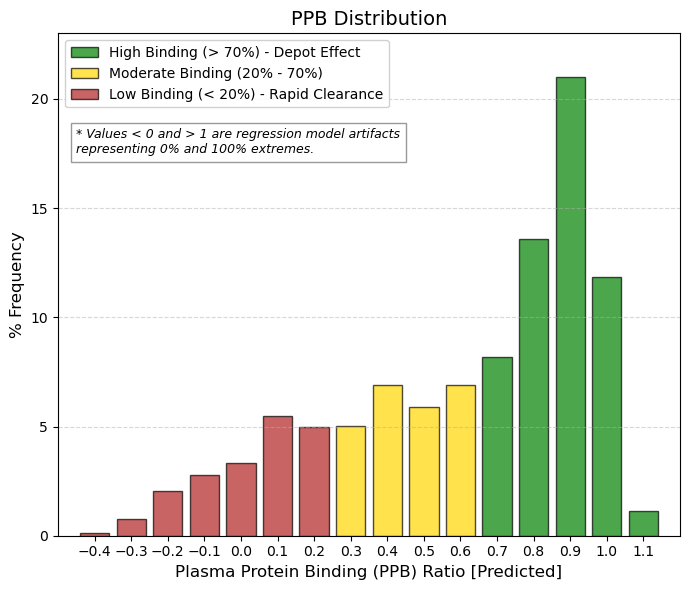

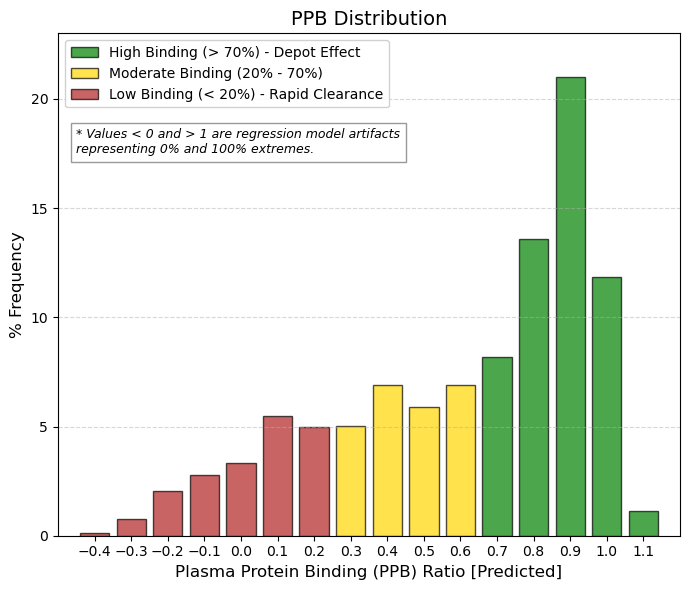

PPB: Plasma protein binding ratio.

Plasma protein binding is a very important pharmacokinetic property, which is expressed as the binding affinity of a drug to plasma proteins, and can effectively regulate the effective concentration of a drug at a pharmacological target. When a drug is absorbed by the body into the blood circulation, it selectively binds to plasma proteins. However, only the unbound portion of the drug can reach the specific target and produce therapeutic effects. Therefore, when a drug has a high affinity for plasma proteins, it means that it often requires a higher dose to reach a therapeutic concentration at the target and to exert its effect.

🟢 GREEN, > 0.7, High Binding (Standard). This is where most drugs are found (~55% of the data). The peak at 0.9 (21%) is the highest. Why? Because for a drug to be well absorbed (HIA+), it tends to be lipophilic, and albumin loves lipophilic compounds. It is a “toll” that must be paid for good oral absorption.

🟡 YELLOW, 0.2 to 0.7, Moderate Binding. Represents ~35% of drugs. These are more balanced compounds, with a higher free fraction, which allows for lower doses, but they tend to be eliminated more quickly.

🔴 RED, < 0.2, Low Binding / Artifacts. Values close to 0 or negative are rare (~10%). These are usually very polar (hydrophilic) drugs that are eliminated very quickly by the kidney unless they have special mechanisms.

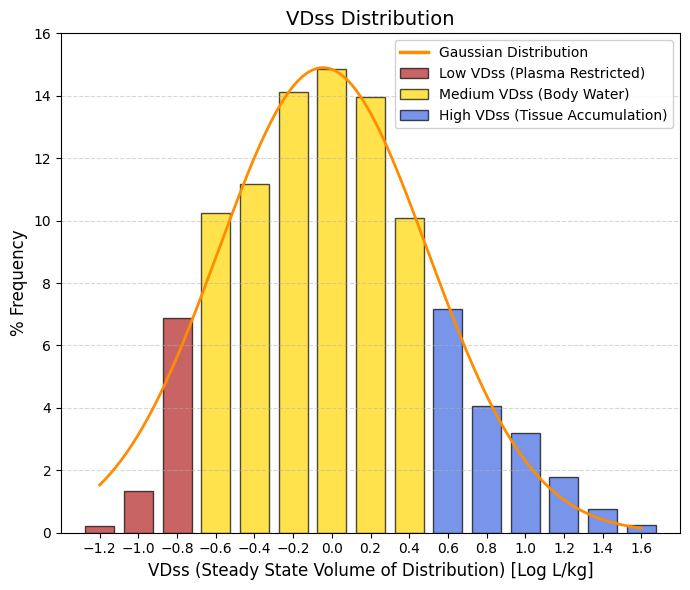

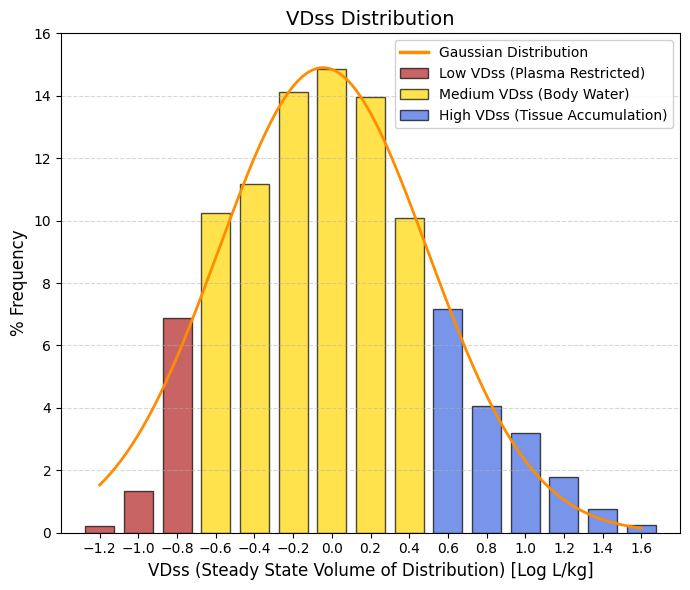

VDss: Steady state volume of distribution. Unit: log L/kg.

🔴 Low VDss, < -0.6, Confined to Plasma (< 0.25 L/kg). Drugs that do not leave the blood (too large or too tightly bound to albumin). They represent the left tail of the bell curve. Useful for blood infections, but poor for reaching deep tissues.

🟡 Medium VDss, -0.6 to 0.5, Total Body Water (0.25 - 3 L/kg). This is where the bulk of the bell curve is (including the mean). The drug is well distributed throughout the body's water and enters the tissues moderately. This is standard behavior.

🔵 High VDss, > 0.5, Tissue Accumulation (> 3 L/kg). Lipophilic drugs that “disappear” from the blood and hide in fat or muscle (e.g., antidepressants, antipsychotics). They represent the right tail. They have very long half-lives.
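The correspondence between the logarithmic cut-offs and the volumes in L/kg can be verified directly. The sketch below assumes the predicted value is log10 of VDss in L/kg, which is what the quoted cut-offs imply (10^-0.6 ≈ 0.25 L/kg and 10^0.5 ≈ 3.2 L/kg); the function names are hypothetical.

```python
def vdss_l_per_kg(log_vdss: float) -> float:
    """Convert a log10(L/kg) prediction back to L/kg."""
    return 10.0 ** log_vdss


def vdss_zone(log_vdss: float) -> str:
    """Classify a log10 VDss value using the cut-offs given in the text."""
    if log_vdss < -0.6:
        return "low (confined to plasma)"
    if log_vdss > 0.5:
        return "high (tissue accumulation)"
    return "medium (total body water)"


for value in (-0.6, 0.0, 0.5):
    print(f"log VDss {value:+.1f} = {vdss_l_per_kg(value):.2f} L/kg, {vdss_zone(value)}")
```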

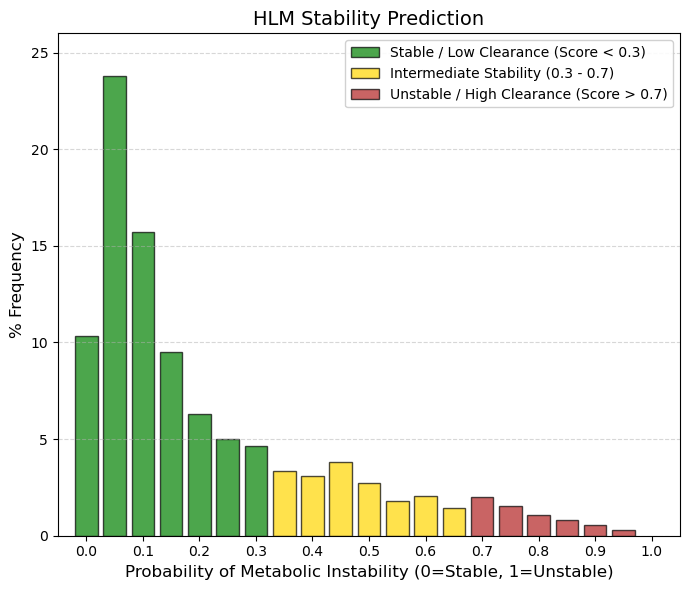

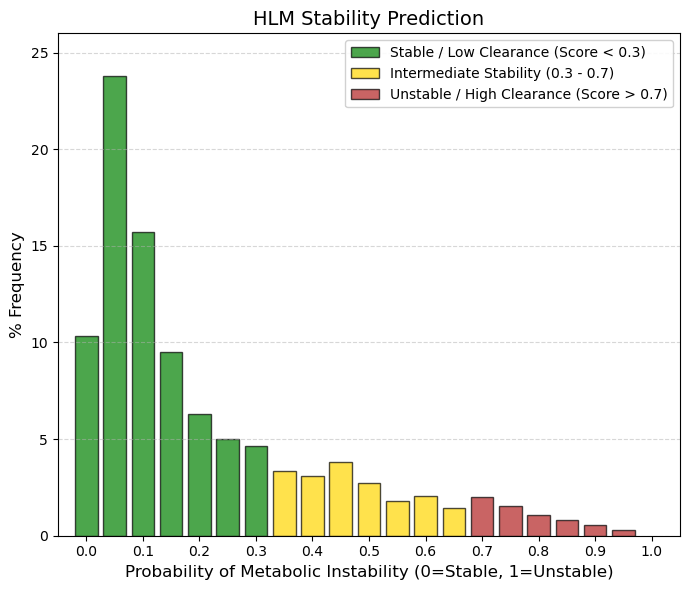

HLM: Human liver microsomal stability predictor.

🟢 GREEN, < 0.3, Stable (Slow/Moderate Metabolism). Approximately 65% of drugs fall into this category. They have a low probability of being rapidly degraded by hepatic microsomes. This allows for a reasonable half-life for dosing.

🟡 YELLOW, 0.3 to 0.7, “Intermediate Zone.” Represents ~29% of drugs. These are compounds that undergo significant but manageable metabolism.

🔴 RED, > 0.7, Unstable (Rapid Metabolism). This is the least populated zone (~6%). Drugs that fall here disappear very quickly from the blood. They are usually prodrugs (designed to break down) or ultra-short-acting drugs (such as some anesthetics or hypnotics).

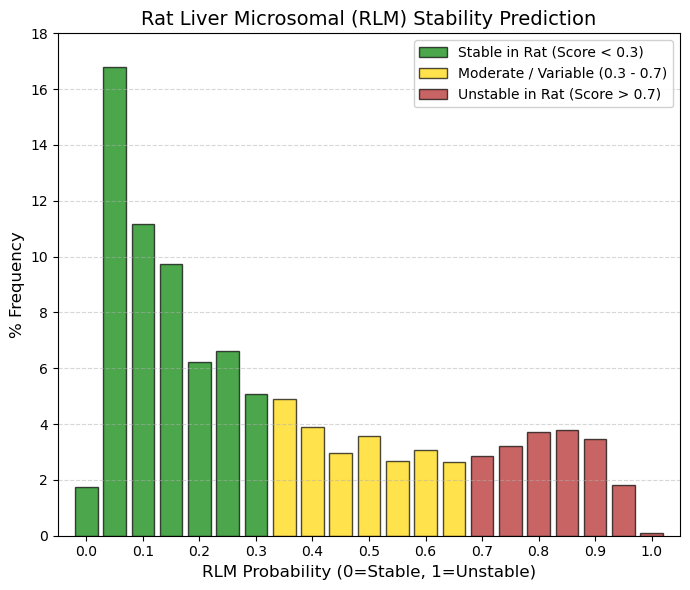

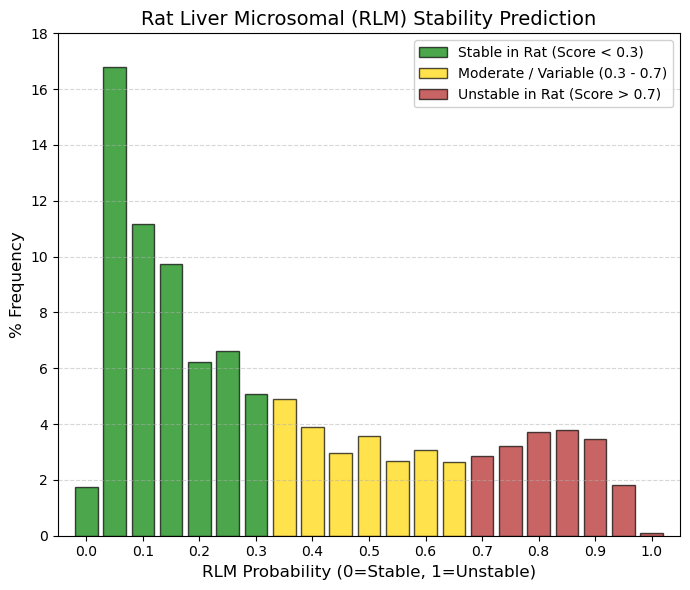

RLM: Rat liver microsomal stability predictor.

In HLM (humans), the vast majority of drugs were concentrated between 0 and 0.15. In RLM (rats), the peak remains at 0.05 (16.8%), but the tail to the right is much thicker. Look at the 0.8-0.9 range: in humans there was almost nothing (~1%), whereas in rats almost 11% accumulates in that zone of instability. Rats have a much faster metabolism and a more aggressive enzyme profile (cytochromes P450) than humans, so many drugs that are stable in humans (low HLM) appear unstable in rats (high RLM). This is a classic “false negative” problem in drug development: compounds are discarded because rats destroy them, even though they would have worked well in humans.

🟢 GREEN, < 0.3, Stable in Rats. Groups ~50% of the data (in HLM it was 65%). If a drug is stable here, it will almost certainly be stable in humans. This is the ideal scenario for preclinical trials.

🟡 YELLOW, 0.3 to 0.7, Moderate Metabolism. A very populated area (~30%). Many drugs that require dose adjustments between species (allometric scaling) are found here.

🔴 RED, > 0.7, Unstable in Rats. There is a significant group here (~19-20%). Many of these drugs are approved. This shows that a drug can be approved even if rats metabolize it quickly, as long as it is stable in humans (HLM).

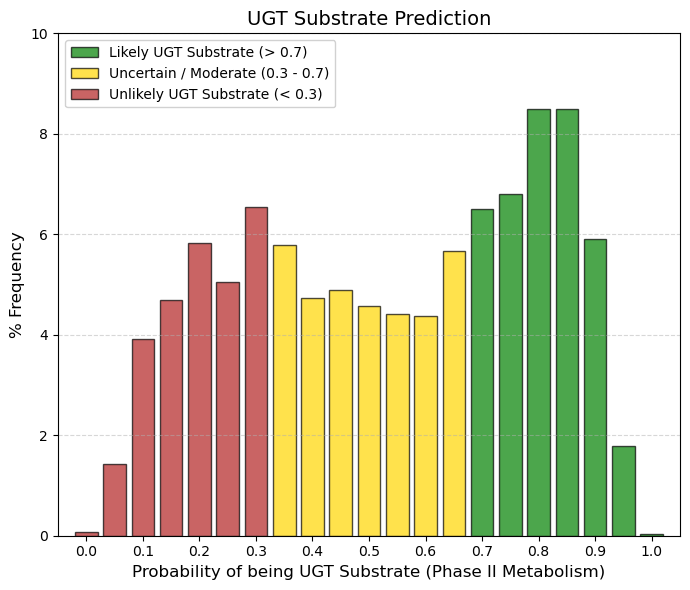

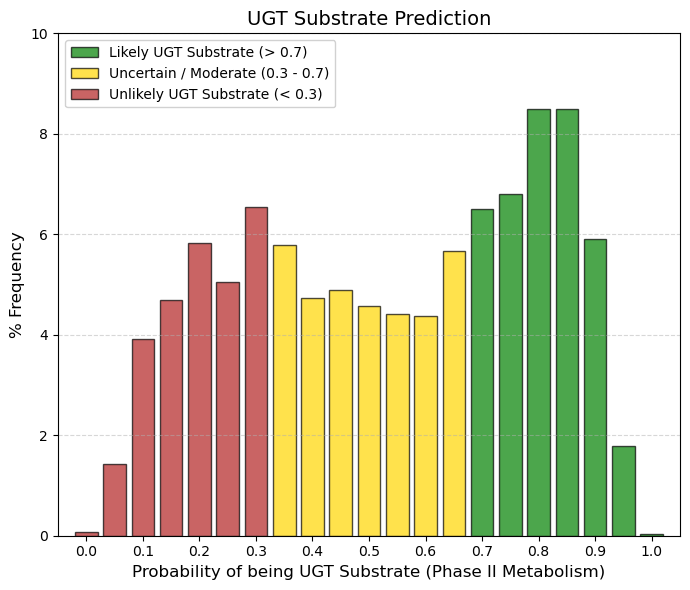

UGT substrate: UGT enzyme substrate predictor.

UDP-glucuronosyltransferases (UGTs) have gained increasing attention as they play important roles in the phase II metabolism of drugs.

🟢 GREEN, > 0.7, Probable UGT Substrate. This is where ~31% of the data is concentrated at the peak on the right. These are drugs that the body eliminates by attaching sugar (glucuronic acid) to them. It is a safe and common route of elimination.

🟡 YELLOW, 0.3 to 0.7, Mixed Zone. The bulk of the population (~51%) is in this central plateau. The model is inconclusive or the drug has moderate conjugation.

🔴 RED, < 0.3, Not UGT Substrate. Represents ~18% of the data (left side). These drugs are eliminated by other routes (excreted unchanged in urine or metabolized exclusively by CYP enzymes).

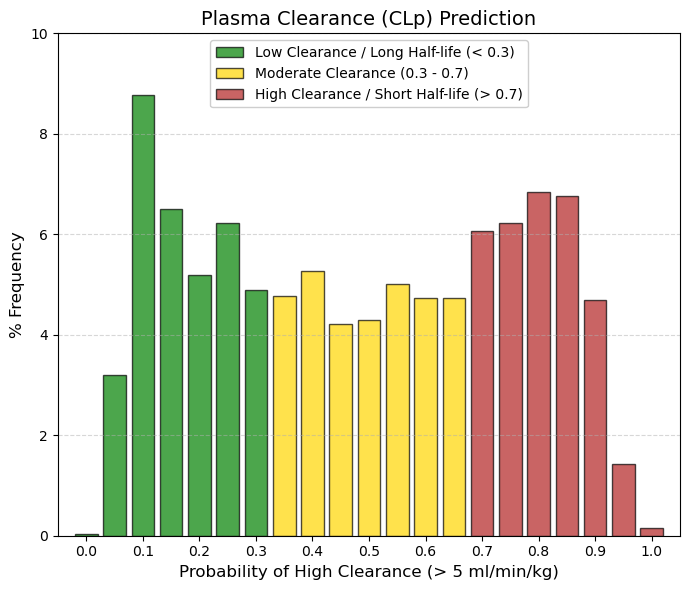

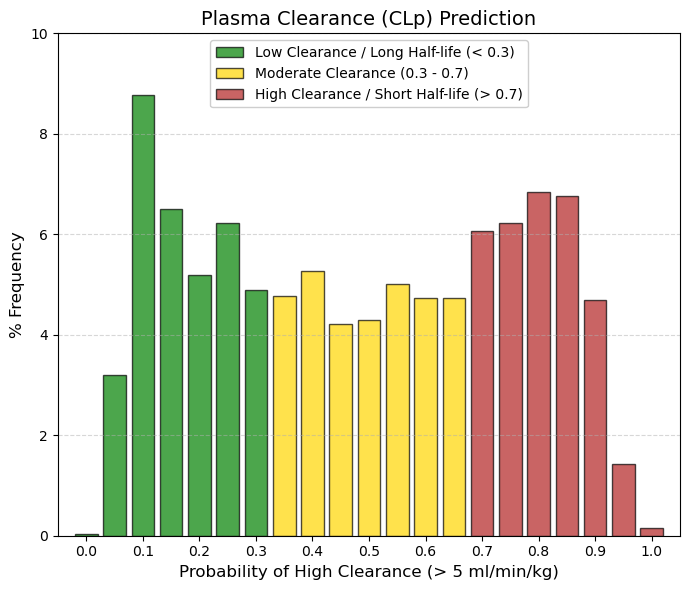

CLp: Plasma clearance, the sum of hepatic, renal, and other clearance routes.

🟢 GREEN, < 0.3, Low Clearance (< 5 mL/min/kg). This is where ~24% of drugs fall (left peak). They are ideal for once-daily dosing or long half-life. The body takes a long time to get rid of them.

🟡 YELLOW, 0.3 to 0.7, Moderate Clearance. The largest group (~40%) falls here. The drug is eliminated at a steady rate. It will likely require dosing every 8-12 hours.

🔴 RED, > 0.7, High Clearance (> 5 mL/min/kg). This represents a large block (~36%). These are drugs that the body eliminates efficiently. It is not “bad” (it prevents toxic accumulation), but it requires frequent doses or extended-release formulations.
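The link between clearance and dosing frequency can be illustrated with the standard one-compartment relation t½ = ln 2 × Vd / CL. The sketch below is an assumption-laden illustration, not part of the ADMETsar output: it takes a volume of distribution in L/kg and a clearance in mL/min/kg (the unit used for the 5 mL/min/kg cut-off above), and the function name and example values are hypothetical.

```python
import math


def half_life_hours(vd_l_per_kg: float, cl_ml_min_kg: float) -> float:
    """One-compartment estimate: t1/2 = ln(2) * Vd / CL, returned in hours."""
    cl_l_per_h_kg = cl_ml_min_kg * 60.0 / 1000.0  # mL/min/kg -> L/h/kg
    return math.log(2) * vd_l_per_kg / cl_l_per_h_kg


# Hypothetical drug: Vd = 1 L/kg, CL = 5 mL/min/kg.
print(f"{half_life_hours(1.0, 5.0):.2f} h")
```

For the same volume of distribution, halving the clearance doubles the half-life, which is why low-clearance drugs suit once-daily dosing.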

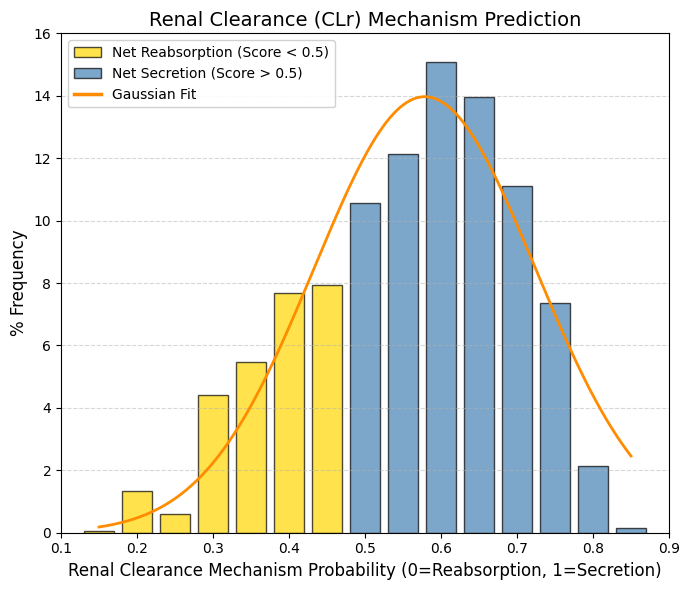

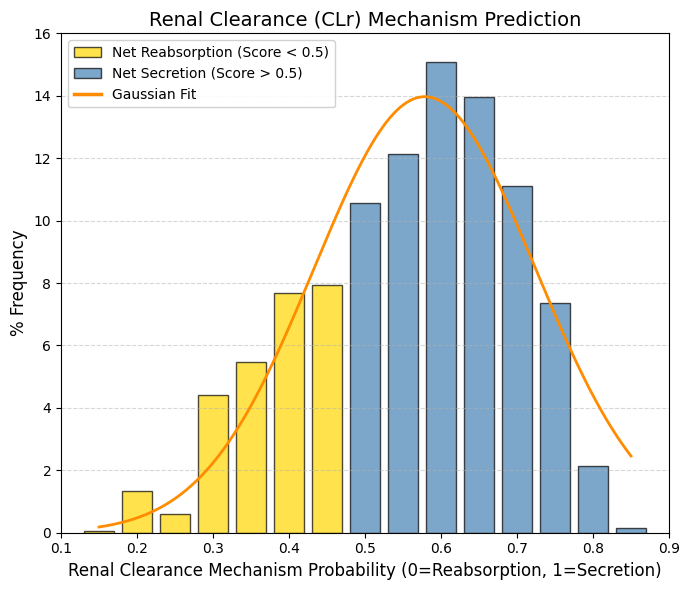

CLr: Renal Clearance.

🟡 Net Reabsorption, < 0.5, The drug returns to the bloodstream. This represents the left tail. These are drugs that the body tries to retain. They tend to have longer half-lives unless they are metabolized by the liver.

🔵 Net secretion, > 0.5, The drug is actively expelled. Represents the majority of the bell curve (including the mean). The renal transport system (OAT/OCT) recognizes the drug and eliminates it.

The observed bias toward active secretion (Score > 0.5) suggests that successful drugs are often substrates of specialized renal transport systems. As described by Giacomini et al. (2010), the proximal tubule functions as an organ of active elimination. Specifically, organic anions are cleared by the OAT family (Nigam et al., 2015), whereas cations exploit the electrochemical gradient via OCT transporters (Koepsell, 2020). This evolutionary machinery enables efficient clearance of xenobiotics, thereby reducing systemic toxicity, but it also introduces the risk of drug-drug interactions due to competition for shared transporters.

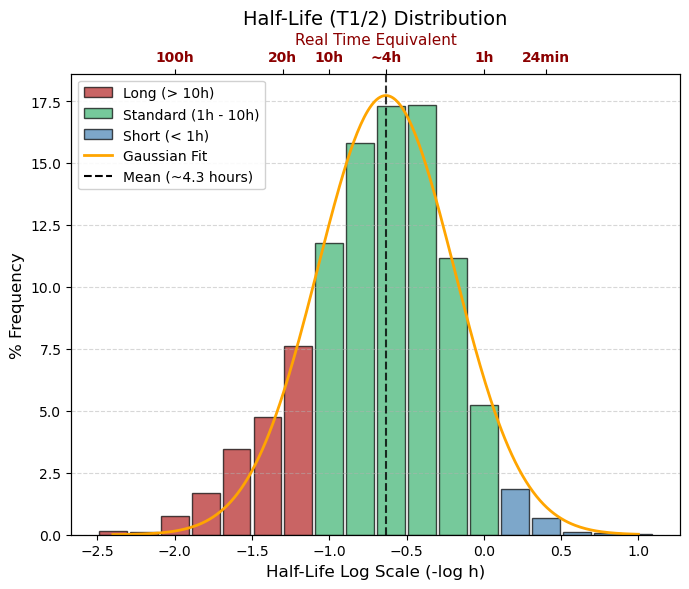

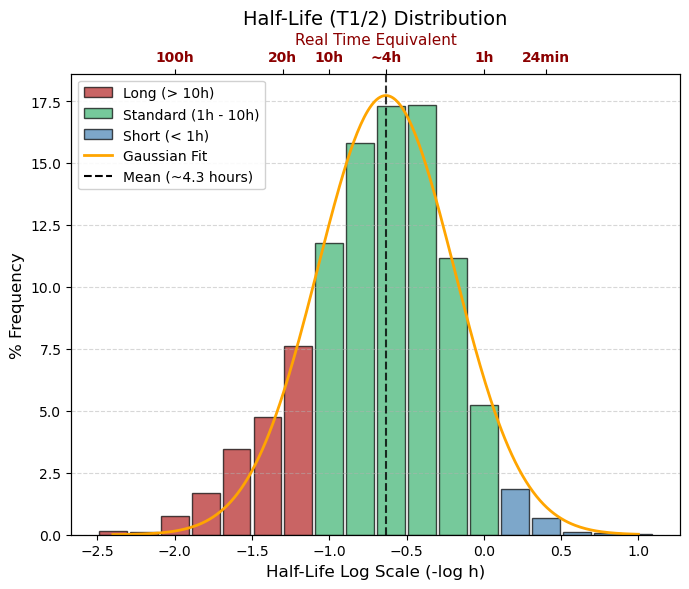

T1/2 (=T50): -log10 (hour)

🔵 Logarithmic range > 0, real time < 1 hour, Ultra-Short, (~7% of data). Eliminated very quickly. Typical of anesthetics or emergency drugs that must disappear quickly.

🟢 Logarithmic range -1.0 to 0, real time 1 to 10 hours, Standard (Average), The Vast Majority. Ideal for doses 3 or 4 times a day (TID/QID). This is where the peak of the graph falls (4 hours).

🔴 Logarithmic range < -1.0, real time > 10 hours, Long Duration, (~30% of data). Ideal for once a day (QD). If it is very negative (e.g., -2.0 = 100 hours), there is a risk of accumulation.
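Because T1/2 is reported as -log10(hours), more negative values mean longer half-lives. The conversion in both directions can be sketched as follows; the function names are hypothetical, and the same conversion applies to any other parameter reported on the same -log10(hour) scale.

```python
import math


def hours_from_neg_log(value: float) -> float:
    """Convert a -log10(hours) value to hours."""
    return 10.0 ** (-value)


def neg_log_from_hours(hours: float) -> float:
    """Convert a time in hours to the -log10(hours) scale."""
    return -math.log10(hours)


for value in (0.0, -1.0, -2.0):
    print(f"{value:+.1f} -> {hours_from_neg_log(value):.0f} h")

# The 4-hour peak mentioned above sits at about -0.60 on the log scale.
print(f"4 h -> {neg_log_from_hours(4.0):.2f}")
```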

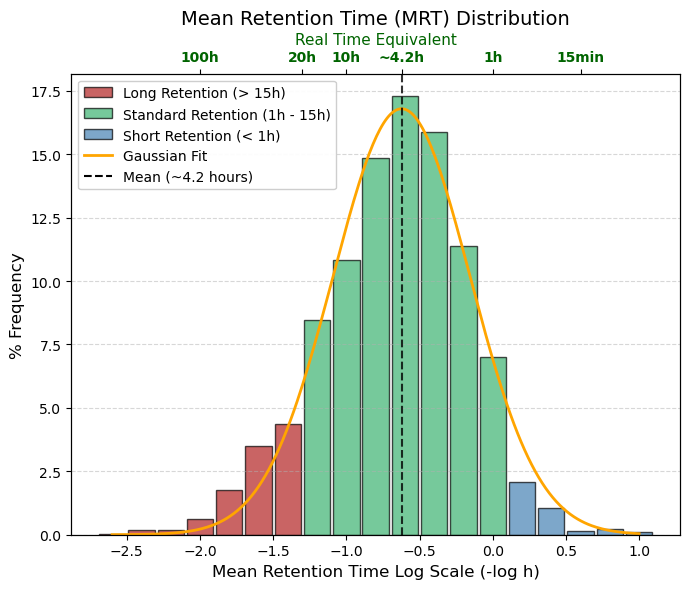

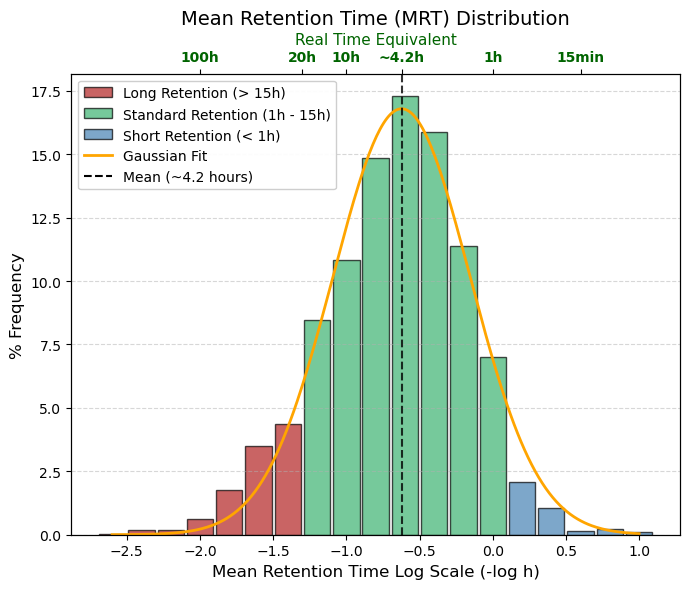

MRT: -log10 (hour), Mean Retention Time

🔵 Rapid elimination, Logarithmic range > 0, Estimated time in hours < 1 hour, Very short-acting drugs. Useful for acute effects, but inconvenient for chronic treatments.

🟢 Standard (Optimal Zone), Logarithmic range -1.2 to 0, Estimated time in hours 1 to 15 hours, The bulk of the bell curve. Allows for convenient dosing (every 8, 12, or 24 hours). The body has time to act on the drug and eliminate it before the next dose.

🔴 Long-acting, Logarithmic range < -1.2, Estimated time in hours > 15 hours, Drugs that “stick” to tissues or recirculate. They have a risk of accumulation if taken too often, but are excellent for single daily doses.

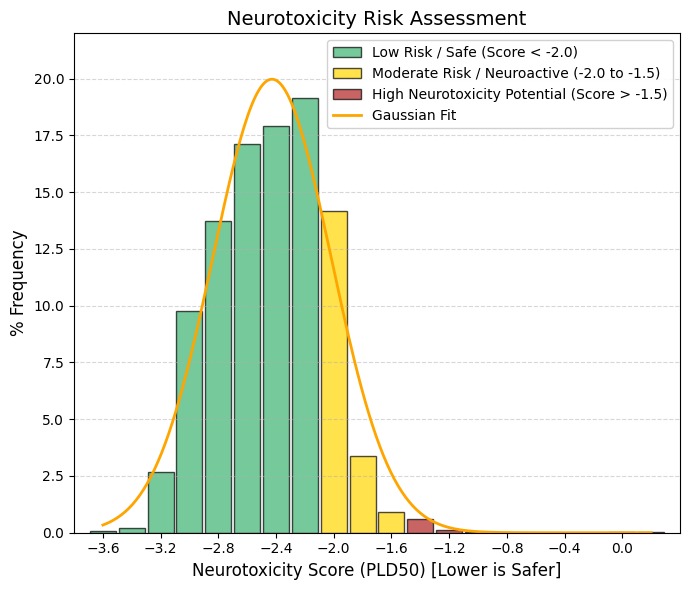

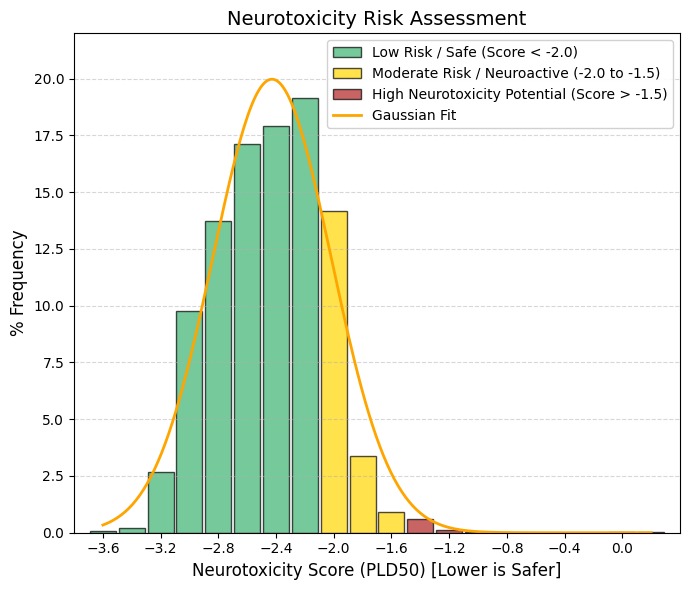

Neurotoxicity.

Neurotoxicity is one of the main reasons for drug discontinuation and there is a need for risk assessment of neurotoxicity of drugs or compounds. Drugs cause neurotoxicity mainly by affecting mitochondrial respiration, immune-mediated responses and inhibiting neuronal activity. Drugs with neurotoxicity can be broadly categorized into three groups, including antibacterials, antifungals, and antidepressants.

🟢 GREEN, < -2.0, Safe Zone (Low Risk). The vast majority (~85%) are concentrated here. The drug is very safe for nerve tissue at therapeutic doses.

🟡 YELLOW, -2.0 to -1.5, Moderate Risk / Neuroactive. A transition zone. Drugs that may have mild side effects on the nervous system (dizziness, drowsiness).

🔴 RED, > -1.5, High Neurotoxic Potential. There are very few cases (the flat right tail). These are generally chemotherapy drugs or powerful psychiatric drugs where the effect on neurons is part of the mechanism or an accepted risk.

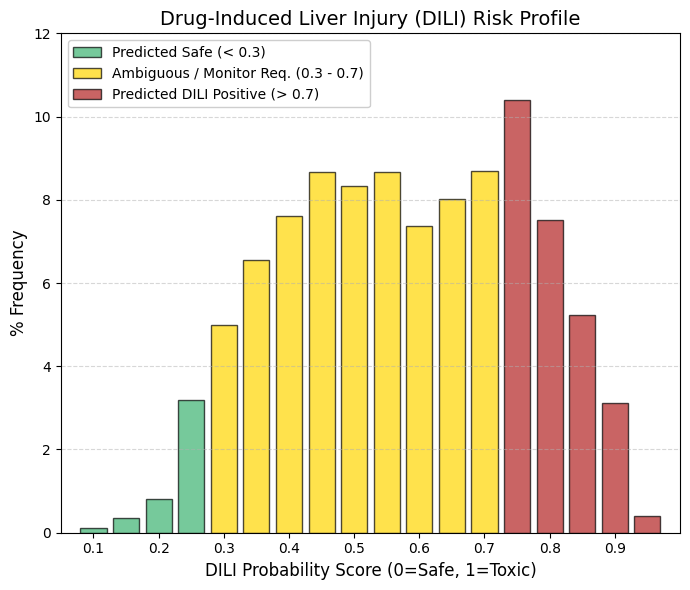

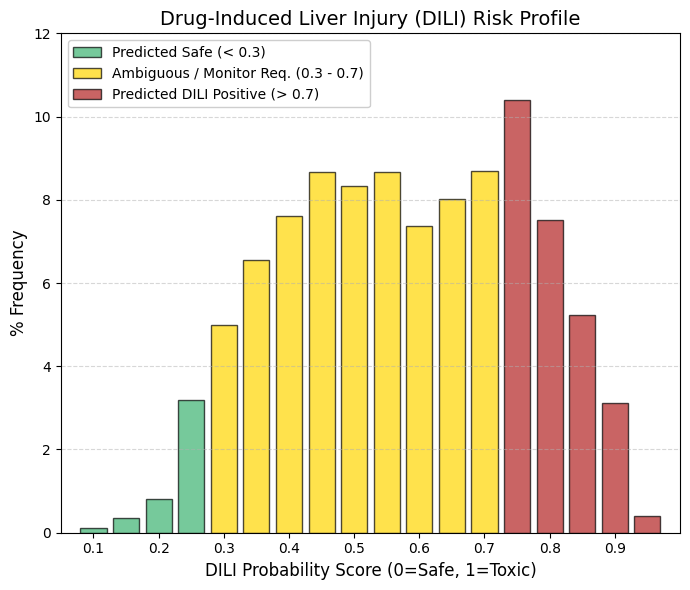

DILI: Drug-induced hepatotoxicity, also known as drug-induced liver injury.

🟡 The “Gray Zone” (Yellow Bar): The vast majority of drugs cluster in the central range (0.4-0.7). This indicates that hepatotoxicity is rarely a strictly “black-or-white” phenomenon. Instead, it depends on dose, patient genetics, and metabolic pathways.

🔴 The High-Risk Peak: The presence of a relatively high frequency (nearly 10%) around 0.75 (red zone) confirms that hepatic risk is often the “price to pay” for many effective therapies. A drug is not necessarily discarded solely because it shows a DILI signal; rather, the risk is managed, for example by recommending that clinicians monitor patient transaminase levels.

Whereas neurotoxicity and cardiotoxicity (hERG liability) act as stringent filters, where a positive signal often leads to termination during preclinical development, hepatotoxicity is more frequently regarded as a manageable risk that the pharmaceutical industry is willing to accept.

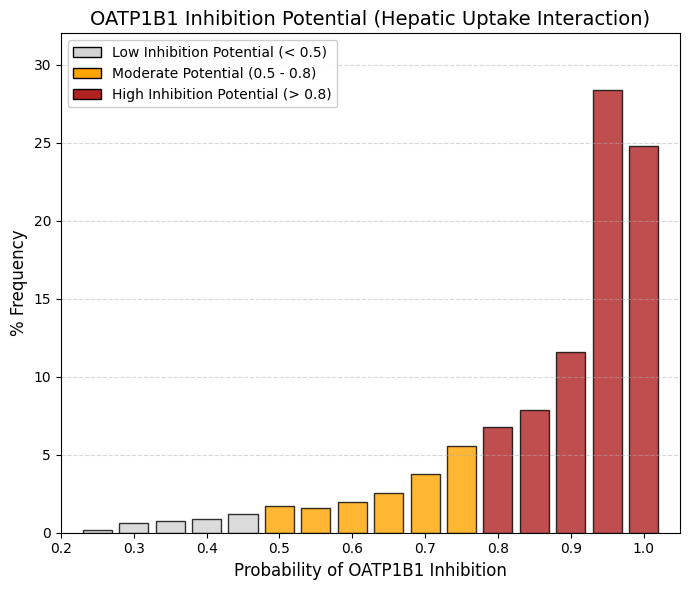

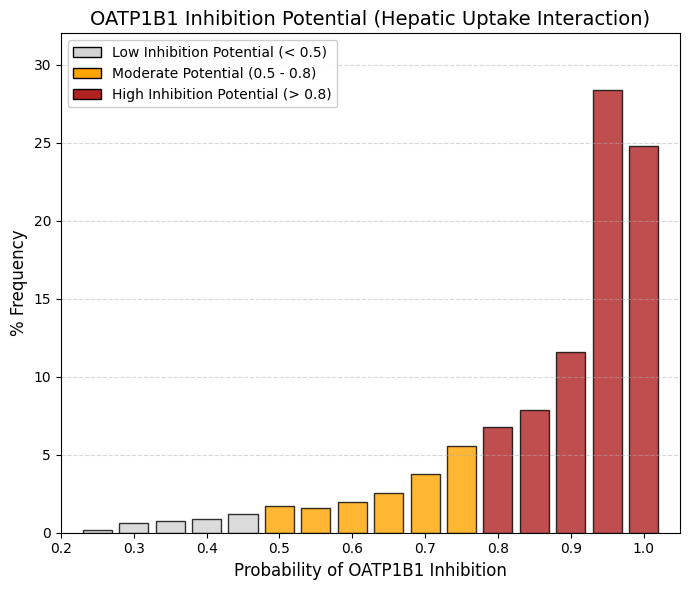

OATP1B1 inhibitor: Organic anion transporting polypeptide 1B1 inhibitor predictor.

The organic anion transporting polypeptide OATP1B1 is a membrane transporter that facilitates hepatic uptake of drugs, enabling their subsequent conjugation and biliary excretion, a critical step in drug elimination in the human body.

“If it enters, it interferes”: For a drug to be eliminated by the liver, it often must be transported by OATP1B1. If the drug binds to the transporter to enter, it automatically acts as a “competitive inhibitor” for other drugs.

The Successful Drug Profile: As we saw in your previous data (MW, LogP), approved drugs tend to be somewhat lipophilic and moderate in size. Those are exactly the chemical characteristics recognized by OATP1B1.

Risk of Interaction (DDI): This graph clearly shows that most drugs have the potential to cause interactions. If you take two drugs from this red zone (e.g., a statin and gemfibrozil), they will compete for OATP1B1; the one that is displaced (here the statin) accumulates in the blood and can cause muscle toxicity.

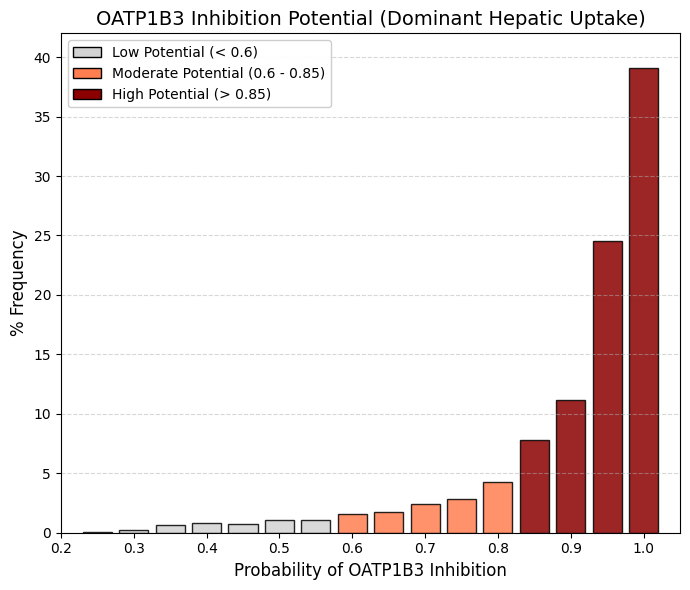

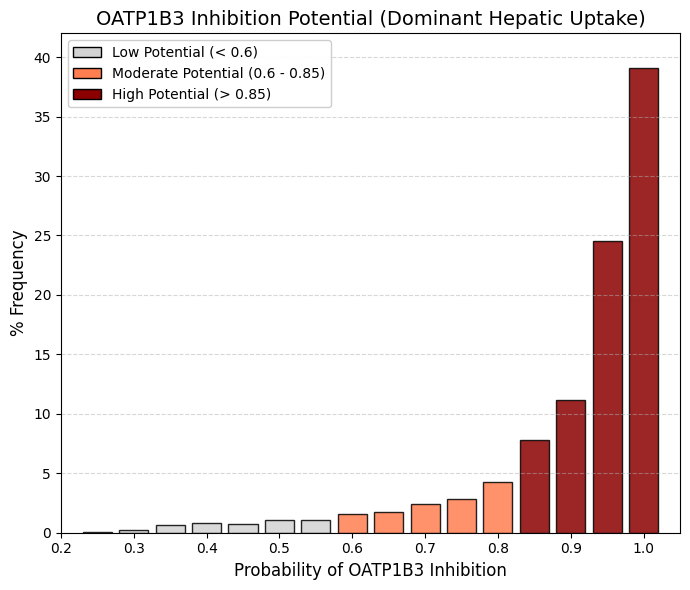

OATP1B3 inhibitor: Organic anion transporting polypeptide 1B3 inhibitor predictor.

OATP1B1 and OATP1B3 constitute the principal “gateways” for drug entry into the liver and are localized to the sinusoidal (blood-facing) membrane of hepatocytes. Although they share a high degree of sequence identity, OATP1B3 exhibits a broader substrate profile and preferentially accommodates larger and more lipophilic molecules, such as digoxin, paclitaxel, and certain peptide-based drugs, compared with OATP1B1.

The high proportion of drugs testing positive (~75%) can be rationalized mechanistically: for a compound to undergo hepatic elimination, whether via cytochrome P450-mediated metabolism or biliary excretion, it must first be taken up into hepatocytes. ADMETsar predicts transporter “inhibition,” which at the molecular level reflects binding affinity to the transporter. Consequently, compounds that bind efficiently to gain hepatic entry may be classified as strong competitors and thus appear as inhibitors in in silico predictions.

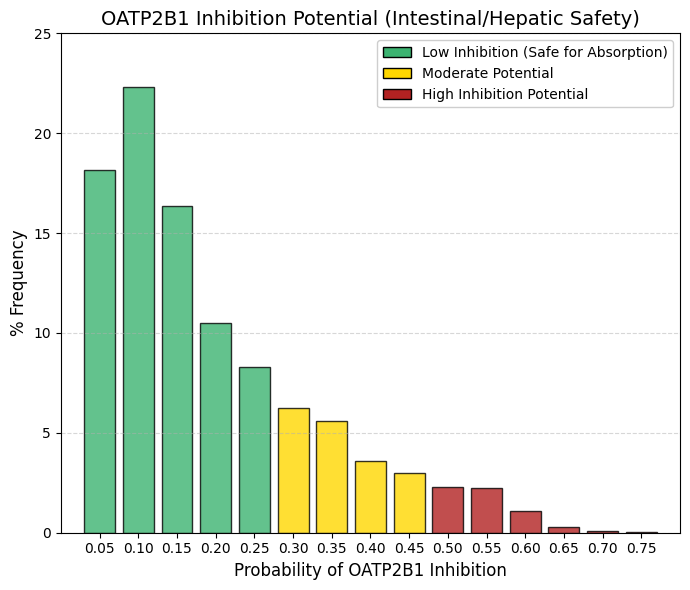

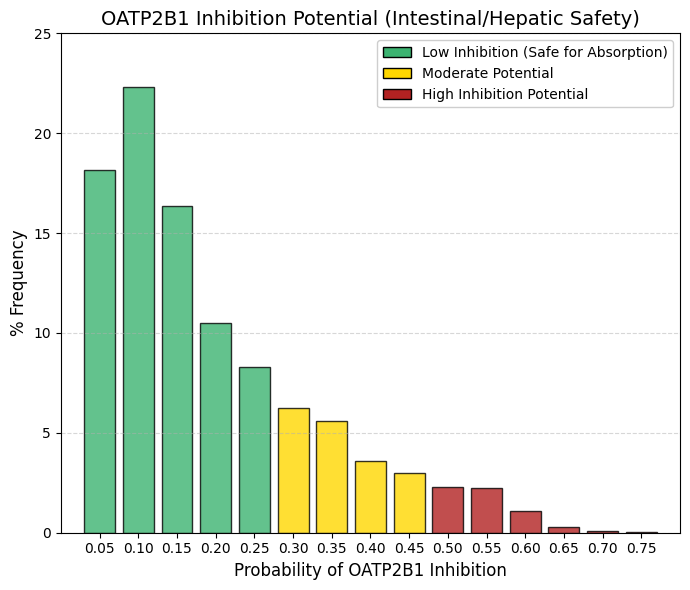

OATP2B1 inhibitor: Organic anion transporting polypeptide 2B1 inhibitor predictor.

Intestinal OATP2B1 facilitates the uptake of drugs and dietary nutrients from the intestinal lumen into the systemic circulation. The design of compounds that strongly inhibit OATP2B1 carries the risk of impairing nutrient absorption and precipitating complex gastrointestinal drug-drug interactions, thereby altering the oral bioavailability of co-administered therapies.

In contrast to the liver, where hepatic uptake is desirable to enable metabolism and elimination, intestinal transport processes must remain unobstructed to ensure efficient absorption. Accordingly, successful orally administered drugs tend to avoid significant inhibition of OATP2B1, thereby preserving predictable pharmacokinetics and minimizing adverse gastrointestinal effects.

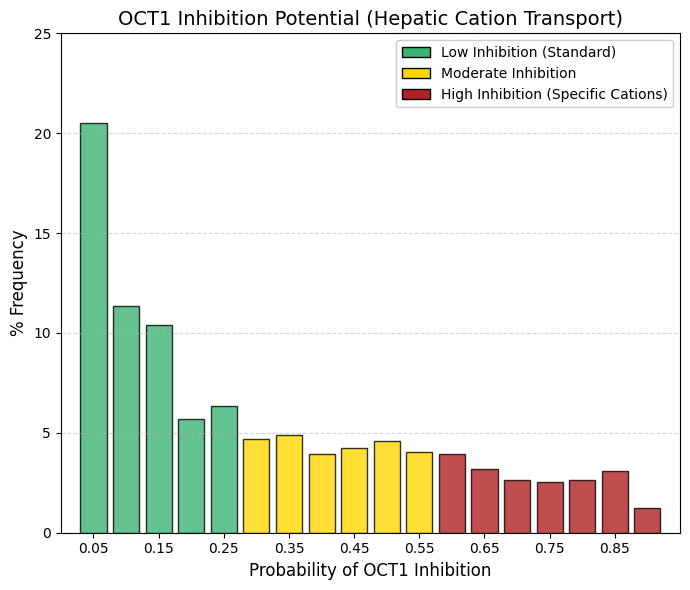

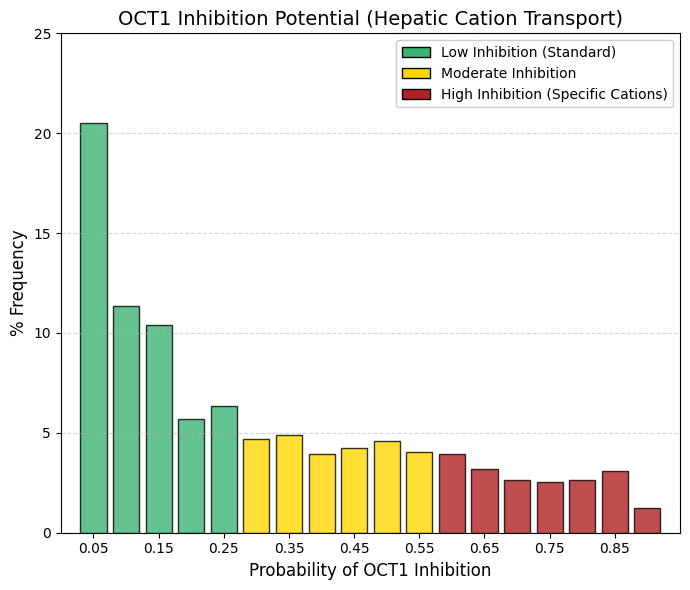

OCT1 inhibitor: Organic cation transporter 1 inhibitor predictor.

Whereas OATP1B1/1B3 (anion transporters) show a pronounced accumulation toward the high-interaction region, OCT1 (a cation transporter) is heavily skewed toward the low-interaction end of the distribution. Approximately 42% of drugs exhibit a very low probability (< 0.15) of inhibiting OCT1. Although the distribution displays a long, shallow tail, it lacks a distinct high-interaction peak. This pattern highlights the chemical selectivity of hepatic uptake mechanisms. OCT1 primarily transports small, often hydrophilic, positively charged organic cations, such as metformin and morphine. In contrast, as demonstrated in the LogP analysis, most successful drugs are predominantly lipophilic. Such compounds can access hepatocytes via passive diffusion or OATP-mediated transport and therefore neither rely on nor substantially inhibit OCT1 to the same extent as anionic drugs.

As a consequence of reduced competition at this transporter, the statistical risk of OCT1-mediated drug-drug interactions is lower than that associated with OATP1B1-mediated interactions.

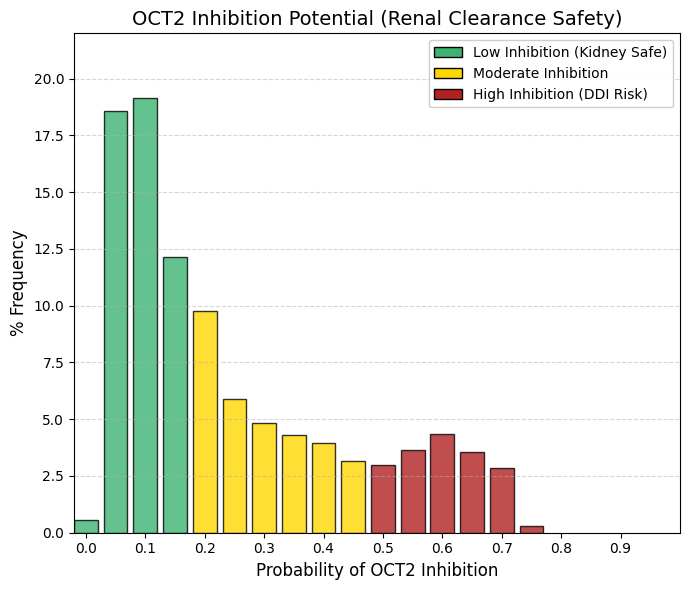

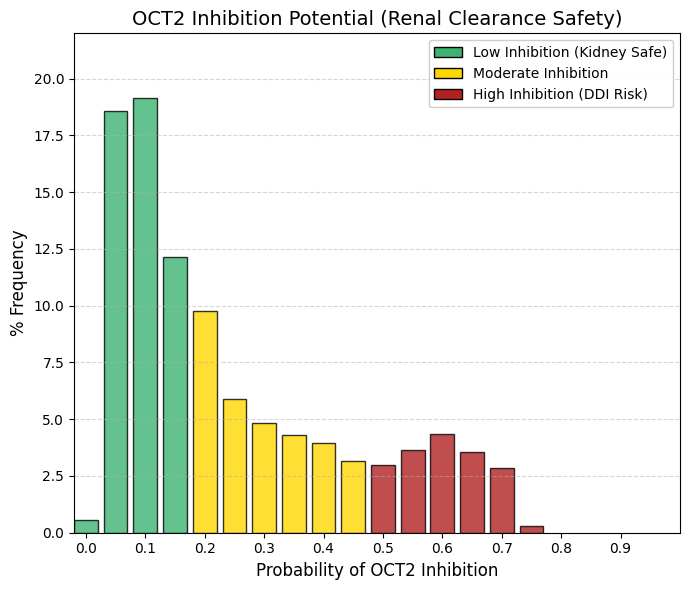

OCT2 inhibitor: Organic cation transporter 2 inhibitor predictor.

OCT2 is expressed on the basolateral membrane of proximal renal tubule cells, where it mediates the uptake of organic cations from the blood into tubular epithelial cells, constituting the primary renal secretory pathway for cationic drugs and endogenous toxins. Inhibition of OCT2 impairs renal clearance by preventing the efficient elimination of co-administered substrates.

For example, cimetidine is a known OCT2 inhibitor and reduces the renal elimination of drugs such as metformin or procainamide, thereby increasing systemic exposure and the risk of toxicity. Consequently, pharmaceutical development programs preferentially advance compounds that do not significantly inhibit this critical transporter, in order to minimize the potential for cumulative renal toxicity and clinically relevant drug-drug interactions.

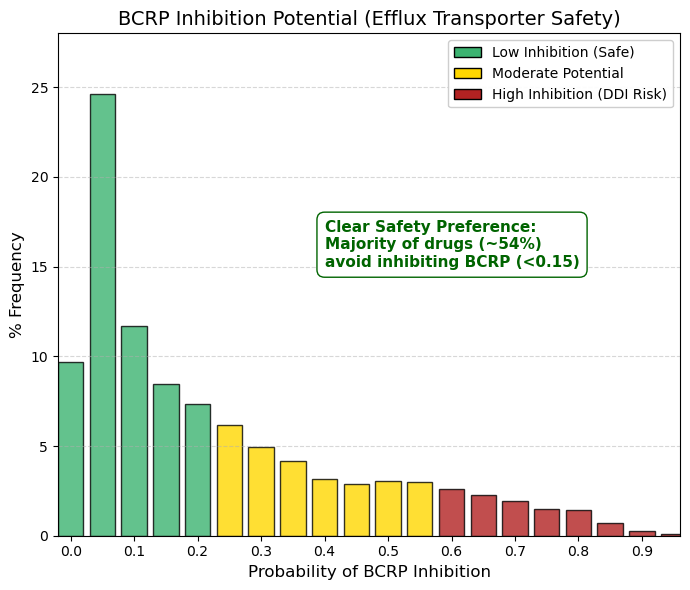

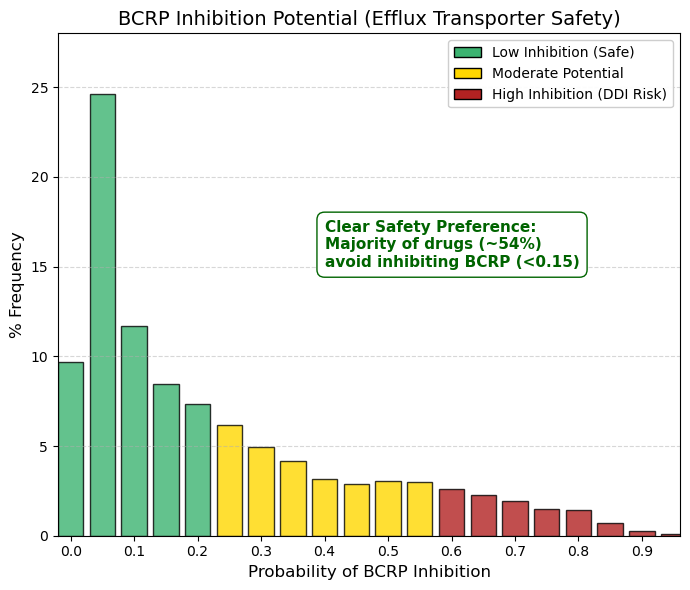

BCRP inhibitor: Breast cancer resistance protein inhibitor predictor.

As observed for renal organic cation transporters (OCTs) and intestinal absorption via OATP2B1, the distribution here is strongly right-skewed, with most of the mass concentrated at the low-probability end. The primary peak occurs at 0.05 (24.6%), and cumulative values from 0 to 0.15 account for approximately 54% of the dataset. Thus, more than half of approved drugs exhibit no or only a very low propensity to inhibit the breast cancer resistance protein (BCRP).

BCRP, encoded by the ABCG2 gene, functions as an ATP-dependent efflux transporter that actively expels substrates from cells. It plays a critical protective role at key biological barriers, including the blood-brain barrier, testes, and placenta, by limiting tissue exposure to xenobiotics. Inhibition of BCRP can lead to the accumulation of co-administered drugs (such as statins or chemotherapeutic agents) to potentially toxic levels.

Accordingly, these data indicate that pharmaceutical discovery programs deliberately avoid designing compounds that inhibit BCRP. In contrast to hepatic OATPs, where transporter interaction is frequently tolerated or even desirable, BCRP inhibition is generally regarded as a safety liability.
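The cumulative figures quoted in this section (for example, roughly 54% of drugs in the 0 to 0.15 range) can be reproduced from a list of predicted probabilities. The sketch below is only an illustration and not the script used to generate the plots: the helper names are hypothetical, the convention of assigning each value to the nearest multiple of 0.05 is an assumption about how the bins are labelled, and the input values are invented.

```python
from collections import Counter

BIN_WIDTH = 0.05  # assumed bin spacing, matching the 0, 0.05, 0.10, ... labels in the text


def bin_frequencies(probabilities, bin_width=BIN_WIDTH):
    """Return {bin_label: percentage}, assigning each value to the nearest bin label."""
    if not probabilities:
        raise ValueError("probabilities must not be empty")
    counts = Counter(round(p / bin_width) for p in probabilities)
    total = len(probabilities)
    return {round(k * bin_width, 2): 100.0 * n / total for k, n in sorted(counts.items())}


def cumulative_share(frequencies, upper_label):
    """Sum the percentages of all bins whose label is <= upper_label."""
    return sum(pct for label, pct in frequencies.items() if label <= upper_label + 1e-9)


# Hypothetical predicted probabilities for ten compounds (illustrative only).
example = [0.01, 0.04, 0.06, 0.11, 0.14, 0.30, 0.52, 0.71, 0.88, 0.93]
freqs = bin_frequencies(example)
low_share = cumulative_share(freqs, 0.15)
print(f"Share of compounds in bins 0 to 0.15: {low_share:.1f}%")
```

With real ADMETsar output, `example` would be replaced by the column of predicted BCRP inhibition probabilities.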

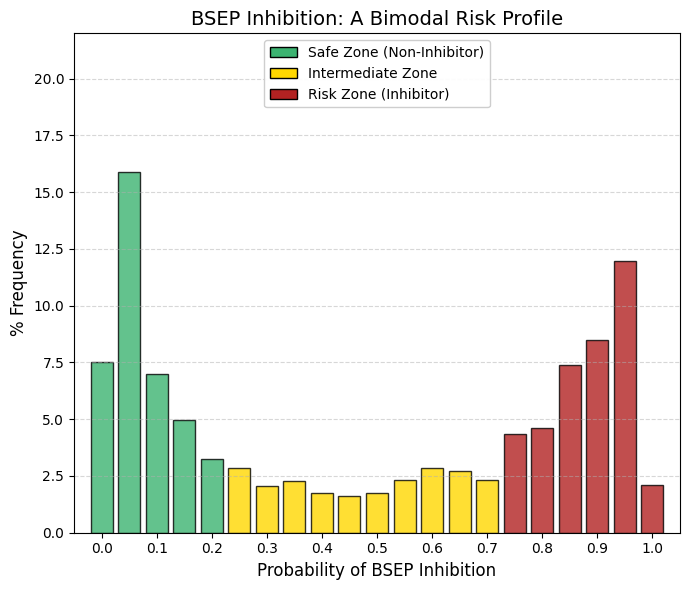

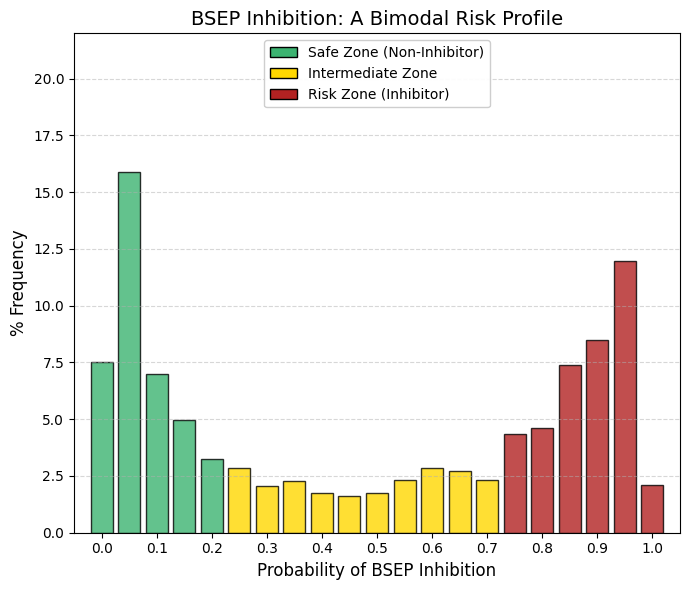

BSEP inhibitor: Bile salt export pump inhibitor predictor.

For pure toxicity endpoints (such as hERG liability or AMES mutagenicity), approved drugs overwhelmingly cluster at the low-risk (left) end of the distribution, whereas transport-related parameters such as the OATPs tend to accumulate at the high-interaction (right) end. BSEP, in contrast, displays a strikingly bimodal pattern among approved drugs.

The low-risk group (left): A pronounced peak is observed at approximately 0.05, indicating that roughly 30% of approved drugs exhibit minimal or no interaction with this critical transporter. The “valley of death” (center): Very few compounds show intermediate levels of BSEP interaction, suggesting limited pharmacological space for partial inhibition. The high-risk group (right): A second, substantial peak appears between 0.85 and 0.95. When considering the high-risk region (>0.75), approximately 35-40% of approved drugs demonstrate a strong likelihood of BSEP inhibition.

BSEP (bile salt export pump) is the primary transporter responsible for the canalicular efflux of bile salts from hepatocytes into bile. Its inhibition leads to intracellular bile acid accumulation and can precipitate cholestatic liver injury. Structurally, BSEP possesses a large, lipophilic substrate-binding pocket. While lipophilicity is a common and often necessary feature of successful drugs to enable cellular entry, this same property predisposes many compounds to unintended binding and inhibition of BSEP.

Collectively, these data identify BSEP inhibition as an “Achilles’ heel” of modern pharmacology. Numerous clinically successful agents (including statins, antidiabetic drugs, and certain antibiotics) are known BSEP inhibitors and therefore require hepatic monitoring in clinical use. Although drug discovery efforts attempt to favor compounds in the low-interaction regime, complete avoidance of BSEP inhibition is frequently incompatible with maintaining therapeutic efficacy, as reflected by the persistent high-risk peak.
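The three regions of the bimodal BSEP profile can be made explicit by assigning each predicted probability to a zone and tabulating the shares. This is a minimal sketch under stated assumptions: only the >0.75 high-risk boundary comes from the text, while the 0.25 low-risk boundary, the function names, and the example values are hypothetical.

```python
from collections import Counter

HIGH_RISK_CUTOFF = 0.75  # high-risk boundary quoted in the text
LOW_RISK_CUTOFF = 0.25   # assumption: the text gives no explicit upper bound for the low-risk group


def bsep_zone(probability):
    """Assign a predicted BSEP inhibition probability to one of three zones."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must lie in [0, 1]")
    if probability > HIGH_RISK_CUTOFF:
        return "high"
    if probability < LOW_RISK_CUTOFF:
        return "low"
    return "intermediate"


def zone_shares(probabilities):
    """Return the percentage of compounds falling into each zone."""
    if not probabilities:
        raise ValueError("probabilities must not be empty")
    counts = Counter(bsep_zone(p) for p in probabilities)
    total = len(probabilities)
    return {zone: 100.0 * counts.get(zone, 0) / total
            for zone in ("low", "intermediate", "high")}


# Hypothetical values chosen to mimic a bimodal shape (illustrative only).
example = [0.03, 0.05, 0.08, 0.45, 0.86, 0.90, 0.92, 0.95]
shares = zone_shares(example)
print(shares)
```

A sparsely populated "intermediate" zone alongside well-populated "low" and "high" zones is the signature of the bimodal pattern described above.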

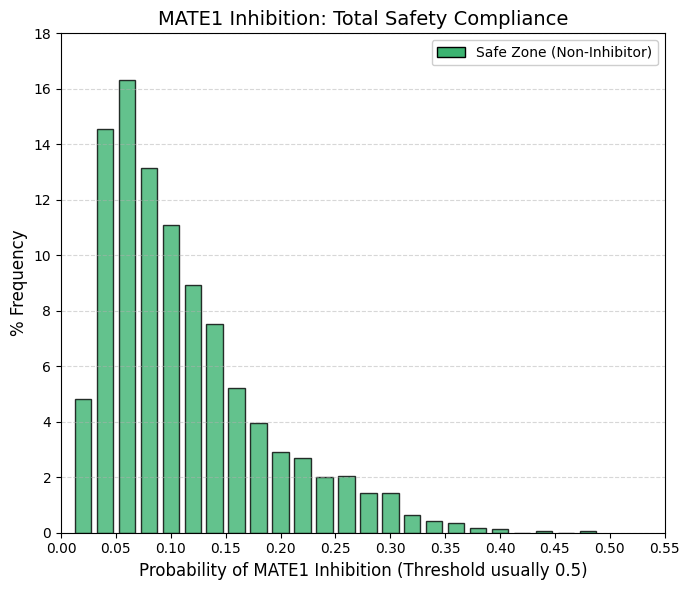

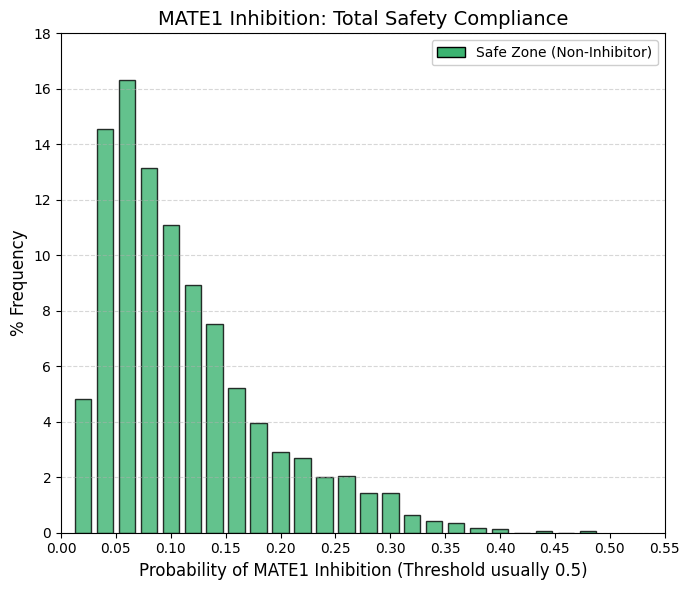

MATE1 inhibitor: Multidrug and toxin extrusion protein 1 inhibitor predictor.

The distribution terminates at 0.48. Given that probabilistic models such as ADMETsar typically define transporter inhibition using a threshold of 0.5 (50%), these data indicate that virtually no approved drug is predicted to be an inhibitor of MATE1. MATE1 is the functional counterpart of OCT2 in renal drug handling.

OCT2 (uptake) mediates the transport of cationic drugs from the bloodstream into proximal tubular cells, whereas MATE1 (efflux) exports these compounds from the cells into the tubular lumen for urinary excretion. When a drug is efficiently taken up via OCT2 but inhibits MATE1, intracellular efflux is impaired, leading to accumulation within renal epithelial cells, elevated intracellular concentrations, and subsequent nephrotoxicity.

Consistent with this mechanistic liability, pharmaceutical development programs have learned to stringently avoid MATE1 inhibition. As a result, MATE1 acts as an almost absolute safety filter: compounds that significantly block this efflux pathway are rarely, if ever, advanced to approval due to unacceptable renal toxicity risk.

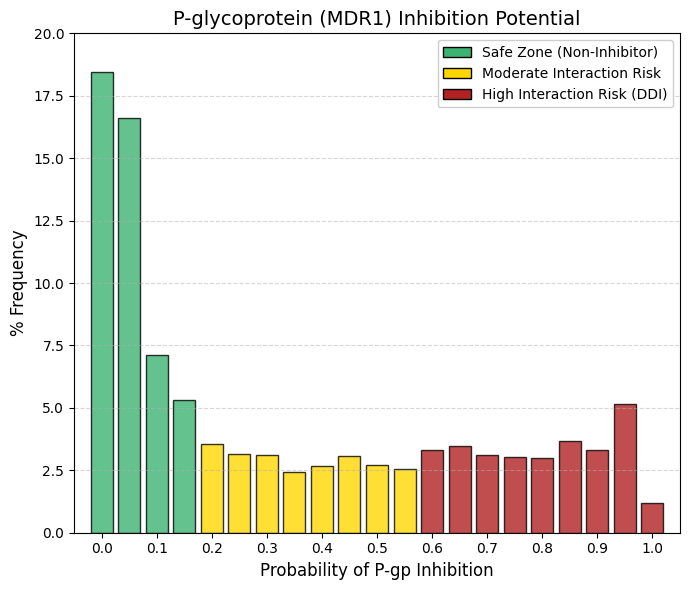

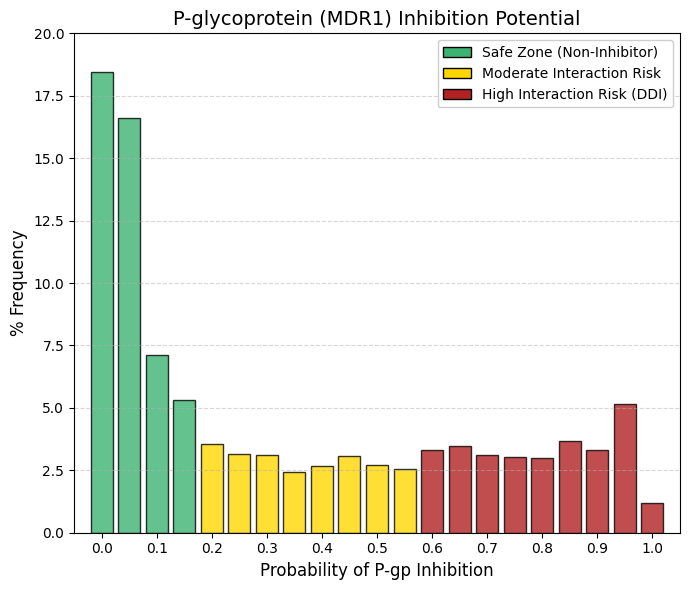

Pgp inhibitor: P-glycoprotein inhibitor predictor.

Similar to other efflux transporters such as BCRP and MATE1, P-glycoprotein (P-gp) exhibits a right-skewed, approximately exponential distribution. However, a key distinction is the presence of a heavier and more persistent high-interaction tail compared with MATE1.

Safety zone (≤ 0.1): Aggregating the bins at 0, 0.05, and 0.1 accounts for approximately 42% of approved drugs, indicating that more than four in ten marketed compounds exhibit minimal or no P-gp inhibition.

Persistent tail: In contrast to MATE1, whose distribution rapidly approaches zero, P-gp displays a relatively constant frequency (≈2.5-3.5%) across a broad interaction range (0.2-0.9). This pattern reflects the fact that P-gp inhibition is a frequent and often unavoidable property of large, lipophilic molecules.

P-gp is highly expressed at the blood-brain barrier, where it actively limits neuronal exposure by exporting xenobiotics from the brain. Inhibition of P-gp compromises this protective function, effectively removing a critical barrier to central nervous system entry. More generally, when P-gp is inhibited, co-administered drugs that are P-gp substrates, such as digoxin, may accumulate to toxic concentrations. Clinically, macrolide antibiotics such as clarithromycin exemplify this risk through their inhibitory effects on P-gp, which can precipitate digoxin toxicity.

From a drug development perspective, avoidance of P-gp inhibition is preferred, as reflected by the left-hand peak of the distribution. Nevertheless, partial inhibition, represented by the extended tail, is often tolerated when the therapeutic benefit is substantial, provided that the interaction risk is well characterized and clearly communicated in clinical use.

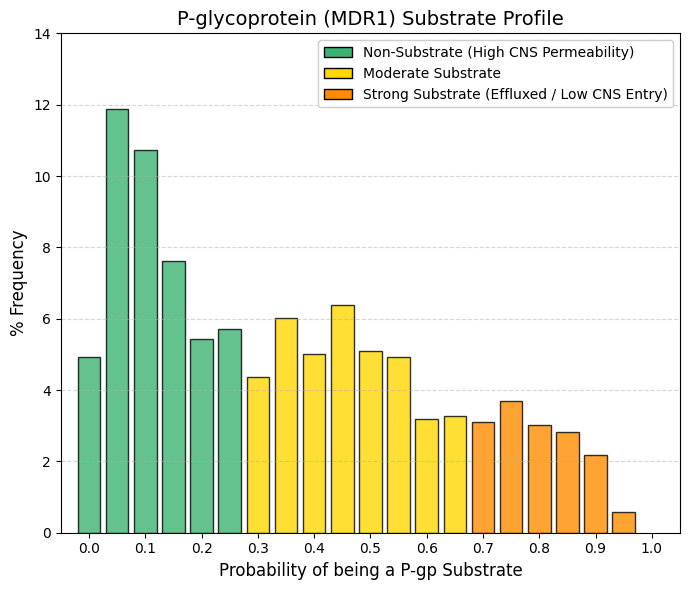

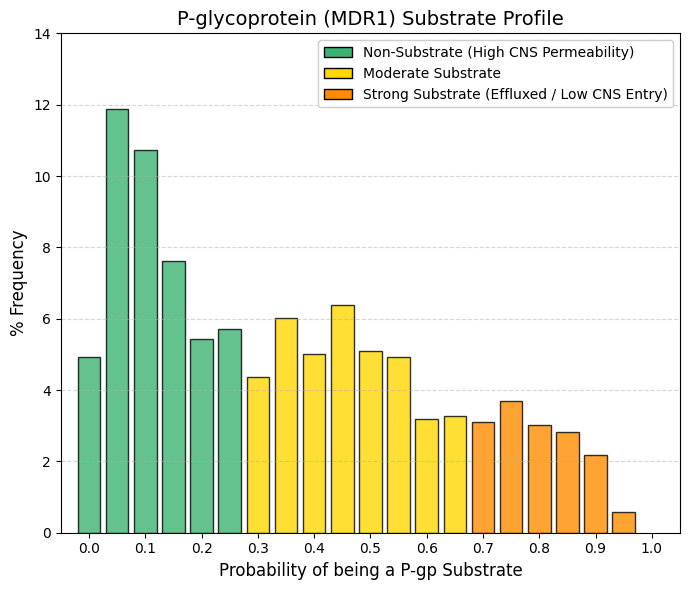

Pgp substrate: P-glycoprotein substrate predictor.

Whereas inhibition of P-glycoprotein (P-gp) primarily constitutes a toxicity risk for co-administered drugs, being a P-gp substrate directly influences the efficacy and tissue distribution of the drug itself. High-permeability group (< 0.2): Aggregation of the lowest bins accounts for approximately 35-40% of approved drugs. These compounds effectively “fly under the radar” of P-gp and are not efficiently recognized or expelled by the transporter. Central plateau (0.2-0.6): Across this range, the frequency remains relatively constant (≈5-6%), indicating that moderate P-gp substrate behavior is common and not intrinsically detrimental. The clinical relevance of P-gp substrate status is therefore context dependent.

For central nervous system (CNS) indications, such as brain infections or depression, strong P-gp substrate properties are incompatible with efficacy, as active efflux at the blood-brain barrier prevents sufficient drug accumulation in the brain. Conversely, for peripherally acting therapies, such as non-sedating antihistamines (e.g., loratadine), P-gp substrate behavior is desirable, as it limits CNS penetration and minimizes neurological side effects. At the intestinal level, P-gp reduces oral absorption by exporting substrates back into the lumen, whereas at the blood-brain barrier it serves a protective role by restricting xenobiotic entry into the brain. Accordingly, drugs intended for CNS activity must reside on the low-substrate end of the distribution, whereas peripherally acting drugs can tolerate or even benefit from higher P-gp substrate liability, thereby enhancing safety by reducing central exposure.
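The context dependence described above can be expressed as a small decision rule. The following is a hedged sketch rather than a validated classifier: the 0.2 boundary is the low-substrate cutoff mentioned in the text, the 0.6 boundary is an assumption (the upper edge of the central plateau), and the function name and returned labels are hypothetical.

```python
LOW_SUBSTRATE_CUTOFF = 0.2   # upper bound of the low-substrate group described in the text
HIGH_SUBSTRATE_CUTOFF = 0.6  # assumption: upper edge of the central plateau taken as the start of "high"


def interpret_pgp_substrate(probability, cns_target):
    """Interpret a predicted P-gp substrate probability for a CNS or peripheral drug."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must lie in [0, 1]")
    if probability < LOW_SUBSTRATE_CUTOFF:
        # Low efflux liability is compatible with either kind of indication.
        return "low efflux liability"
    if probability > HIGH_SUBSTRATE_CUTOFF:
        if cns_target:
            # Efflux at the blood-brain barrier is expected to limit brain exposure.
            return "unfavorable for CNS indication"
        # For peripheral drugs, efflux from the brain reduces central side effects.
        return "acceptable for peripheral indication"
    return "moderate, context dependent"


print(interpret_pgp_substrate(0.85, cns_target=True))
print(interpret_pgp_substrate(0.85, cns_target=False))
```

The same predicted probability therefore leads to opposite conclusions depending on whether the intended site of action is central or peripheral.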

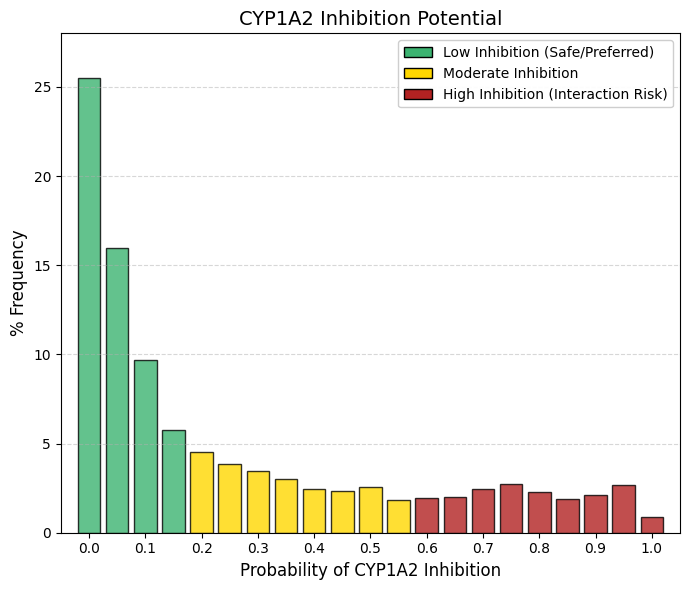

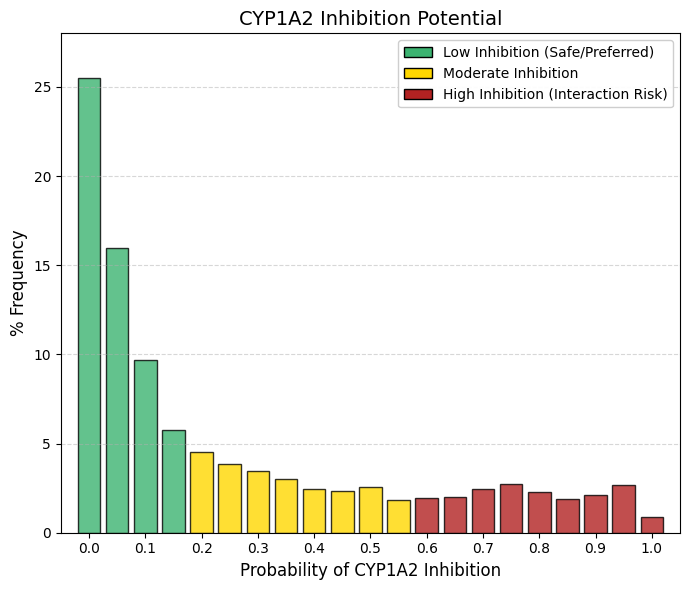

CYP1A2 inhibitor: CYP1A2 inhibitor predictor.

As observed for renal efflux transporters such as MATE1 and for stringent cardiac safety endpoints, the distribution of CYP1A2 inhibition probabilities is strongly right-skewed. The safety wall: The most prominent feature is bin 0, which accounts for 25.5% of approved drugs, indicating that one in four compounds has essentially no likelihood of inhibiting CYP1A2. When probabilities up to 0.15 are aggregated, approximately 57% of drugs fall within a low-risk inhibition zone. The remaining compounds form a long, shallow tail, with low but relatively constant frequencies (≈2-3% per bin) extending across higher probability values.

CYP1A2 is a clinically relevant hepatic cytochrome P450 enzyme, although it is less abundant than CYP3A4. Its substrates include caffeine, theophylline, melatonin, and several widely used psychotropic agents, such as clozapine and olanzapine. Inhibition of CYP1A2 can therefore impair caffeine clearance, leading to symptoms such as nervousness or tachycardia after minimal intake, and, more critically, can precipitate toxic accumulation of antipsychotic drugs.

Structurally, the active site of CYP1A2 is relatively flat and narrow, conferring a preference for planar, polycyclic aromatic substrates. In contrast, contemporary drug design increasingly favors three-dimensional, sp³-rich molecular architectures to enhance solubility and developability. As a result, modern drug candidates tend to be intrinsically mismatched to the CYP1A2 binding pocket. Consequently, CYP1A2 inhibition is minimized not only for safety considerations, but also as a natural outcome of prevailing structural design principles that yield more globular molecules with reduced affinity for this planar enzyme.

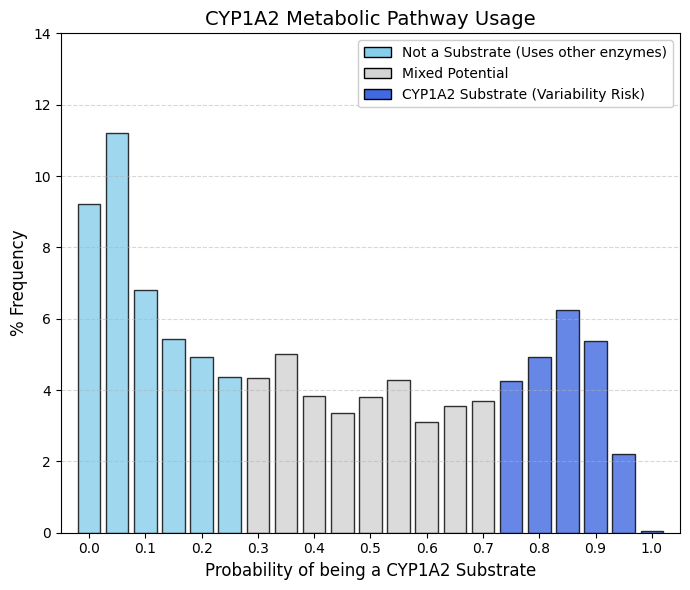

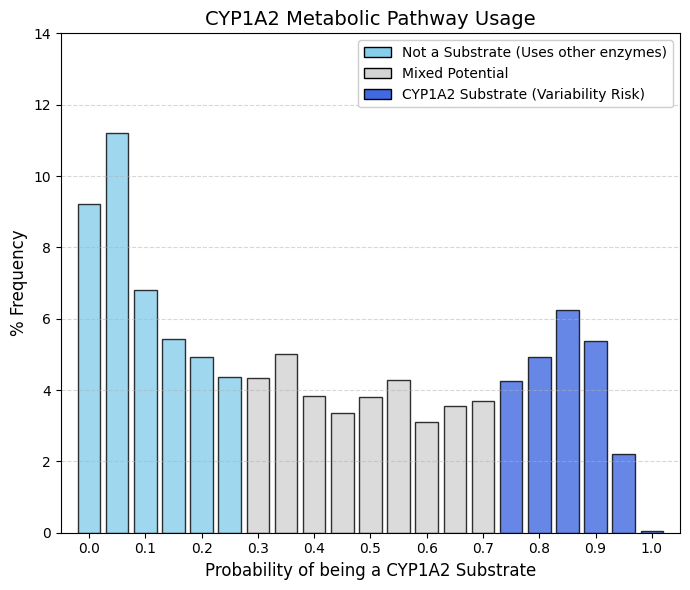

CYP1A2 substrate: CYP1A2 substrate predictor.

Whereas drug discovery efforts actively minimize CYP1A2 inhibition to avoid clinically relevant drug-drug interactions, the corresponding substrate profile is considerably more permissive and broadly distributed. Non-substrate peak (left): Approximately 32% of approved drugs fall below a probability of 0.2, indicating that they are not appreciably metabolized by CYP1A2. Central plateau: The mid-range of the distribution is wide and relatively flat, reflecting the fact that moderate CYP1A2 substrate liability is common and generally acceptable. Substrate group (right): A pronounced increase is observed at high probabilities (>0.8), encompassing approximately 16-17% of drugs that are clearly metabolized by CYP1A2.

The structural basis for this pattern lies in the unusually narrow and planar active site of CYP1A2, which preferentially accommodates flat, aromatic substrates such as caffeine and theophylline. Consequently, many contemporary drug molecules, designed to be more three-dimensional and globular, are intrinsically poor CYP1A2 substrates and therefore populate the low-probability region of the distribution.

When a drug is intentionally or unavoidably designed as a CYP1A2 substrate, clinically relevant sources of variability must be anticipated. Induction of CYP1A2 by tobacco smoking can markedly accelerate drug clearance and reduce therapeutic exposure, while dietary components such as cruciferous vegetables (e.g., broccoli or Brussels sprouts) exert similar inductive effects. In addition, caffeine competes for the same metabolic pathway. Thus, although CYP1A2 substrate status is not inherently disqualifying, it introduces additional clinical “noise.” For this reason, alternative metabolic routes, most notably CYP3A4, are generally preferred when feasible, although reliance on CYP1A2 metabolism remains unavoidable for certain therapeutic classes, particularly psychotropic agents and some analgesics.

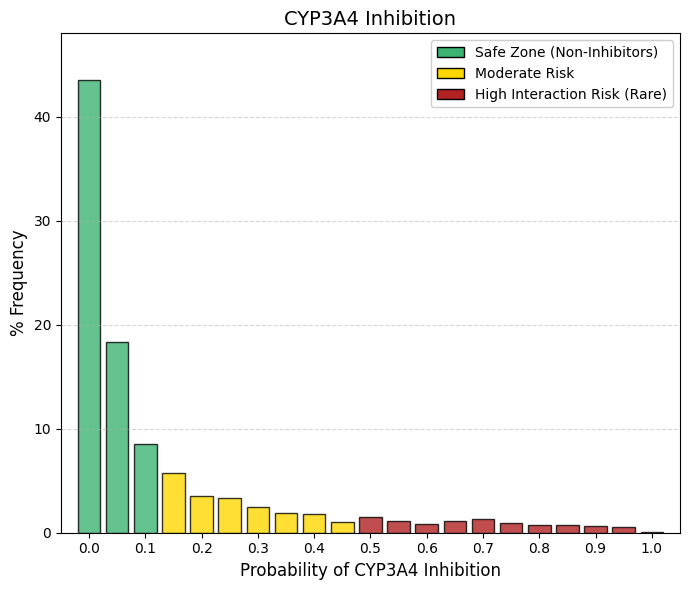

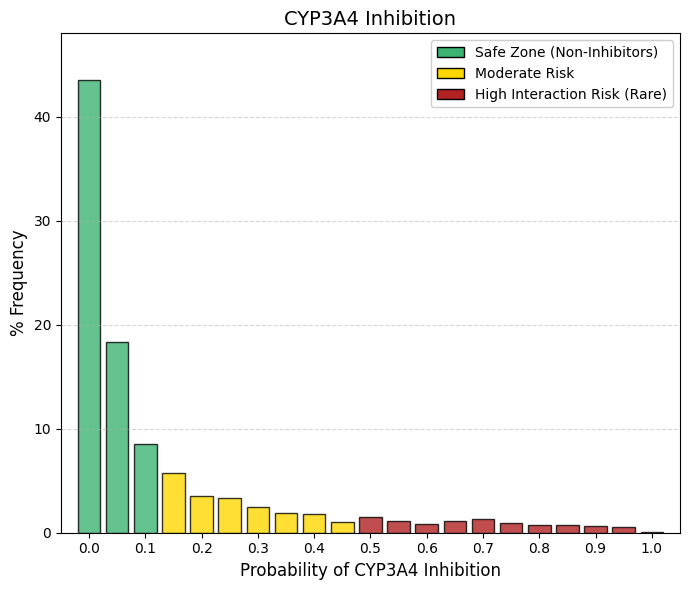

CYP3A4 inhibitor: CYP3A4 inhibitor predictor.

CYP3A4 is the predominant drug-metabolizing enzyme in humans, responsible for the biotransformation of approximately 50% of marketed drugs. A pronounced “safety wall” is evident at low inhibition probabilities: summing bins 0 and 0.05 (43.5% and 18.3%, respectively) indicates that roughly 62% of approved drugs have an almost negligible likelihood of inhibiting this enzyme. In comparison with CYP1A2—which already exhibited a conservative inhibition profile—CYP3A4 is subject to even stricter avoidance. The high-probability tail essentially vanishes, and beyond a probability of 0.5 the frequency drops below 1%, underscoring the industry’s near-absolute intolerance of CYP3A4 inhibition. The clinical rationale for this stringency is clear: inhibition of CYP3A4 compromises the clearance of a wide range of essential medications, including statins, benzodiazepines, anticoagulants, and antiretroviral agents. Such interactions can result in dramatic increases in systemic drug exposure, leading to severe adverse effects, exemplified by statin-induced rhabdomyolysis.

Consequently, a drug candidate that is identified as a potent CYP3A4 inhibitor is highly unlikely to progress to regulatory approval, reflecting CYP3A4’s role as one of the most stringent metabolic safety filters in modern drug development.

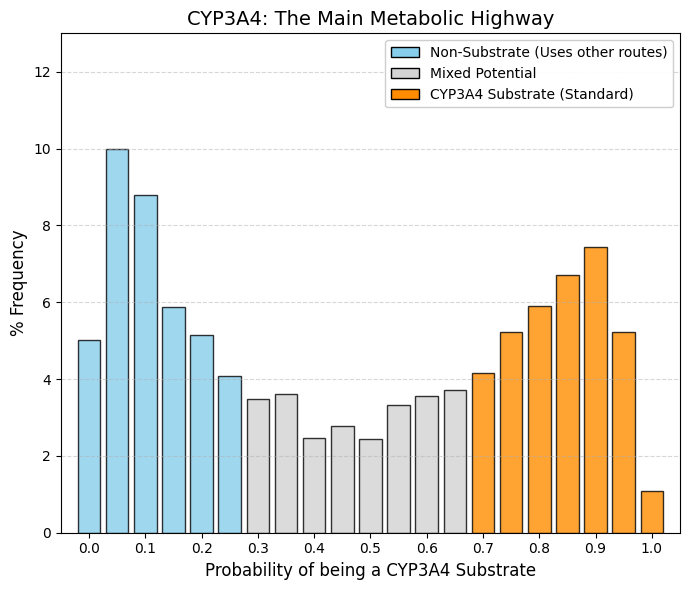

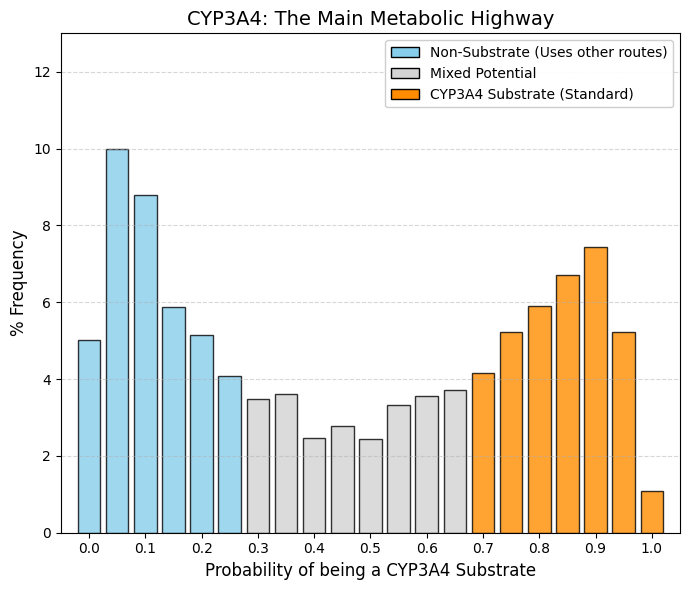

CYP3A4 substrate: CYP3A4 substrate predictor.

Whereas drug discovery programs rigorously exclude CYP3A4 inhibition, substrate liability for this enzyme is widely accepted. The resulting distribution is therefore bimodal. Left peak (non-substrates, ~30%): This group comprises predominantly hydrophilic drugs that are eliminated unchanged via renal excretion or are cleared through more selective metabolic pathways, such as CYP2D6. Right peak (substrates, ~35-40%): A pronounced accumulation is observed at high substrate probabilities (0.7-0.95), reflecting a substantial fraction of marketed drugs that are efficiently metabolized by CYP3A4. This pattern arises from the exceptional catalytic “promiscuity” of CYP3A4, which possesses a large, flexible active site capable of accommodating structurally diverse, bulky, and lipophilic molecules. Lipophilicity is often a prerequisite for oral bioavailability and cellular uptake; however, the same property makes compounds readily recognizable by CYP3A4.

Drug design must therefore navigate an inherent trade-off. Compounds can be engineered to resist CYP3A4-mediated metabolism to prolong systemic exposure, or CYP3A4 clearance can be accepted, with therapeutic efficacy maintained through appropriate dose and dosing frequency adjustments. As a result, a substantial proportion of approved drugs are intentionally or unavoidably substrates of CYP3A4, reflecting its central role in human xenobiotic metabolism.

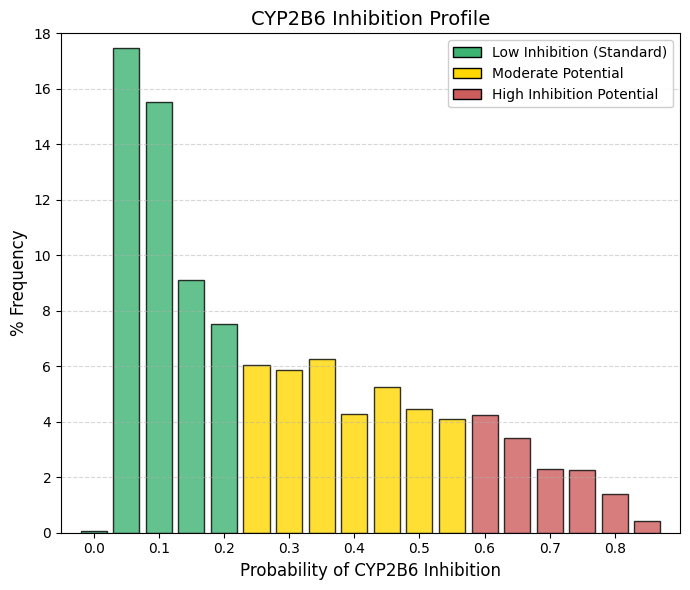

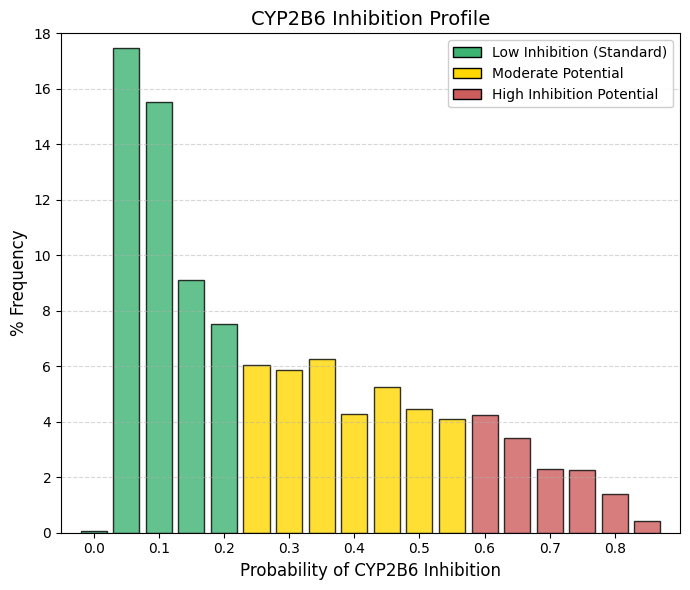

CYP2B6 inhibitor: CYP2B6 inhibitor predictor.

The inhibition profile of CYP2B6 closely resembles that of CYP1A2, albeit with a subtle but informative distinction at the lower end of the distribution. Unlike other safety-related parameters, where bin 0 is dominant, CYP2B6 shows a negligible frequency at bin 0 (0.08%). Instead, the largest accumulation occurs at low but nonzero probabilities (0.05 and 0.1), which together account for nearly 33% of approved drugs. The distribution then declines gradually, such that the bins up to 0.35 cumulatively encompass approximately 65-70% of compounds. Thus, while most drugs exhibit a low likelihood of inhibiting CYP2B6, true zero-risk profiles are uncommon.

Although CYP2B6 contributes to the metabolism of only a small fraction of marketed drugs (approximately 2-5%), its role is clinically critical for several high-impact therapies, including efavirenz (HIV treatment), bupropion (antidepressant and smoking cessation aid), methadone, and the anesthetic propofol.

Because CYP2B6 is not a dominant clearance pathway for the majority of drug candidates, medicinal chemistry programs typically do not apply strong selective pressure to eliminate CYP2B6 interactions, in contrast to stringent optimization against liabilities such as hERG blockade or CYP3A4 inhibition. The resulting distribution therefore reflects a largely unforced chemical landscape: most compounds simply exhibit poor complementarity with the CYP2B6 active site, leading to generally low levels of inhibition without the need for aggressive structure-based avoidance strategies.

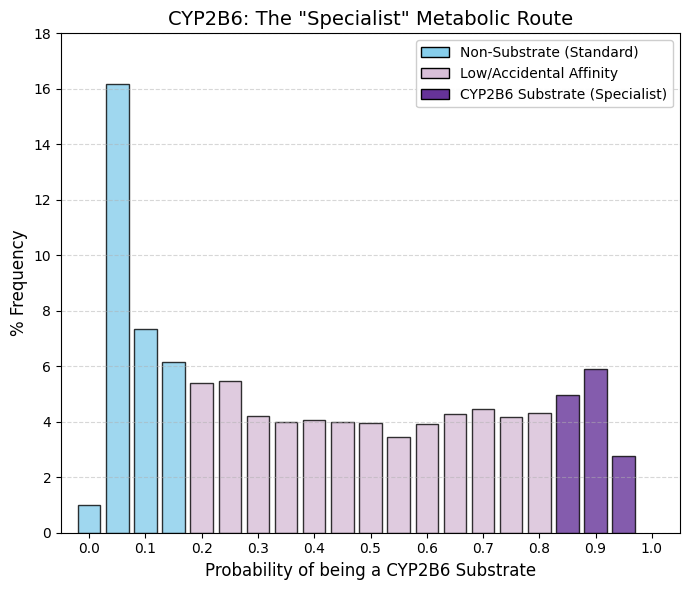

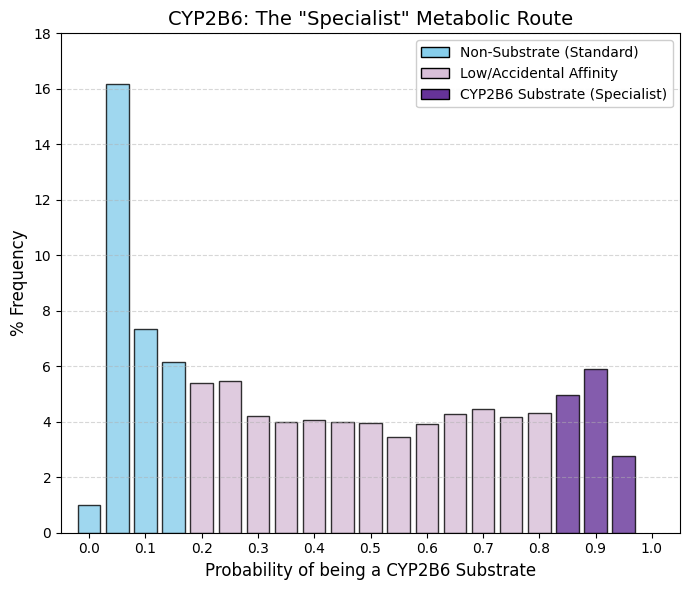

CYP2B6 substrate: CYP2B6 substrate predictor.

A pronounced peak is observed at bin 0.05 (16.18%), and when adjacent bins are included, the data indicate that for approximately 30% of approved drugs CYP2B6 is functionally irrelevant. In contrast to other profiles that decay toward zero at higher probabilities or exhibit a pronounced rebound at the upper end (as seen for the CYP3A4 substrate profile), this distribution remains relatively flat across the rest of the range, with a nearly constant frequency of approximately 4-5%. This pattern confirms that CYP2B6 is not a preferred metabolic pathway for the majority of drugs. CYP2B6 is a quantitatively minor hepatic enzyme, with substantially lower expression levels than CYP3A4 or CYP1A2. As a result, drug candidates are rarely designed to rely on CYP2B6-mediated clearance, as this pathway would be readily saturable. The observed flat plateau suggests that many compounds display incidental or moderate affinity for CYP2B6, while relatively few act as dominant or exclusive substrates.

The clinical relevance of CYP2B6 arises from its role in the metabolism of a limited but important set of drugs, including cyclophosphamide (oncology), efavirenz (HIV therapy), and methadone. Outside of these therapeutic classes, most drugs are unlikely to depend on CYP2B6 as a primary route of elimination.

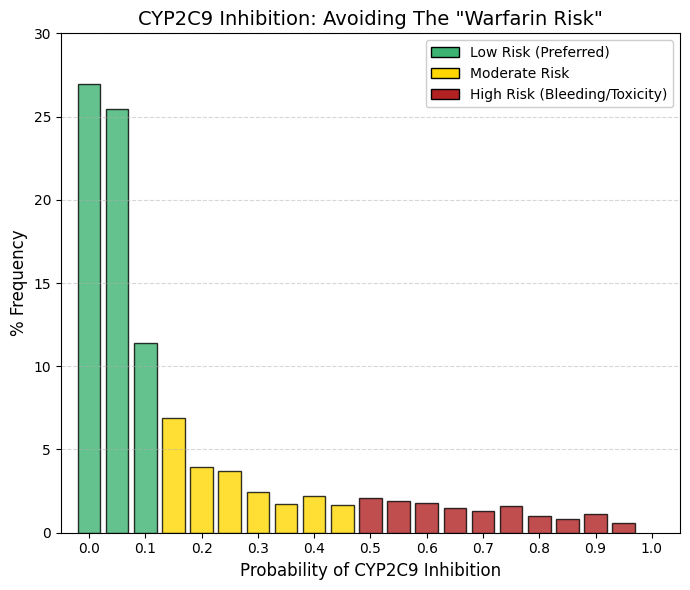

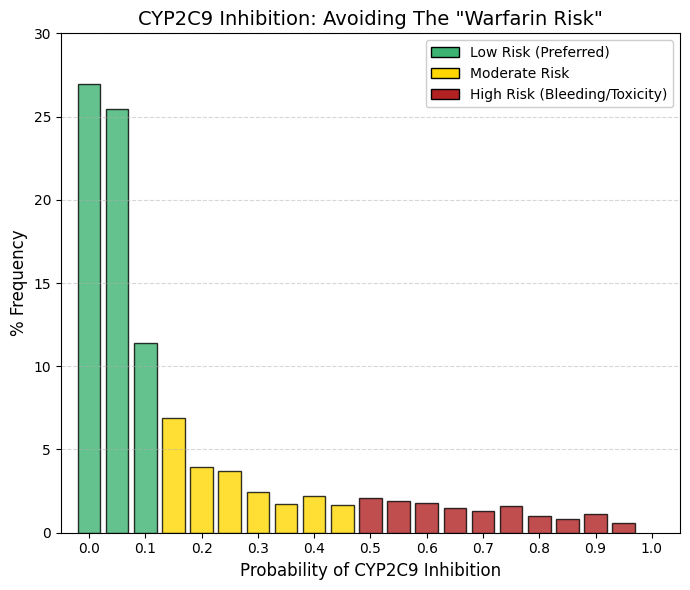

CYP2C9 inhibitor: CYP2C9 inhibitor predictor.

As observed for CYP3A4, the inhibition profile of CYP2C9 exhibits a pronounced exponential decline. Summation of the first three bins (26.9%, 25.4%, and 11.4%) indicates that approximately 64% of approved drugs have a zero or negligible probability of inhibiting CYP2C9. Beyond a probability of 0.2, the frequency drops below 4% and continues to decrease steadily across higher bins.

From a drug design perspective, inhibition of CYP2C9 is actively avoided, given the enzyme’s central role in the metabolism of clinically critical agents such as warfarin and sulfonylureas. Warfarin, in particular, has a very narrow therapeutic index; inhibition of CYP2C9 can markedly impair its clearance, leading to excessive anticoagulation and a high risk of severe internal bleeding.

In addition, several widely used nonsteroidal anti-inflammatory drugs (NSAIDs), including ibuprofen and diclofenac, are CYP2C9 substrates. Co-administration with potent CYP2C9 inhibitors can increase systemic exposure to these agents and exacerbate their gastrointestinal toxicity. Accordingly, except in highly justified and carefully managed cases, new drug candidates are designed to avoid significant CYP2C9 inhibition in order to minimize drug-drug interactions involving cardiovascular therapies and commonly used analgesics.

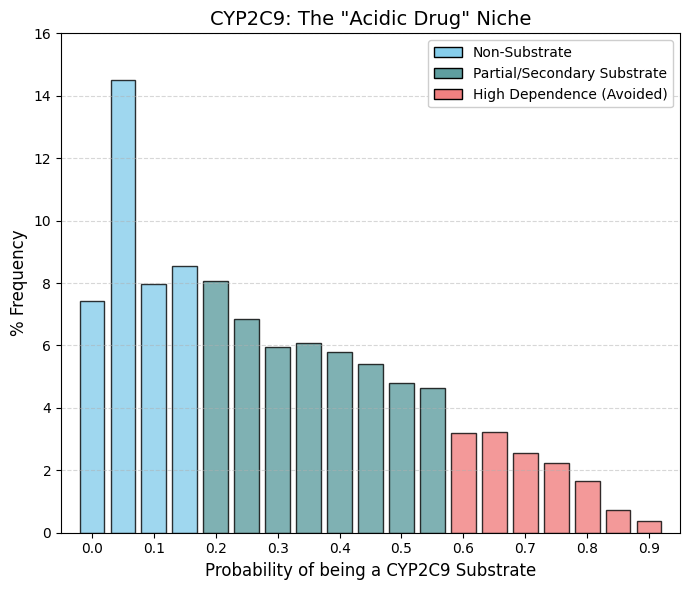

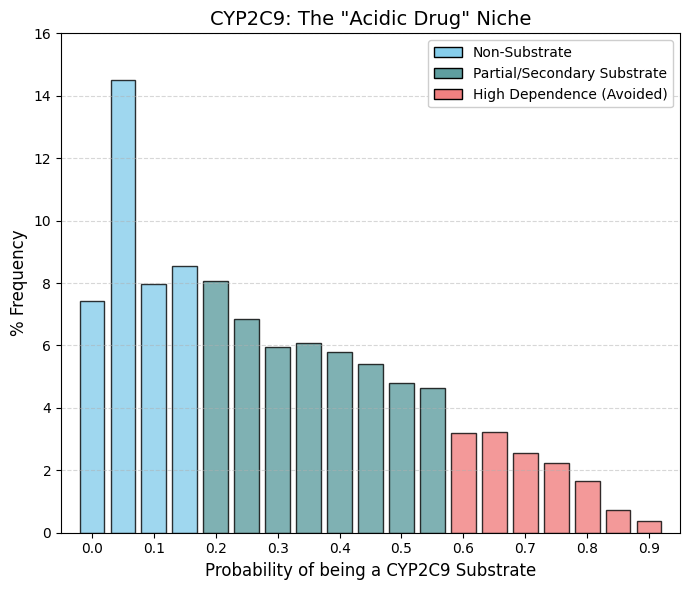

CYP2C9 substrate: CYP2C9 substrate predictor.

A striking contrast is observed when comparing this profile with that of CYP3A4, which displayed a characteristic U-shaped distribution. In the case of CYP2C9, the substrate distribution is right-skewed with a pronounced central “bulge.” Approximately 22% of approved drugs (bins 0 and 0.05) are not substrates of CYP2C9. In contrast, a high density of compounds populates the intermediate probability range (0.1-0.5), indicating that many drugs undergo partial metabolism by CYP2C9 without relying on it as an exclusive clearance pathway. Unlike CYP3A4, where a distinct high-probability peak is observed, the frequency here declines sharply beyond 0.7, and very few contemporary drugs appear to depend predominantly on CYP2C9-mediated metabolism.

This pattern reflects the well-defined chemical selectivity of CYP2C9, which preferentially metabolizes weak acids and molecules containing specific polar functional groups. Representative substrates include nonsteroidal anti-inflammatory drugs such as ibuprofen and diclofenac, as well as antidiabetic agents like glibenclamide. Compounds lacking acidic character rarely engage this enzyme, accounting for the substantial non-substrate population at the low-probability end of the distribution.

The paucity of high-probability substrates is further explained by the pronounced genetic polymorphism of CYP2C9. A significant fraction of the population, particularly among individuals of European ancestry, carries reduced-function (“slow metabolizer”) alleles. Drugs that depend almost exclusively on CYP2C9 for clearance (probabilities >0.9) would therefore pose a substantial overdose risk in these patients. Consequently, CYP2C9 is generally utilized as a secondary or shared metabolic pathway, and modern drug design deliberately avoids exclusive reliance on this enzyme.

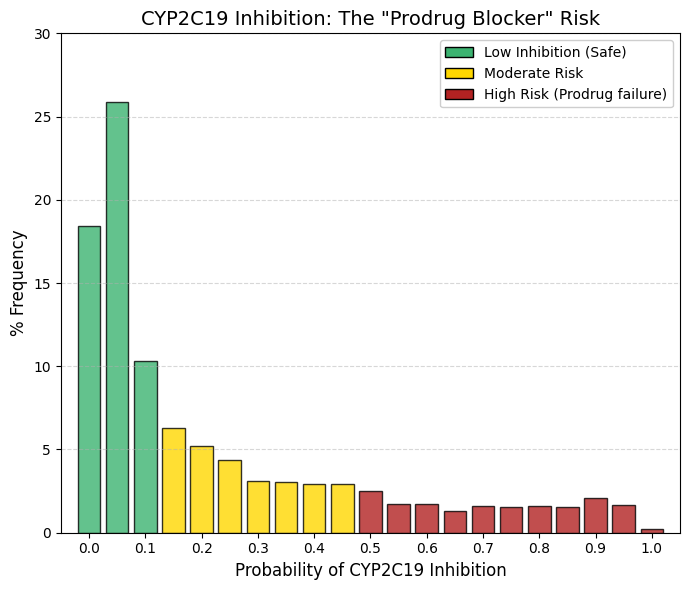

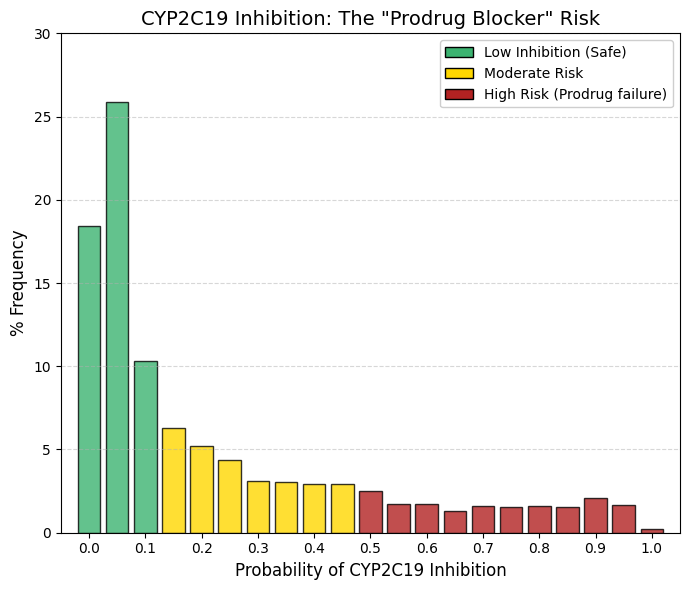

CYP2C19 inhibitor: CYP2C19 inhibitor predictor.

The observed distribution again follows an exponential decay, closely resembling the inhibition profiles of CYP3A4 and CYP2C9, but with a clinically distinctive feature. A large safety zone is evident in the lowest bins (0-0.05): summing 18.4% and 25.9% indicates that approximately 44% of marketed drugs have an essentially negligible probability of inhibiting CYP2C19. By bin 0.2, the cumulative frequency already encompasses the vast majority of compounds. Unlike CYP3A4, where the distribution tail nearly vanishes, the CYP2C19 curve maintains a persistent, low-level “background” of approximately 1.5-2% across the higher probability range. This suggests that it is intrinsically more difficult to design molecules that are completely inert toward CYP2C19, although medicinal chemistry efforts consistently aim to minimize such interactions.

The clinical relevance of CYP2C19 inhibition is unusual in that it is driven primarily by prodrug activation rather than by impaired clearance. A paradigmatic example is clopidogrel (Plavix), an essential antiplatelet agent that is pharmacologically inactive until bioactivated by CYP2C19. Co-administration of a CYP2C19 inhibitor with clopidogrel can severely reduce its conversion to the active metabolite, leading to therapeutic failure, increased platelet aggregation, and a heightened risk of myocardial infarction.

This risk is compounded by the high prevalence of CYP2C19 genetic polymorphisms. A substantial proportion of patients are poor metabolizers with intrinsically low CYP2C19 activity. In these individuals, even moderate inhibition can effectively abolish enzymatic function, rendering clopidogrel largely ineffective. For this reason, modern drug development treats CYP2C19 inhibition as a critical liability, particularly for compounds intended for chronic use in cardiovascular populations.

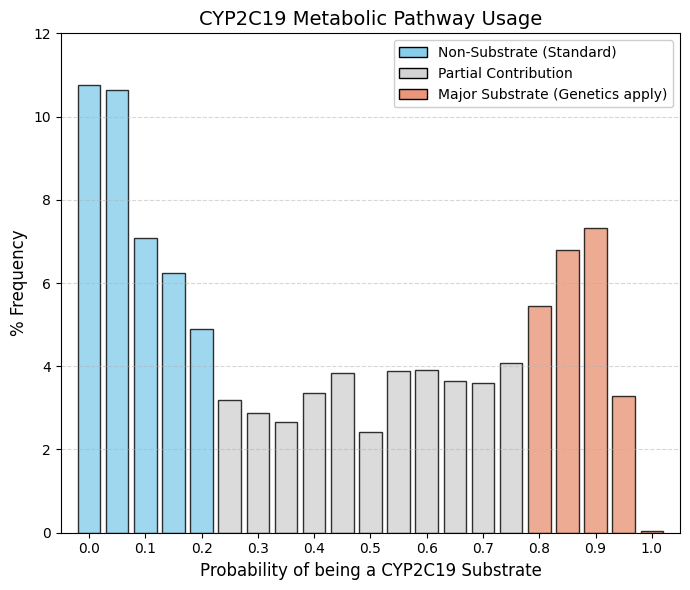

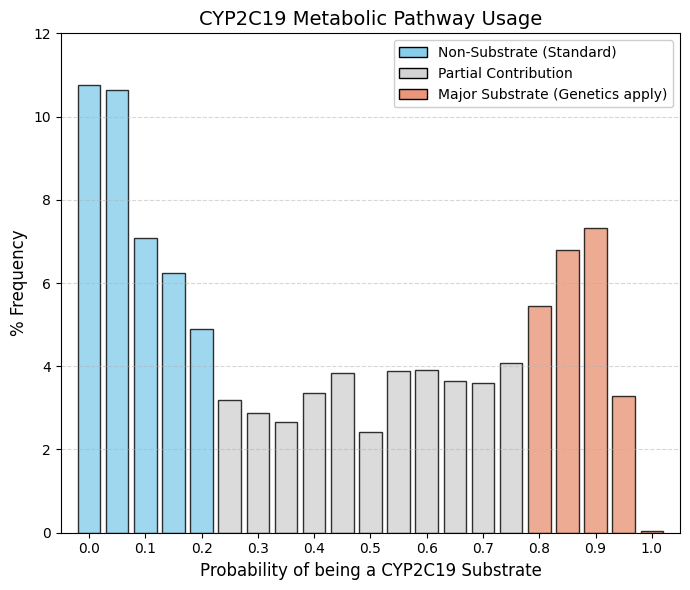

CYP2C19 substrate: CYP2C19 substrate predictor.

As with CYP3A4, the distribution is clearly bimodal, with two well-defined peaks, but with an important distinction: the central “valley” is noticeably shallower. This indicates a larger fraction of drugs with intermediate probabilities of interaction rather than a strict separation between non-substrates and strong substrates. Outlier Group (Bins 0-0.1). Summing the initial bins shows that approximately 28% of marketed drugs exhibit little to no interaction with CYP2C19, forming a substantial non-substrate population. Final Surge (Bins 0.8-0.9). In contrast to CYP2C9, where the right tail collapses, the distribution here rises again toward the end. From bin 0.8 to 0.9, there is sustained growth, reaching 7.3% in bin 0.9. This demonstrates that drugs designed to be strong CYP2C19 substrates are not only tolerated but deliberately accepted in modern pharmacotherapy.

CYP2C19 represents a preferred metabolic route for two major and commercially dominant drug classes. The first is proton pump inhibitors (PPIs) such as omeprazole and pantoprazole. These compounds have wide therapeutic windows and excellent safety profiles, making interindividual variability in CYP2C19 activity clinically manageable. The second class is antidepressants, notably citalopram and escitalopram, which also rely significantly on CYP2C19 for clearance.

Although CYP2C19 is highly polymorphic, encompassing poor, extensive, and ultra-rapid metabolizers, dependence on this pathway is considered acceptable because these drugs generally lack the narrow therapeutic margins seen with CYP2C9 substrates such as warfarin. Consequently, variability in exposure rarely translates into catastrophic toxicity, allowing CYP2C19 to remain a viable and strategically used metabolic route in drug design.

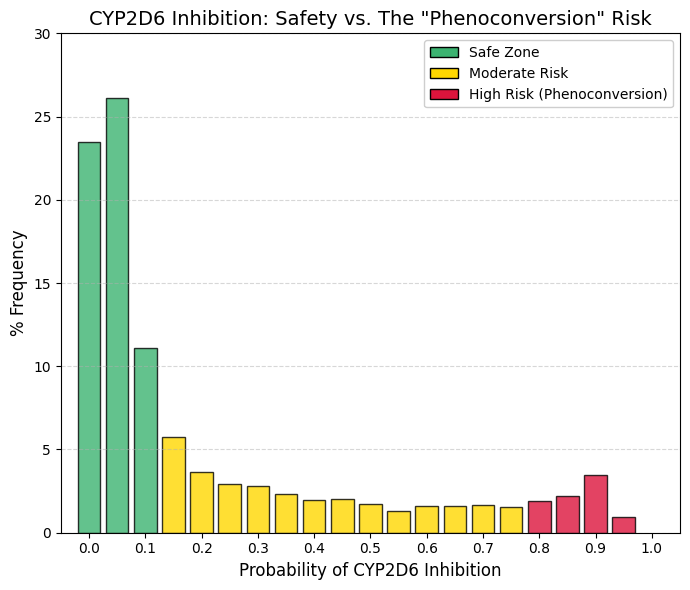

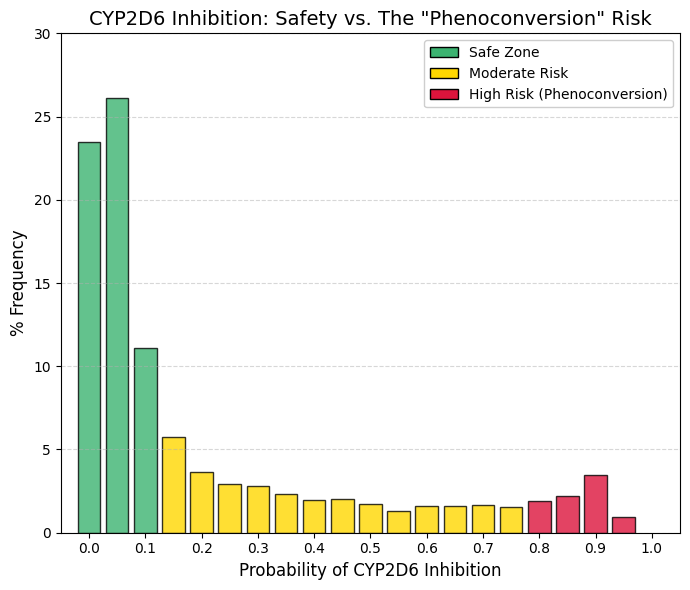

CYP2D6 inhibitor: CYP2D6 inhibitor predictor.

The observed frequency distribution exhibits an exponential decay pattern similar to that of CYP2C9; however, a distinct terminal feature differentiates this enzyme from comparable isoforms. Cumulative analysis of the initial three bins indicates that approximately 60% of the screened compounds exhibit negligible inhibition. In the interval between 0.2 and 0.8, the frequency remains low and stable. Conversely, a significant anomaly is observed in bin 0.9 (3.44%), characterized by a marked increase from the 1.9% observed at 0.8. This subset corresponds to known potent inhibitors, such as fluoxetine, paroxetine, and quinidine.

This finding highlights the clinical risk of phenoconversion: patients identified as genotypic extensive metabolizers who concomitantly take a potent inhibitor (bin 0.9 and above) may exhibit a phenotype functionally equivalent to that of a poor metabolizer. For instance, tamoxifen is a prodrug requiring bioactivation via CYP2D6; the presence of a strong inhibitor therefore compromises its metabolic activation and therapeutic efficacy. Compounds falling within the >0.8 range thus warrant a high-priority warning: 'High Risk: Potential failure of prodrug therapies (e.g., tamoxifen).'
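The warning rule at the end of this section can be attached to screening output with a few lines of code. The sketch below is illustrative only: the 0.8 cutoff and the warning wording come from the text, while the function name and the example probabilities are hypothetical.

```python
from typing import Optional

WARNING_CUTOFF = 0.8  # range quoted in the text for the high-priority warning
WARNING_TEXT = "High Risk: Potential failure of prodrug therapies (e.g., tamoxifen)."


def cyp2d6_inhibition_warning(probability: float) -> Optional[str]:
    """Return the high-priority warning if the predicted probability exceeds the cutoff."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must lie in [0, 1]")
    if probability > WARNING_CUTOFF:
        return WARNING_TEXT
    return None


# Hypothetical predicted CYP2D6 inhibition probabilities (illustrative only).
for p in (0.05, 0.45, 0.92):
    message = cyp2d6_inhibition_warning(p)
    print(p, message if message else "no warning")
```

Returning `None` below the cutoff keeps the flag easy to filter on when applied to a whole table of compounds.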

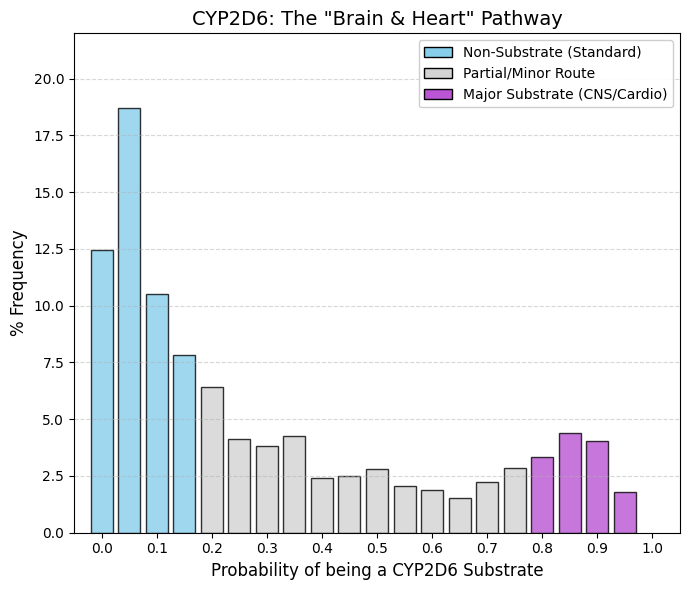

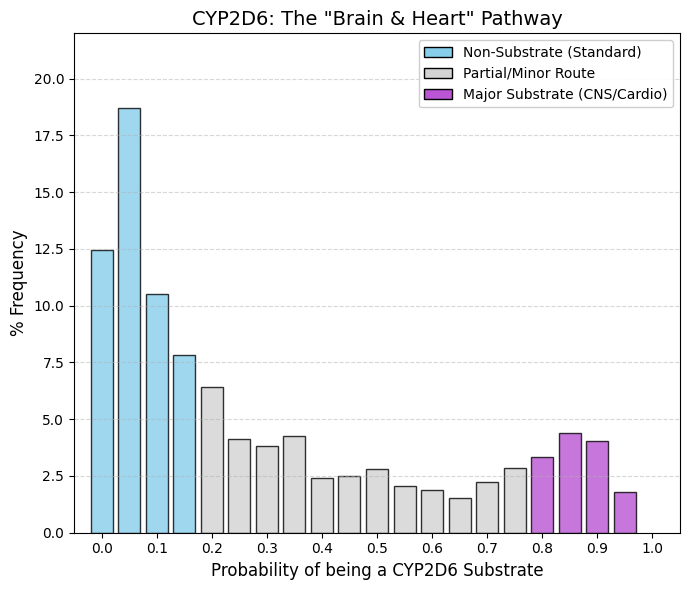

CYP2D6 substrate: CYP2D6 substrate predictor.

In the 0-0.15 range, approximately 50% of screened compounds exhibit negligible affinity for the enzyme; these agents typically possess therapeutic indications unrelated to the Central Nervous System (CNS) or cardiovascular targets. However, the distribution exhibits a distinct elevation in the 0.8-0.95 range, where 12-15% of drugs are identified as substrates. Unlike CYP2C9, where substrate frequency diminishes to near-zero at higher ranges, CYP2D6 retains a robust substrate population.

This phenomenon is driven by structural determinants. CNS penetration generally necessitates lipophilicity and the presence of a basic nitrogen moiety (amine). The catalytic core of CYP2D6 contains an aspartic acid residue that facilitates electrostatic interactions with these basic amines. Consequently, it is challenging to engineer psychotropic agents (e.g., antidepressants or antipsychotics) that achieve CNS penetration without also serving as CYP2D6 substrates. The observed terminal spike, therefore, reflects this structural constraint, representing a niche dominated by psychiatric medications and beta-blockers.

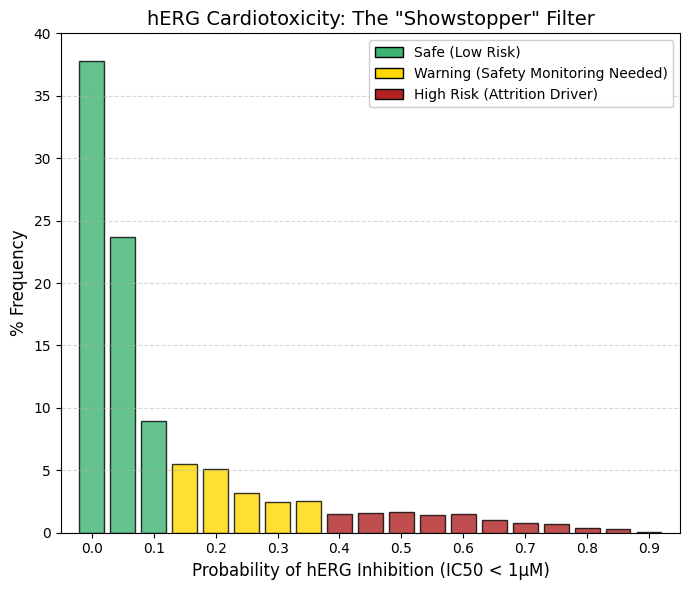

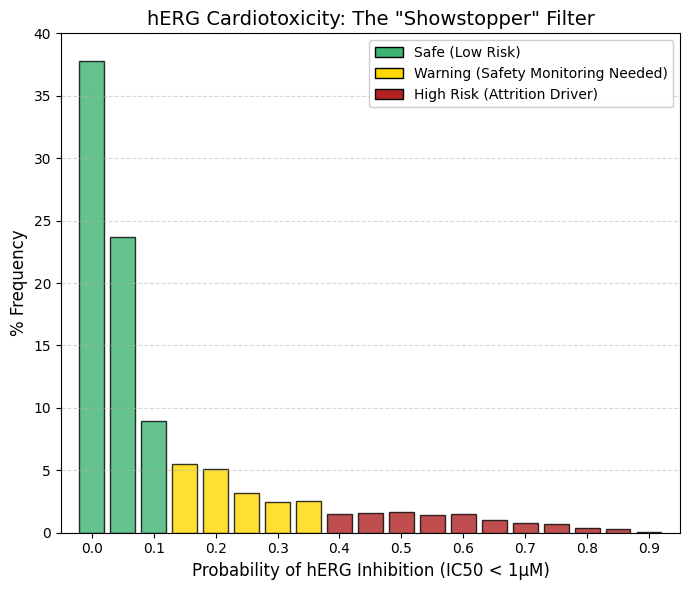

hERG (1 µM): A threshold value of 1 µM was used.

Inhibition of the hERG channel (Kv11.1) is a decisive determinant of compound viability in drug development. Cumulative analysis of the initial bins (0-0.1) reveals that 70.4% of approved drugs exhibit negligible hERG inhibition at 1 µM concentrations. Above a probability threshold of 0.5, the frequency declines to <1.5% and approaches zero. In contrast to CYP inhibition, which can often be managed via dose titration, potent hERG blockade typically necessitates project termination due to the associated risk of fatal arrhythmias. Functionally, hERG mediates cardiac repolarization; blockade delays this 'reset' phase, manifesting clinically as QT interval prolongation. This state creates a window of vulnerability where premature depolarization can trigger Torsades de Pointes, a chaotic polymorphic ventricular tachycardia associated with sudden cardiac death. Structurally, the hERG channel features a voluminous, hydrophobic central cavity that readily accommodates bulky, lipophilic molecules, particularly those containing basic amines. The limited number of compounds occupying the high-risk 'tail' of the distribution are likely restricted to oncology or antiarrhythmic indications, where the risk-benefit profile permits a higher toxicity threshold. Conversely, for indications such as analgesia or anti-infectives, a positive hERG signal is generally considered a definitive 'no-go' criterion.

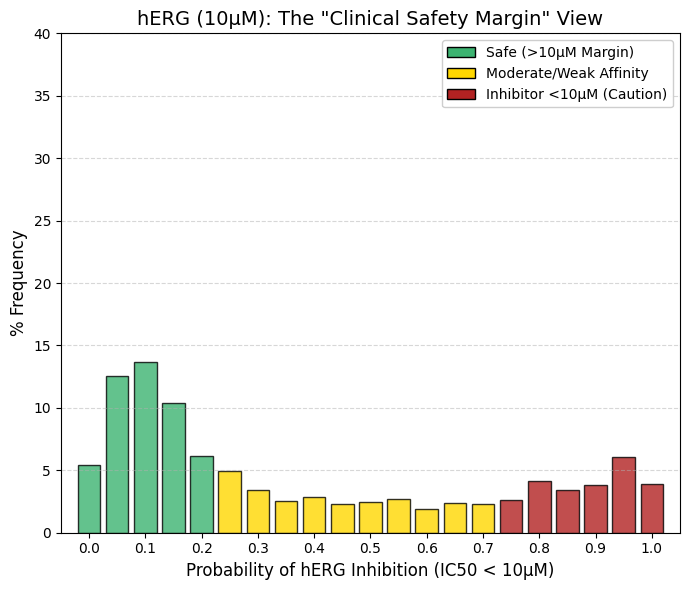

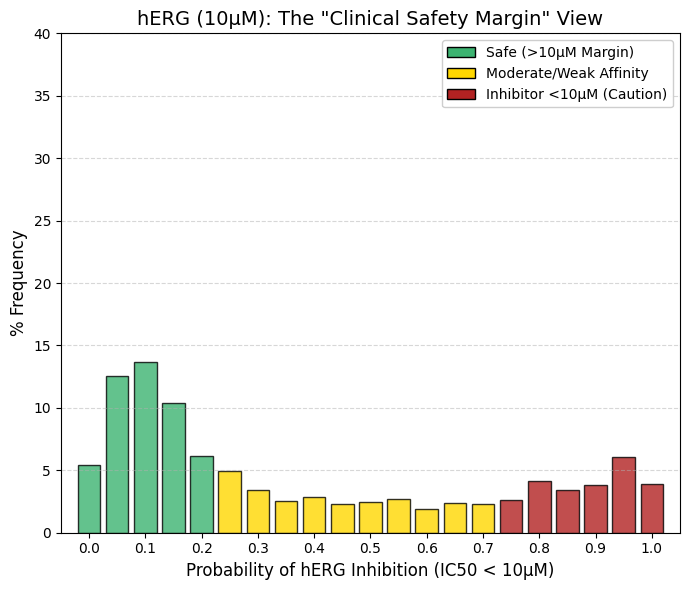

hERG (10 µM): A threshold value of 10 µM was used.

While the hERG inhibition prediction at 1 µM primarily identifies highly potent and potentially lethal compounds, increasing the threshold to 10 µM imposes a more stringent safety criterion and allows the detection of compounds associated with latent cardiotoxic risk. At the 10 µM threshold, the distribution shifts noticeably: fewer drugs populate the leftmost bins compared with the 1 µM graph, while the right-hand tail (bins 0.95-1.0) increases substantially. A considerable number of approved drugs exhibit moderate hERG channel inhibition within the 1-10 µM range. Although these compounds are not immediately life-threatening, their clinical use typically requires QT interval monitoring in hospital settings. The right tail (bins 0.8-1.0) comprises approximately 17% of marketed drugs. These molecules interact measurably with the hERG channel but have nevertheless been approved because their therapeutic benefit outweighs the associated cardiac risk, as exemplified by certain antipsychotics and macrolide antibiotics. From a drug development perspective, compounds that fail the 1 µM criterion are generally discarded, whereas those that pass at 1 µM but fail at 10 µM are classified as requiring caution.
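The two-tier rule in the last sentence can be written as a simple triage function. This is a sketch under stated assumptions: a compound is taken to "fail" a criterion when its predicted probability reaches 0.5 (an assumed cutoff, borrowed from the 0.5 threshold the text cites for probabilistic models), and the function name and labels are hypothetical.

```python
FAIL_CUTOFF = 0.5  # assumption: probability at or above this value counts as failing a criterion


def herg_triage(p_1um, p_10um, cutoff=FAIL_CUTOFF):
    """Classify a compound from its predicted hERG probabilities at 1 µM and 10 µM."""
    for value in (p_1um, p_10um):
        if not 0.0 <= value <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")
    if p_1um >= cutoff:
        return "discard"  # fails the 1 µM criterion
    if p_10um >= cutoff:
        return "caution"  # passes at 1 µM but fails at 10 µM
    return "pass"         # passes both criteria


# Hypothetical (p_1um, p_10um) pairs for three compounds (illustrative only).
for pair in [(0.72, 0.95), (0.10, 0.81), (0.03, 0.12)]:
    print(pair, herg_triage(*pair))
```

Checking the 1 µM criterion first mirrors the order of severity in the text, so a compound that fails both tiers is reported as "discard" rather than "caution".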

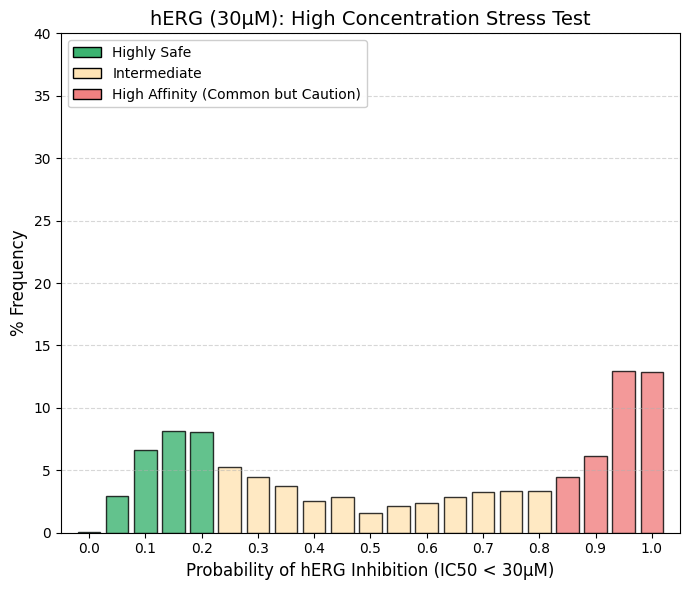

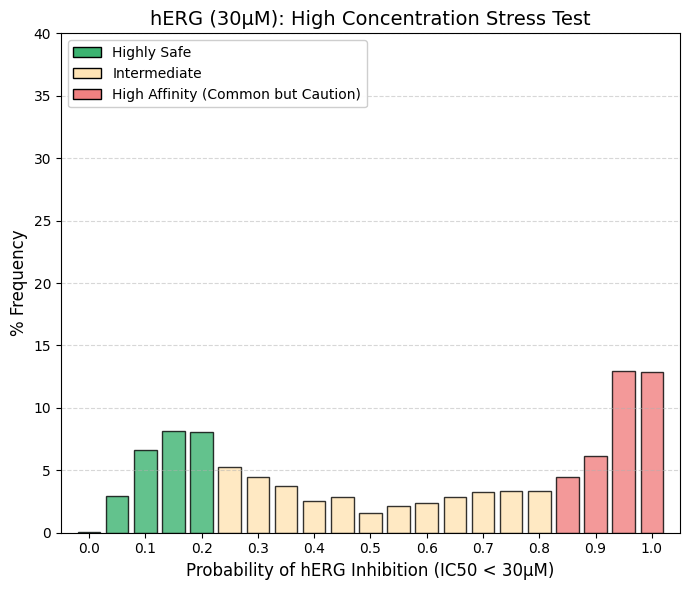

hERG (30 µM): A threshold value of 30 µM was used.

The frequency distribution at 30 µM exhibits a pronounced morphological shift compared to the 1 µM dataset. Whereas the 1 µM distribution was heavily skewed towards the lower quantiles (indicating safety), the 30 µM profile demonstrates a significant rightward shift. At this elevated concentration, cumulative analysis of the upper bins (0.85-1.0) reveals that over 30% of compounds test positive for inhibition, a marked contrast to the negligible activity observed at 1 µM. High-concentration hERG blockade is a frequent phenomenon, often driven by nonspecific interactions involving lipophilic moieties. However, clinical risk is determined by the safety margin rather than absolute potency. For example, a drug with a therapeutic plasma concentration of 0.01 µM that inhibits hERG at 30 µM retains a 3000-fold safety margin, generally considered robust. Conversely, compounds that persist in the lower range (Bins 0-0.2) even at 30 µM are classified as 'hERG-Silent.' These agents represent the optimal safety profile, appearing electrophysiologically inert even at supratherapeutic concentrations.
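The safety-margin arithmetic in the example above is the ratio of the concentration at which hERG is inhibited to the therapeutic plasma concentration. A minimal sketch follows; the function and parameter names are hypothetical, and both concentrations are assumed to be expressed in the same unit (µM).

```python
def herg_safety_margin(herg_inhibitory_conc_um, therapeutic_plasma_conc_um):
    """Return the fold difference between hERG-inhibiting and therapeutic concentrations."""
    if herg_inhibitory_conc_um <= 0 or therapeutic_plasma_conc_um <= 0:
        raise ValueError("concentrations must be positive")
    return herg_inhibitory_conc_um / therapeutic_plasma_conc_um


# Values from the example in the text: inhibition at 30 µM, therapeutic level 0.01 µM.
margin = herg_safety_margin(30.0, 0.01)
print(f"Safety margin: {margin:.0f}-fold")
```

This reproduces the 3000-fold figure quoted in the text. The same hERG potency combined with a higher therapeutic concentration gives a proportionally smaller margin.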

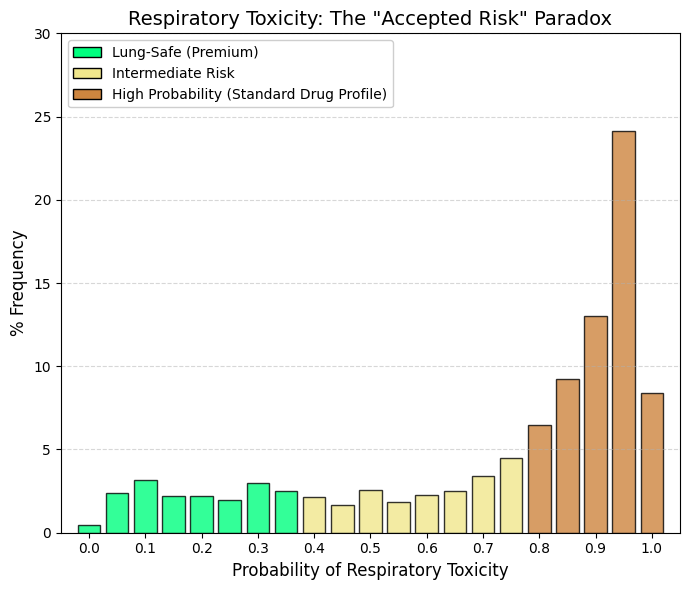

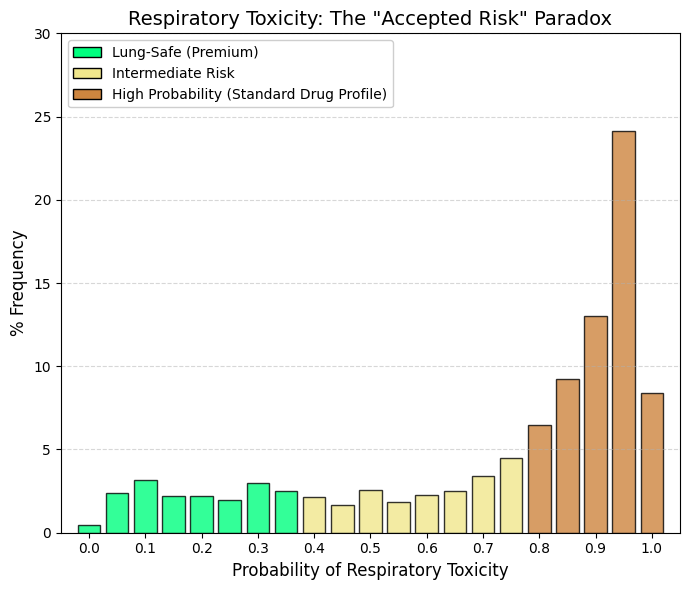

Respiratory toxicity.

In stark contrast to the hERG inhibition profile, which was concentrated in the lower (safe) bins, the respiratory toxicity distribution is concentrated at the high-probability end. The highest probability bin (0.95) alone accounts for 24.15% of compounds. Cumulative analysis of the bins >0.7 reveals that 60-70% of approved drugs are classified as 'Probably Toxic to the Respiratory System,' whereas less than 5% reside in the minimal risk interval (0-0.2). This distribution likely reflects the broad phenotypic definitions employed by ADMETsar, which encompass endpoints ranging from 'respiratory reactions' to 'respiratory depression'. Numerous therapeutic classes, including opioids, benzodiazepines, antihistamines, and beta-blockers, possess known but clinically manageable pulmonary side effects. Consequently, the model appears to detect any signal of adverse respiratory events within product labeling or pharmacovigilance reports (e.g., cough, mild dyspnea), rather than flagging only fatal acute toxicity. Given that DrugBank comprises approved therapeutics, the model effectively learns an inherent association between pharmaceutical activity and respiratory liability. Therefore, compounds located in the lower probability zone (<0.3) represent a rare and exceptional subset characterized by a uniquely clean pulmonary safety profile.
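Cumulative shares such as "bins >0.7" or "interval 0-0.2" are fractions of compounds whose predicted probability lies above or below a threshold. The sketch below shows the calculation on a small list of hypothetical probabilities. The values are made up for illustration and are not taken from the DrugBank dataset.

```python
def fraction_above(probs, threshold):
    """Fraction of predicted probabilities strictly above `threshold`."""
    if not probs:
        raise ValueError("probs must not be empty")
    return sum(p > threshold for p in probs) / len(probs)


def fraction_at_or_below(probs, threshold):
    """Fraction of predicted probabilities at or below `threshold`."""
    if not probs:
        raise ValueError("probs must not be empty")
    return sum(p <= threshold for p in probs) / len(probs)


# Hypothetical respiratory-toxicity probabilities (illustration only)
probs = [0.05, 0.15, 0.35, 0.55, 0.72, 0.78, 0.84, 0.91, 0.96, 0.98]

high_risk = fraction_above(probs, 0.7)       # share in bins > 0.7
low_risk = fraction_at_or_below(probs, 0.2)  # share in the 0-0.2 interval
print(f"high risk: {high_risk:.0%}, low risk: {low_risk:.0%}")
```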

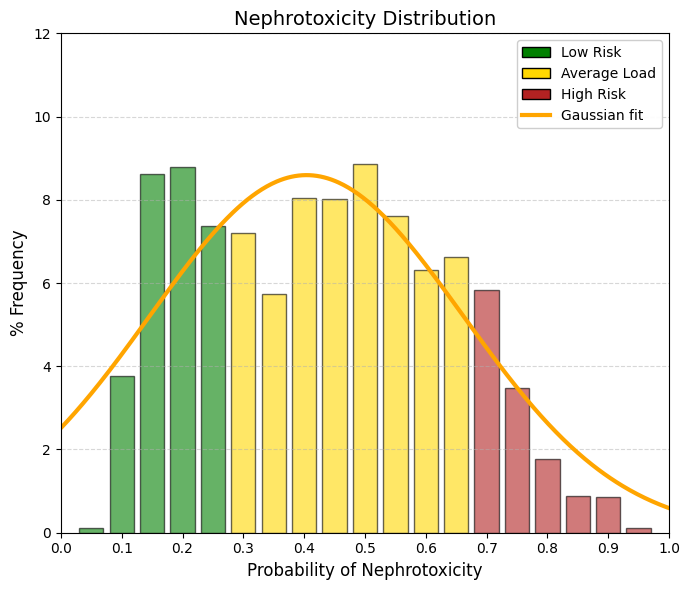

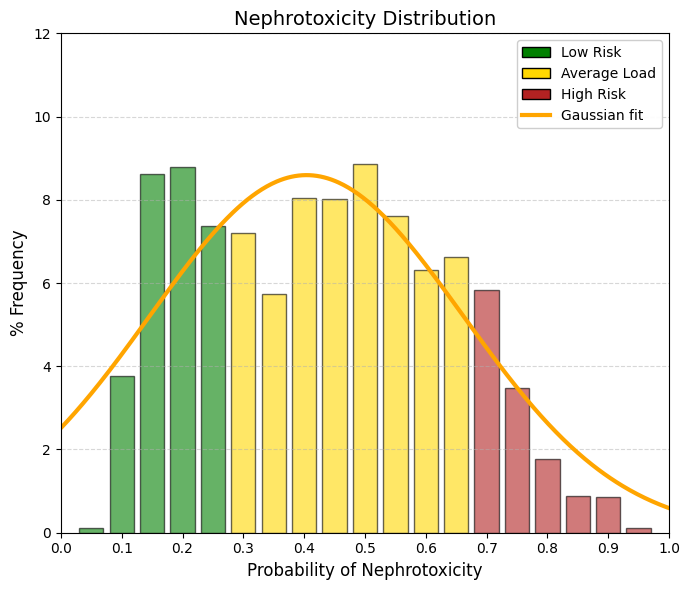

Nephrotoxicity.

The kidney, together with the liver, is one of the two most important excretory organs in the human body. Its primary functions include the reabsorption of water and other essential substances, such as glucose, amino acids, and sodium ions, as well as the production of urine for the elimination of metabolic waste products and toxic compounds. In addition, the kidney regulates the body’s water, electrolyte, and acid-base balance. These renal functions are essential for normal metabolism and for maintaining the stability of the internal environment. However, during these physiological processes, the kidneys are continuously exposed to drugs and chemicals circulating in the bloodstream and are therefore particularly susceptible to drug-induced damage. While the liver primarily metabolizes drugs to increase their water solubility and directs them either to the kidneys for urinary excretion or to the bile for fecal elimination, the kidney filters both unmetabolized and metabolized compounds into the urine. Most drugs cluster in the intermediate range of the distribution, between 0.2 and 0.7. The extremes are sparsely populated: very few drugs can be classified as “non-nephrotoxic” (<0.1) or “highly nephrotoxic” (>0.8), and compounds with pronounced nephrotoxicity, such as aminoglycosides, cisplatin, and high-dose nonsteroidal anti-inflammatory drugs, are well known and clinically feared. A predicted nephrotoxicity probability of 40-50% (corresponding to the center of the graph) does not imply inevitable renal failure; rather, it indicates a tendency for renal accumulation and increased renal workload, which in turn confers a heightened susceptibility to kidney injury.

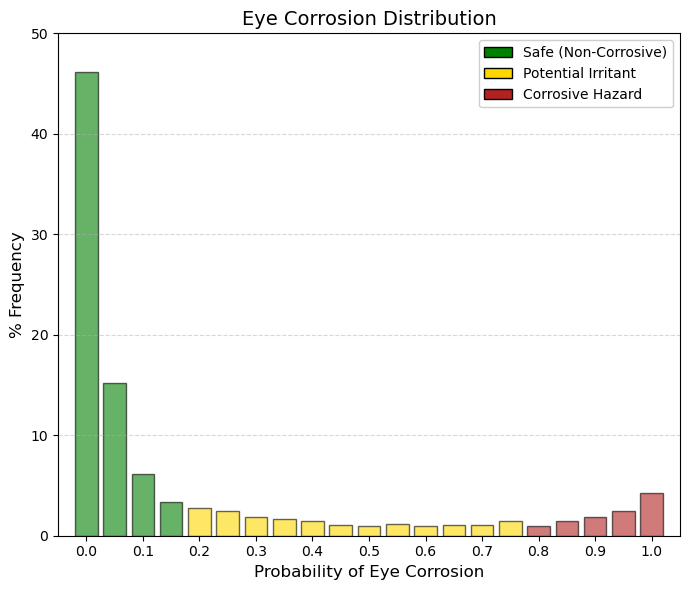

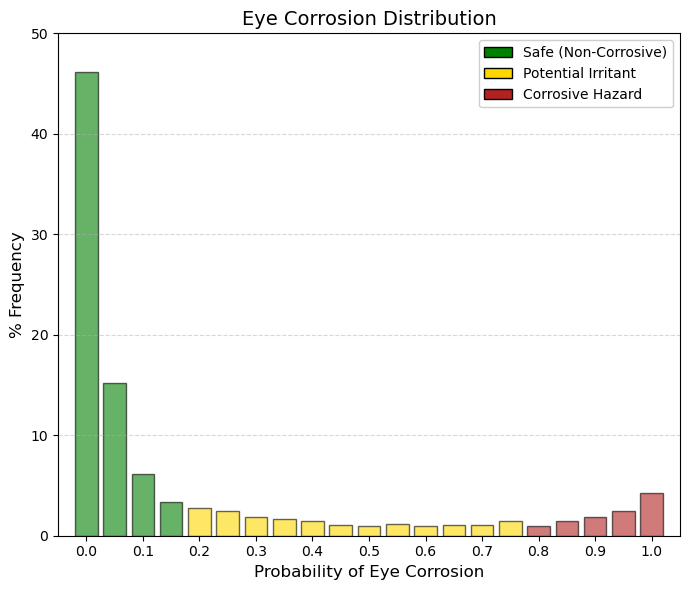

Eye corrosion.

Summing the first three bars (bins 0-0.1) shows that 67.5% of drugs have an almost negligible probability of causing ocular corrosion. In ADMETsar, this endpoint corresponds to hazard labels H314 (causes severe skin burns and eye damage) and H318 (causes serious eye damage). Importantly, this classification does not refer to mild or reversible irritation (H319), such as transient conjunctival redness, but rather to severe and often irreversible ocular injury. The final group (bin 1.0) comprises 4.25% of drugs, which are predicted with the highest probability to be ocular corrosives. These compounds are likely to include strong acids, strong bases, or highly reactive alkylating agents (e.g., certain chemotherapeutics), all of which require strict personal protective equipment, including safety goggles, during laboratory handling. Eye corrosion is therefore a critical parameter for manufacturing and handling safety. The vast majority of drugs are intentionally designed to be chemically mild and close to physiological pH, which explains why the distribution is concentrated at the low-probability (left) end. If a drug intended for ophthalmic administration exhibits a high predicted probability of eye corrosion, the development project would be immediately terminated. For orally administered drugs, by contrast, a high score in this parameter would not necessarily halt development, but it would imply a significant occupational hazard for the chemists involved in synthesis and formulation.

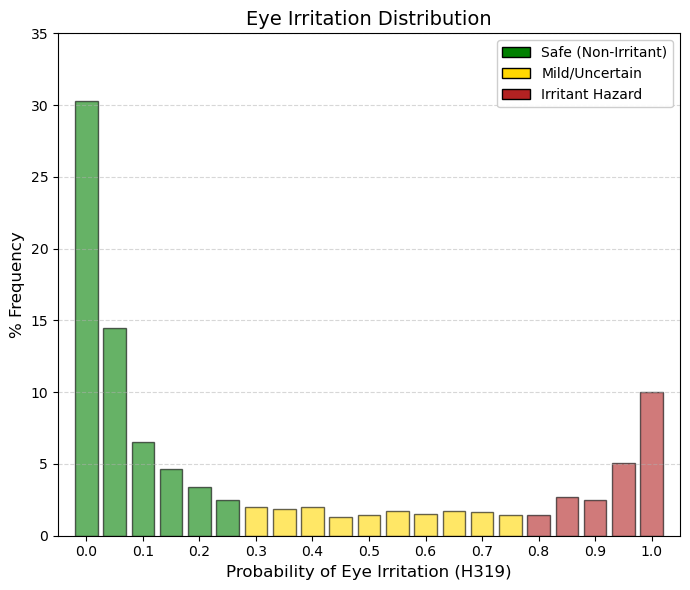

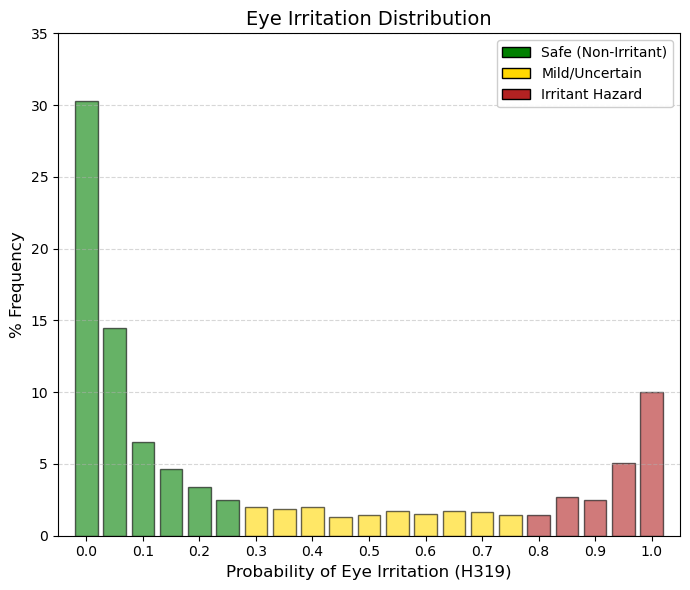

Eye irritation.

Clear distinctions can be observed between ocular corrosion (H314/H318) and ocular irritation (H319). At the extreme right of the distribution (bin 1.0), corrosion accounts for approximately 4.2% of approved drugs listed in DrugBank, whereas ocular irritation encompasses nearly 10%. Thus, it is substantially more common for a drug to be classified as irritating (a reversible and generally manageable effect) than as corrosive, which implies permanent tissue damage. Consistent with this distinction, the proportion of compounds in bin 0 (no interaction) decreases from 46% for corrosion to approximately 30% for irritation, indicating a lower degree of absolute safety with respect to mild ocular effects. Compounds located in the high-probability region (>0.8) are unlikely to be suitable for ophthalmic formulations without specialized buffering systems and would require strict eye protection (e.g., safety goggles) during industrial manufacture. From a regulatory perspective (OECD, EPA, ECHA), ocular corrosion and irritation are clearly differentiated by the criterion of reversibility. Hazard category H318 (Eye Corrosion/Serious Eye Damage) refers to irreversible tissue injury or visual impairment that does not fully resolve within 21 days following exposure. In contrast, H319 (Eye Irritation, Category 2A) is defined by ocular changes, such as conjunctival redness, edema, or corneal opacity, that are fully reversible within 21 days after exposure.

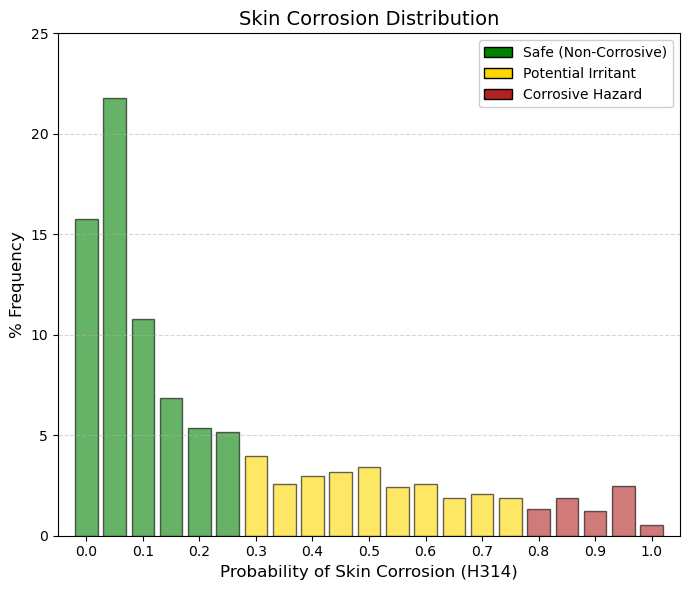

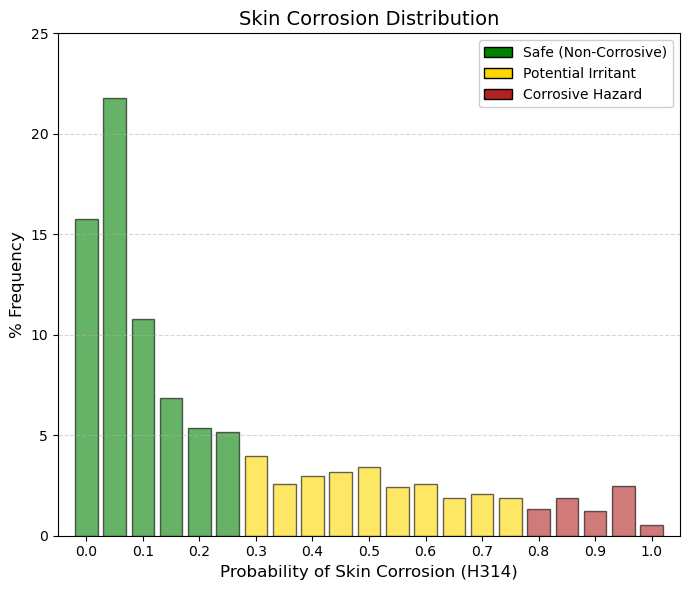

Skin corrosion.

At this point, it is important to emphasize the marked difference relative to ocular irritation, for which approximately 10% of drugs fall into bin 1.0 (maximum predicted toxicity), whereas for skin corrosion only about 0.5% of drugs occupy this extreme category. This discrepancy reflects fundamental anatomical and physiological differences between the eye and the skin. Whereas the eye is a moist and highly exposed mucosal surface, the skin is protected by the stratum corneum, a layer of dead, keratinized cells that functions as an efficient biological barrier. For a compound to be classified as H314 (skin corrosion), it must be sufficiently aggressive to penetrate and destroy this barrier, leading to necrosis of the underlying dermal tissue. Consequently, the vast majority of drugs are clustered in the low-risk region of the distribution. The ADMETsar predictions for this endpoint are derived from the OECD Test Guideline 404 (Acute Dermal Irritation/Corrosion). Low-probability values indicate that the compound does not induce observable skin damage after up to 4 hours of contact, whereas high-probability values correspond to compounds that produce visible dermal necrosis following exposure times ranging from 3 minutes to 4 hours.

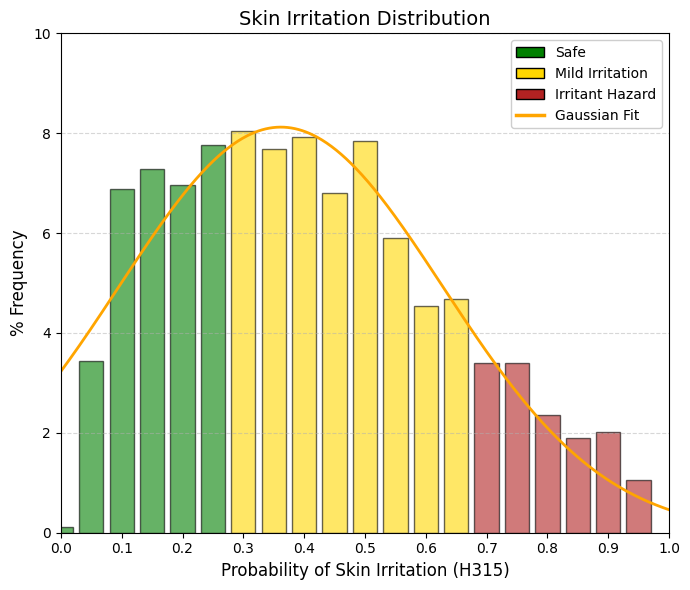

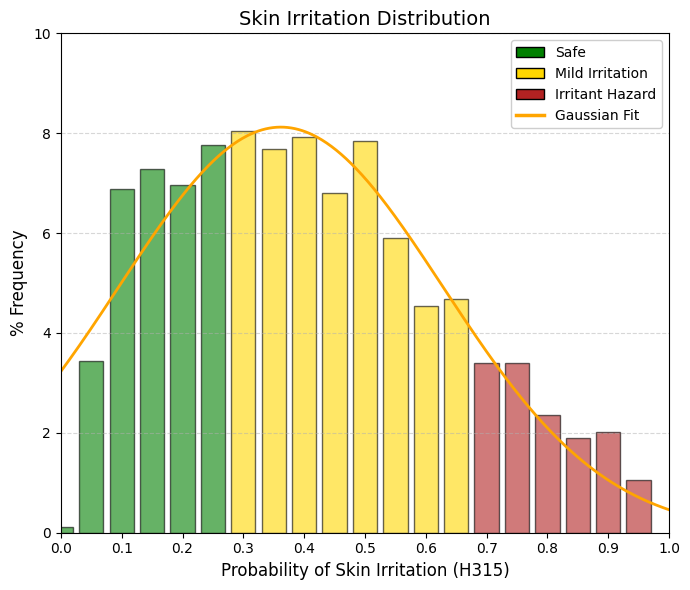

Skin irritation.

In contrast to skin corrosion, which displays a steeply declining distribution reflecting the rarity of truly corrosive compounds, skin irritation potential (H315) follows an approximately Gaussian distribution centered around 0.36. This pattern indicates that mild skin irritation, manifesting as transient redness or dryness, is a common and expected property of many pharmacologically active compounds. Most approved drugs occupy the central region of the distribution, corresponding to mild to moderate irritant potential, whereas only a small fraction of compounds are either completely non-irritating or highly aggressive. The stratum corneum generally withstands chemical insult but responds with inflammation, reflecting a temporary disruption of the skin’s lipid barrier. Such responses are not exceptional but rather typical, as the majority of drugs induce some degree of reversible perturbation of the cutaneous barrier. A key distinction emerges when comparing skin irritation with ocular irritation. In the H319 eye irritation distribution, approximately 10% of compounds occupy the extreme right bin (1.0), whereas in the H315 skin irritation distribution, the corresponding high-probability bin (0.95) accounts for only about 1% of drugs. This difference underscores the substantially greater resistance of skin to chemical irritation relative to the eye. The machine-learning model implemented in ADMETsar 3.0 accounts for this biological disparity by penalizing skin irritation less severely than ocular irritation. ADMETsar 3.0 was trained using compounds with established regulatory classifications under the United Nations’ Globally Harmonized System (GHS). Within this framework, the H315 label (“Causes skin irritation”) is assigned when a compound induces erythema (visible reddening due to inflammation) and/or edema (tissue swelling caused by fluid accumulation) in standardized assays, including OECD Test Guideline 404 or validated in vitro human skin models. Unlike skin corrosion, which involves irreversible tissue destruction, H315-classified irritation is fully reversible, with complete tissue recovery typically occurring within 14 days.
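To illustrate what "approximately Gaussian, centered around 0.36" means in practice, the sketch below estimates the centre and spread of a set of scores from their mean and standard deviation and evaluates the corresponding Gaussian density. It is a moment-based estimate rather than a least-squares curve fit, and the data are synthetic: only the centre of 0.36 comes from the text, while the spread of 0.12 and the sample size are assumptions.

```python
import math
import random
import statistics


def gaussian_pdf(x: float, mu: float, sigma: float) -> float:
    """Gaussian probability density with mean `mu` and std. dev. `sigma`."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


# Synthetic skin-irritation scores (NOT real ADMETsar output), clipped to [0, 1]
rng = random.Random(0)
scores = [min(1.0, max(0.0, rng.gauss(0.36, 0.12))) for _ in range(5000)]

# Moment-based estimates of the Gaussian parameters
mu = statistics.fmean(scores)
sigma = statistics.pstdev(scores)

print(f"estimated centre: {mu:.2f}, estimated spread: {sigma:.2f}")
print(f"density at the centre: {gaussian_pdf(mu, mu, sigma):.2f}")
```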

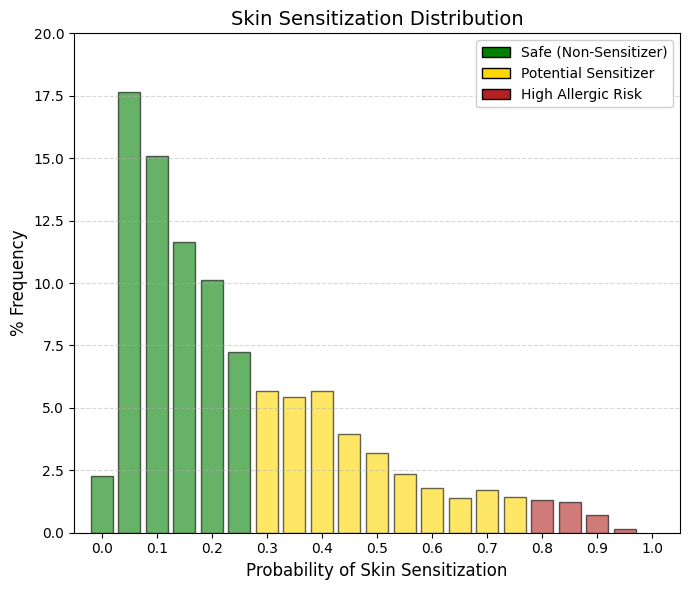

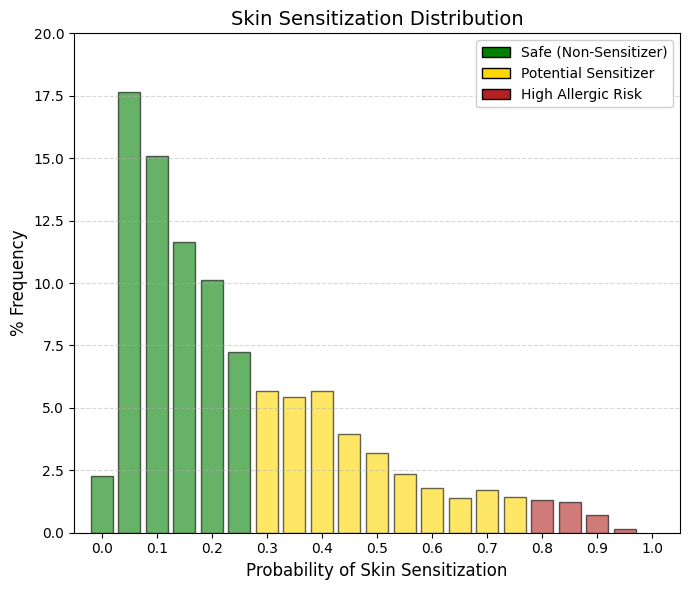

Skin sensitisation.

Sensitization is an immunological reaction. For it to occur, the drug molecule must act as a hapten: it covalently binds to proteins in the skin, and the resulting hapten-protein complex is subsequently recognized and attacked by T lymphocytes. A hapten is a small molecule that, by itself, is not capable of inducing a complete immune response. However, it can be recognized by the immune system when it covalently binds to a larger protein, known as a carrier protein. Upon binding to this protein, a hapten-protein complex is formed that can function as a complete antigen and trigger an immune response.

The relationship between haptens and T lymphocytes can be understood through the process of antigen presentation. When the hapten-protein complex enters the organism, it may be captured by antigen-presenting cells such as dendritic cells, macrophages, or B lymphocytes. These cells internalize the complex and degrade the carrier protein into small peptides. These peptides are then presented on the cell surface bound to major histocompatibility complex class II (MHC II) molecules. Helper T lymphocytes (T helper, CD4⁺) recognize these peptide-MHC complexes through their T-cell receptor (TCR) and become activated. A key aspect is that T lymphocytes do not recognize the hapten directly. Instead, they recognize peptides derived from the carrier protein that are presented in the MHC molecules of the antigen-presenting cell. Therefore, T-cell activation depends on the carrier rather than on the hapten itself. This characteristic explains why haptens must be bound to proteins in order to induce an effective immune response.

In contrast, B lymphocytes can directly recognize the hapten through their membrane receptor (BCR). When a B lymphocyte recognizes a hapten bound to a protein, it internalizes the entire complex. The carrier protein is then processed and fragments of this protein are presented on MHC II molecules. These peptide fragments are recognized by helper T lymphocytes that are specific for the carrier. Once activated, the T cells provide co-stimulatory signals and cytokines that stimulate the B lymphocyte to proliferate, differentiate, and produce antibodies specifically directed against the hapten. This mechanism is known as the hapten–carrier effect and represents a classic example of cooperation between T and B lymphocytes. Through this cooperation, the immune system can generate an effective response against small molecules that would otherwise not be immunogenic. This phenomenon also has clinical relevance, as certain drugs or chemical substances may act as haptens when they bind to proteins in the body, thereby triggering immune responses such as drug allergies or contact hypersensitivity reactions.

Fortunately, the vast majority of approved drugs do not possess the chemical reactivity required to initiate this immunological cascade. Consequently, the main peak in the dataset (almost 55%) is concentrated in the safest region (bins 0.05-0.20). Unlike irritation, which represents temporary tissue damage, sensitization creates immunological memory. Once a patient or worker becomes allergic, the hypersensitivity to that molecule will generally persist for life. For this reason, the filtering criteria applied here are strict and depend on the intended use:

🟢 GREEN (< 0.3): Safe and non-allergenic. These molecules are structurally inert toward skin proteins and do not form haptens. They are therefore ideal candidates for topical formulations such as creams, gels, transdermal patches, or medical cosmetics.

🟡 YELLOW (0.3-0.8): Moderate risk. These compounds display some degree of chemical reactivity. The immune system could recognize them if exposure is repeated or if the skin barrier is compromised. They are generally acceptable for oral or injectable administration. However, if formulated for topical use, additional clinical testing would be required to ensure that the applied dose does not cross the sensitization threshold.

🔴 RED (> 0.8): Confirmed sensitizers (immunological alert). These substances have a high probability of inducing allergic contact dermatitis, a T-cell-mediated reaction. A patch or cream containing compounds in this range would likely cause severe eczema in patients after only a few exposures. Although the drug may still be suitable for oral administration, it triggers a critical occupational safety alert. Chemical operators in manufacturing plants would need to use strict personal protective equipment (e.g., Tyvek suits and double nitrile gloves), because airborne drug powder could rapidly sensitize exposed workers.
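The three zones above, combined with the intended route of administration, can be expressed as a small lookup. This is a minimal sketch: the function names and the wording of the returned notes are hypothetical, and only the 0.3 and 0.8 boundaries come from the criteria listed above.

```python
def sensitisation_zone(score: float) -> str:
    """Map a predicted skin-sensitisation probability to a colour zone.

    Boundaries follow the criteria above: < 0.3 green, 0.3-0.8 yellow,
    > 0.8 red.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be a probability in [0, 1]")
    if score < 0.3:
        return "green"
    if score <= 0.8:
        return "yellow"
    return "red"


def sensitisation_advice(score: float, topical: bool) -> str:
    """Combine the zone with the intended route (hypothetical helper)."""
    zone = sensitisation_zone(score)
    if zone == "green":
        return "green: suitable for topical formulations"
    if zone == "yellow":
        if topical:
            return "yellow: extra clinical testing before topical use"
        return "yellow: generally acceptable for oral or injectable use"
    if topical:
        return "red: avoid topical use; occupational safety alert"
    return "red: oral use possible; occupational safety alert"


# Hypothetical compound with a moderate score, intended for a cream
print(sensitisation_advice(0.55, topical=True))
```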

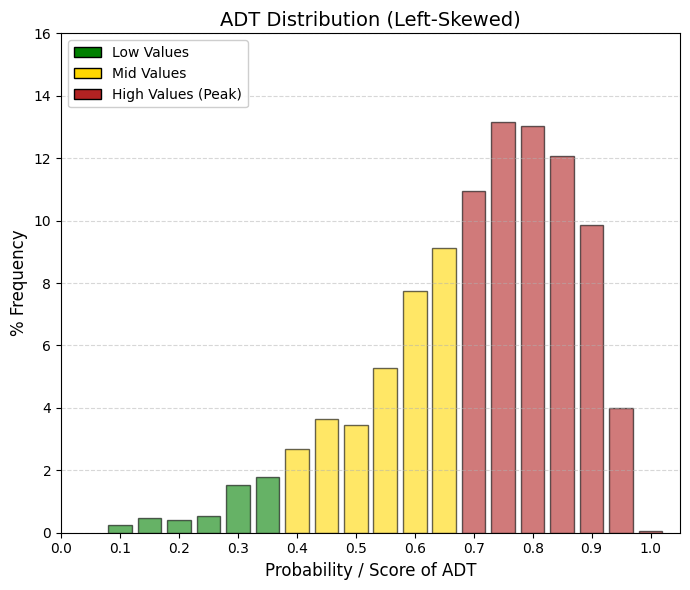

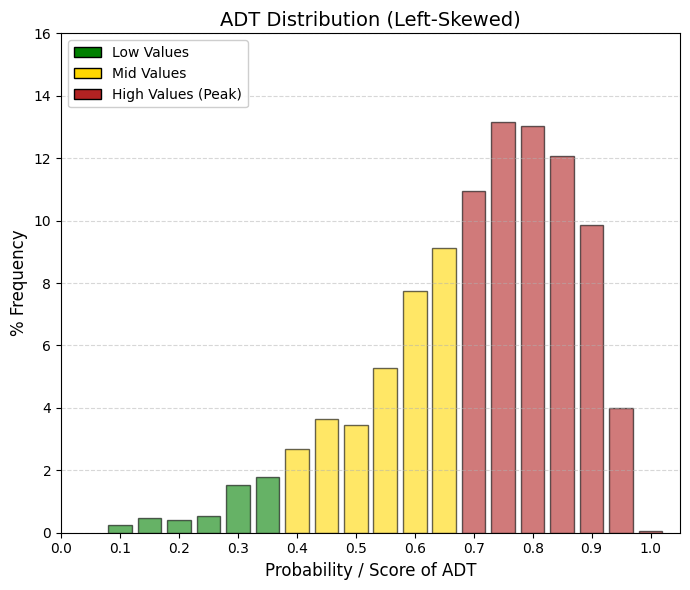

ADT: acute dermal toxicity.

This parameter is not used to determine whether a molecule is suitable as a drug, but rather to classify how it should be handled and manufactured.

🟢 GREEN (< 0.4): Biologically Mild Compounds. Even when applied in very large doses through the skin, these compounds do not produce severe systemic toxicity. They are typically large molecules with very limited skin penetration.

🟡 YELLOW (0.4-0.7): Moderate Systemic Toxicity.

🔴 RED (> 0.7): Highly Bioactive Compounds (industry standard). If such compounds cross the skin in their pure state and at high concentration, they may produce severe systemic effects. In practice, the molecule is assigned an occupational risk alert label. Chemical plant operators involved in the synthesis or handling of these substances must therefore use personal protective equipment (PPE)—including full protective suits and nitrile gloves—to prevent absorption of the pure powder through the skin.